Beyond Spurious Correlations: A Practical Guide to Handling Compositional Bias in Biomedical Data Analysis

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on identifying and correcting for compositional data bias in correlation analyses. Covering foundational concepts to advanced methodologies, it explains why standard correlation measures (like Pearson and Spearman) fail with relative data (e.g., microbiome abundances, proteomics, metabolomics) and introduces robust alternatives like proportionality metrics, log-ratio transformations, and Bayesian approaches. We detail step-by-step application workflows, troubleshooting common pitfalls, and validating results through simulation and benchmark studies. The guide synthesizes current best practices to ensure biological interpretations are driven by genuine associations, not mathematical artifacts of data closure.

The Hidden Pitfall: Why Compositional Data Breaks Standard Correlation and How to Spot It

Technical Support Center: Troubleshooting Compositional Data Analysis

Frequently Asked Questions (FAQs)

Q1: My correlation analysis between gene expression proportions yields spurious results. What is the fundamental issue? A1: The core issue is likely the "constant sum" constraint (e.g., all proportions in a sample sum to 1 or 100%). This closure induces negative bias in covariances, making standard correlation metrics (like Pearson) unreliable and non-interpretable.

Q2: How can I quickly identify if my dataset is compositional? A2: Check if each row of your data sums to the same total (e.g., 1, 100%, or a fixed library size in sequencing). If yes, it is compositional. The table below summarizes key characteristics.

Table 1: Characteristics of Compositional vs. Non-Compositional Data

| Feature | Compositional Data | Standard Multivariate Data |
|---|---|---|
| Sum Constraint | Each sample sums to a constant (e.g., 1). | No fixed sum per sample. |
| Information Carried | Relative information (ratios between parts). | Absolute information. |
| Covariance Structure | Artificially negative; non-invertible. | Unconstrained. |
| Appropriate Analysis | Log-ratio transformations (e.g., CLR, ILR). | Standard statistical methods. |
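The row-sum check from A2 can be sketched in a few lines of Python. This is a minimal illustration using made-up values; the function name `is_closed` and the tolerance are assumptions, not part of any package. Note that failing the check does not prove the data are absolute: raw sequencing counts with varying depth still carry only relative information.

```python
import math

def is_closed(rows, rel_tol=1e-6):
    """Return True when every row sums to the same total (within tolerance)."""
    totals = [sum(row) for row in rows]
    return all(math.isclose(t, totals[0], rel_tol=rel_tol) for t in totals)

# Illustrative values only (hypothetical data).
proportions = [[0.2, 0.3, 0.5], [0.6, 0.1, 0.3]]   # each row sums to 1
raw_counts = [[20, 30, 50], [600, 10, 30]]         # row totals differ

print(is_closed(proportions))
print(is_closed(raw_counts))
```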

Q3: Why does applying a log transformation not fully solve the compositionality problem? A3: A simple log transform (e.g., log(x)) still operates on the original proportions, which remain constrained by the constant sum. Log-ratio transformations (like CLR) instead express each part relative to a reference, which removes the dependence on the arbitrary total and moves the analysis into real Euclidean space.

Troubleshooting Guides

Issue: Inflated False Positives in Feature Correlation Networks

- Symptoms: Dense correlation networks with many strong negative correlations when analyzing proportional data from metabolomics or microbiome studies.
- Diagnosis: This is a classic sign of compositional bias. The constant sum forces a zero-sum relationship: if one component increases, others must decrease on average, creating artificial negative correlations.
- Solution: Apply a Centered Log-Ratio (CLR) transformation prior to calculating correlations.

Experimental Protocol: CLR Transformation for Correlation Analysis

- Input: Raw composition matrix `X` with `n` samples (rows) and `D` components/parts (columns).
- Handle Zeros: Replace any zero values with a sensible pseudo-count (e.g., using the `cmultRepl` function from the R `zCompositions` package or `multiplicative_replacement` from Python's `scikit-bio`).
- Calculate Geometric Mean: For each sample `i`, compute the geometric mean `g(x_i)` of all `D` parts.
- Transform: Compute the CLR for each part `j` in sample `i`: `clr(x_ij) = log( x_ij / g(x_i) )`.
- Output: A transformed matrix `Z` of the same dimensions, now approximately free from the sum constraint.
- Analysis: Calculate correlations (e.g., Pearson) on matrix `Z`. Dedicated methods such as SparCC work from the count data directly rather than from `Z`. Interpret correlations as relative associations between components.
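The transform step of this protocol can be sketched in plain Python as follows. This is a minimal illustration that assumes zeros have already been replaced; the helper names `clr` and `clr_matrix` are chosen here for illustration and are not the API of any package named above.

```python
import math

def clr(sample):
    """Centered log-ratio of one sample; every part must be strictly positive."""
    if any(v <= 0 for v in sample):
        raise ValueError("replace zeros before applying CLR")
    logs = [math.log(v) for v in sample]
    mean_log = sum(logs) / len(logs)  # log of the geometric mean g(x_i)
    return [value - mean_log for value in logs]

def clr_matrix(X):
    """Apply CLR row by row to an n x D matrix given as a list of lists."""
    return [clr(row) for row in X]

# Illustrative values only (hypothetical data).
X = [[0.2, 0.3, 0.5], [0.6, 0.1, 0.3]]
Z = clr_matrix(X)
print(Z)
```

Each row of `Z` sums to zero, which is why the CLR covariance matrix is singular, and rescaling a sample by any positive constant leaves its CLR coordinates unchanged.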

Issue: Unstable or Uninterpretable Coefficients in Regression with Proportions

- Symptoms: Regression model coefficients shift dramatically when adding or removing a variable from the compositional predictor set.
- Diagnosis: Multicollinearity caused by the redundancy in the closed data (one variable is determined by all others).
- Solution: Use Isometric Log-Ratio (ILR) transformation to create orthonormal coordinates before regression.

Experimental Protocol: ILR Transformation for Regression Modeling

- Input: Raw composition matrix `X` (n x D).
- Sequential Binary Partition (SBP): Define a `(D-1) x D` sign matrix that encodes a hierarchical series of balances between groups of parts. (Use expert knowledge or a default sequential partition.)
- Calculate Balances: For each ILR coordinate `k` (where k=1,...,D-1), compute `ilr_k = sqrt( (r_k * s_k) / (r_k + s_k) ) * log( g(x_+) / g(x_-) )`, where `r_k` and `s_k` are the number of parts in the `+1` and `-1` groups for balance `k`, and `g(x_+)` and `g(x_-)` are the geometric means of those respective part groups.
- Output: A transformed matrix `Y` of dimensions `n x (D-1)`. These coordinates are orthonormal in the Euclidean space.
- Analysis: Use standard regression on `Y`. Coefficients can be back-transformed to the original composition space for interpretation in terms of balances.
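The balance formula above can be sketched directly from a sign matrix. This is a minimal illustration; the function name `ilr_balances` and the 3-part partition are assumptions made for the example, and the code does not check that the sign matrix is a valid sequential binary partition.

```python
import math

def ilr_balances(sample, sbp):
    """Compute one balance per SBP row: sqrt(r*s/(r+s)) * log(g(x_+)/g(x_-))."""
    logs = [math.log(v) for v in sample]
    coords = []
    for signs in sbp:
        plus = [l for l, s in zip(logs, signs) if s == 1]
        minus = [l for l, s in zip(logs, signs) if s == -1]
        r, s_count = len(plus), len(minus)
        scale = math.sqrt(r * s_count / (r + s_count))
        # Difference of mean logs equals the log of the ratio of geometric means.
        log_ratio = sum(plus) / r - sum(minus) / s_count
        coords.append(scale * log_ratio)
    return coords

# Hypothetical partition for D = 3: {part 1, part 2} vs {part 3}, then part 1 vs part 2.
sbp = [[1, 1, -1],
       [1, -1, 0]]
y = ilr_balances([0.2, 0.3, 0.5], sbp)
print(y)
```

With a valid partition, the Euclidean length of the balance vector equals the length of the sample's CLR vector, which is what "isometric" refers to.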

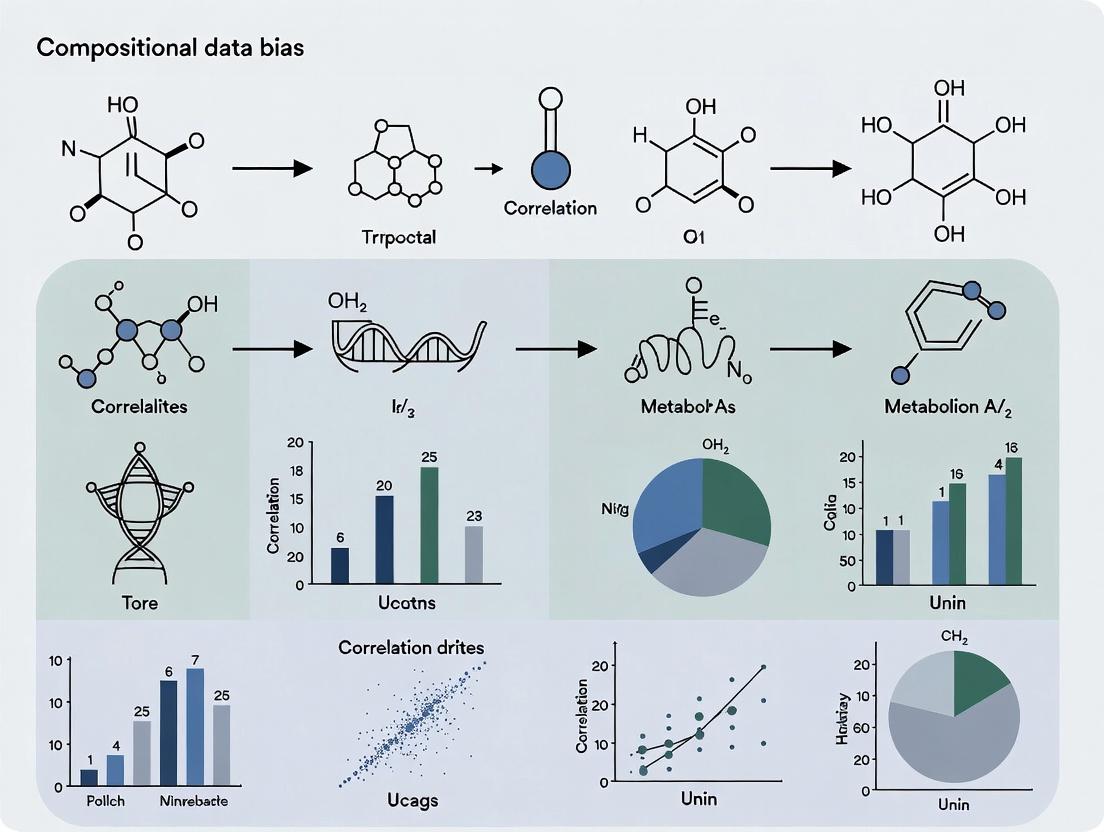

Visualizing the Problem and Solution

Title: The Compositional Data Analysis Problem and Solution Pathway

Title: Standard Workflow for Reliable Compositional Data Analysis

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Packages for Compositional Data Analysis

| Tool/Reagent | Function/Purpose | Example/Platform |
|---|---|---|
| CoDA R Packages | Core statistical suite for CoDA. | compositions, robCompositions, zCompositions |
| SparCC | Estimates correlations from sparse compositional data (e.g., microbiome). | R (SpiecEasi), standalone Python script |
| ALDEx2 | Differential abundance for high-throughput sequencing data using CLR. | R/Bioconductor package |
| ANCOM-BC | Accounts for compositionality in differential abundance analysis. | R package |
| scikit-bio | Python toolkit for bioinformatics, includes CoDA methods. | Python library (skbio.stats.composition) |
| Pseudo-count Reagents | Handles zeros, which are undefined in log-ratios. | R: zCompositions::cmultRepl; Python: skbio.stats.composition.multiplicative_replacement |
| Balance SBP Designer | Aids in creating meaningful ILR coordinate balances. | R: robCompositions::findBalances; Expert knowledge |
| Log-ratio Friendly PCA | PCA on CLR-transformed data (or via compositions::princomp.acomp). | R: compositions, stats |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: I am analyzing microbiome relative abundance data. My Pearson correlation between two species appears strong and significant (r=0.89, p<0.001), but my colleague says this is a spurious correlation due to the closed-sum (constant total) constraint. How can I diagnose if this is a real or illusory correlation?

A1: This is a classic symptom of compositional data bias. The constant total (e.g., 100% relative abundance, 1.0 proportion) induces negative bias and spurious correlations. To diagnose:

- Apply a log-ratio transformation (e.g., centered log-ratio, CLR) to your data to move it from the simplex to real space.

- Re-calculate the correlation on the transformed data.

- Compare results. A drastic change in magnitude or sign indicates spuriousness.

Diagnostic Table: Example Comparison

| Analysis Method | Correlation Coefficient (Species A vs. B) | P-value | Interpretation |
|---|---|---|---|
| Raw Relative Abundance (Pearson) | 0.89 | <0.001 | Potentially spurious |
| CLR-Transformed Data (Pearson) | 0.12 | 0.42 | Likely no true correlation |

Protocol 1: Diagnostic CLR Transformation

- Let `x` = vector of D compositional parts (e.g., species abundances) for one sample.
- Calculate the geometric mean: `g(x) = (x1 * x2 * ... * xD)^(1/D)`.
- Apply CLR: `clr(x) = [ln(x1/g(x)), ln(x2/g(x)), ..., ln(xD/g(x))]`.
- Repeat for all samples to create a new transformed data matrix.
- Perform standard correlation analysis on the CLR-transformed matrix.

Q2: After using Aitchison's log-ratio methods, my covariance matrix is singular. What is the cause and solution?

A2: Singularity is expected. CLR coordinates sum to zero within every sample, so each coordinate is a linear combination of the others and the CLR covariance matrix has rank D-1.

Solution: Work in a (D-1)-dimensional coordinate system instead. You can do this by:

- Using additive log-ratio (ALR) coordinates, which express D-1 parts relative to one chosen reference part.
- Or using a pivot coordinate transformation, which creates orthonormal coordinates tied to a specific sequential binary partition of the components.

Protocol 2: Building a Pivot Coordinate System

- For a D-part composition, D different pivot coordinate systems can be built, one for each part placed in the leading position.
- For the pivot coordinate focusing on part k:
  - Numerator: `x_k`.
  - Denominator: Geometric mean of all other parts `x_{i≠k}`.
  - Scaling: Multiply the log of this ratio by a normalization factor based on the number of parts, `sqrt((D-1)/D)`.
- Each system yields D-1 orthonormal, interpretable coordinates for multivariate analysis; the leading coordinate carries all the relative information about part k.
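The leading pivot coordinate for a chosen part can be sketched as below. This is a minimal illustration under the usual definition (scaling factor `sqrt((D-1)/D)`); the function name `pivot_coordinate` is an assumption and this is not the implementation used by `robCompositions`.

```python
import math

def pivot_coordinate(sample, k):
    """Leading pivot coordinate for part k: scaled log-ratio of x_k to the
    geometric mean of all remaining parts."""
    D = len(sample)
    log_others = [math.log(v) for i, v in enumerate(sample) if i != k]
    log_gm_others = sum(log_others) / (D - 1)
    return math.sqrt((D - 1) / D) * (math.log(sample[k]) - log_gm_others)

# Illustrative values only (hypothetical 4-part composition).
x = [0.1, 0.2, 0.3, 0.4]
z1 = pivot_coordinate(x, 0)
print(z1)
```

A useful sanity check is that this coordinate is the CLR value of part k multiplied by `sqrt(D/(D-1))`, so it orders samples exactly as the CLR coordinate does while belonging to a full-rank coordinate system.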

Q3: In drug development, how do I correctly correlate biomarker ratios (e.g., IL-6/IL-10) with clinical outcome scores without introducing ratio bias?

A3: Correlating pre-formed ratios is statistically problematic. The recommended method is to use log-ratio analysis.

Experimental Protocol:

- Do not correlate the raw ratio. Keep the two biomarker concentrations (IL-6, IL-10) as separate variables in your dataset.
- Create a single log-ratio variable: `ln(IL-6 / IL-10)`. This is equivalent to `ln(IL-6) - ln(IL-10)`.
- Use this log-ratio variable in your correlation or regression model with the clinical outcome.
- Interpretation: The coefficient represents the change in outcome per unit change in the log-ratio (i.e., the relative balance of the two biomarkers).
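The protocol can be sketched with a few made-up measurements. All values below are hypothetical and only illustrate the mechanics; the `pearson` helper is written out so the example needs nothing beyond the standard library.

```python
import math

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical concentrations (pg/mL) and clinical scores, for illustration only.
il6 = [4.1, 12.5, 7.3, 20.2, 3.0, 9.8]
il10 = [5.0, 6.2, 4.8, 5.5, 6.0, 7.1]
outcome = [2, 6, 4, 9, 1, 5]

log_ratio = [math.log(a / b) for a, b in zip(il6, il10)]
r = pearson(log_ratio, outcome)

# Swapping numerator and denominator only flips the sign of the log-ratio.
r_inverse = pearson([-v for v in log_ratio], outcome)
print(r, r_inverse)
```

One reason to prefer the log-ratio is this symmetry: `ln(IL-6 / IL-10)` and `ln(IL-10 / IL-6)` give correlations of equal size and opposite sign, whereas the raw ratio and its reciprocal generally do not.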

Research Reagent Solutions & Essential Materials

| Item | Function in Compositional Data Analysis |
|---|---|
| Robust Compositional Dataset | A dataset with many samples and parts, ideally with some known zeroes (missing species/low abundance) to test replacement strategies. |
| Zero-Replacement Library (e.g., zCompositions R package) | Software tools to properly impute rounded zeros (values below the detection limit) in compositions before log-ratio transformation. |
| CoDA Software Suite (compositions, robCompositions in R) | Specialized packages for performing isometric log-ratio transformations, pivot coordinate analysis, and robust compositional statistics. |
| Geometric Mean Calculator | Fundamental for calculating the denominator in the centered log-ratio (CLR) transformation. |
| Visualization Tool (Ternary plots, Balance dendrograms) | For visualizing data in simplex space (ternary diagrams) and interpreting balances from sequential binary partitions. |

Key Methodological Diagrams

Title: Core Workflow for Correcting Compositional Data Bias

Title: Closure Constraint Inducing Spurious Correlation in a 3-Part Simplex

Title: Standard Protocol for Compositional Data Analysis

Troubleshooting Guides & FAQs

Q1: Our microbial relative abundance data shows strong negative correlations between two highly prevalent genera. Are these biologically real or a compositional data artifact?

A: This is a classic symptom of compositional bias. In closed-sum data (like 16S rRNA sequencing), an increase in one component forces an apparent decrease in others, inducing spurious negative correlations. The correlation may be misleading.

Troubleshooting Protocol:

- Apply a Compositional-Aware Transform: Use a centered log-ratio (CLR) transformation.
  - Method: For each sample, take the natural log of each component (e.g., genus count), then subtract the log of the geometric mean of all components in that sample (equivalently, the mean of the logs).
  - Formula: `CLR(x_i) = ln(x_i / g(x))`, where `g(x)` is the geometric mean.
  - This transforms data to Euclidean space, mitigating the closure effect.
- Use SparCC or proportionality metrics: These methods are designed for compositional data. SparCC (Sparse Correlations for Compositional data) iteratively estimates correlations based on log-ratios.
- Validation: Conduct a permutation test or validate on an independent cohort with different compositional profiles.
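As an illustration of the proportionality metrics mentioned in this protocol, the sketch below computes the rho statistic, `1 - var(clr_i - clr_j) / (var(clr_i) + var(clr_j))`, on a tiny made-up table. The function names and data are assumptions for the example; dedicated packages add zero handling and significance assessment that are omitted here.

```python
import math
from statistics import variance

def clr(sample):
    """Centered log-ratio of one strictly positive sample."""
    logs = [math.log(v) for v in sample]
    mean_log = sum(logs) / len(logs)
    return [l - mean_log for l in logs]

def rho_proportionality(X, i, j):
    """Proportionality between parts i and j, computed on CLR coordinates."""
    Z = [clr(row) for row in X]
    zi = [row[i] for row in Z]
    zj = [row[j] for row in Z]
    diff = [a - b for a, b in zip(zi, zj)]
    return 1 - variance(diff) / (variance(zi) + variance(zj))

# Hypothetical counts: part B is always twice part A; part C moves the other way.
X = [[1, 2, 7], [2, 4, 4], [3, 6, 1]]
rho_ab = rho_proportionality(X, 0, 1)
rho_ac = rho_proportionality(X, 0, 2)
print(rho_ab, rho_ac)
```

Here `rho_ab` is 1 because the ratio A/B never changes, while `rho_ac` is strongly negative because A and C trade off against each other.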

Q2: After running differential expression analysis on our RNA-seq data, we identified a key pathway. How can we be sure the results aren't confounded by differences in library size or cellular composition?

A: Library size differences are a major technical confounder. Cellular composition shifts (e.g., varying immune cell infiltration in tumor samples) can also drive misleading "differential expression" in bulk tissue data.

Troubleshooting Protocol:

- For Library Size:
  - Use compositionally robust normalization methods like Trimmed Mean of M-values (TMM) or Relative Log Expression (RLE). Do not rely solely on counts per million (CPM) or reads per kilobase per million (RPKM) without prior effective library size normalization.
- For Cellular Composition:
  - Deconvolution: Use tools like CIBERSORTx or MuSiC to estimate cell-type proportions from bulk data using a reference signature matrix.
  - Statistical Adjustment: Include the estimated cell proportions as covariates in your linear model for differential expression (e.g., in `DESeq2` or `limma`).
  - Single-Cell Validation: If possible, validate key findings with a matched single-cell RNA-seq experiment to directly assess cell-type-specific expression.

Q3: In our clinical metabolomics study, we see strong correlations between certain serum metabolites and disease severity. How do we rule out that these are not driven by a latent variable like overall inflammation or renal function?

A: Unmeasured confounders are a pervasive source of misleading findings in clinical omics.

Troubleshooting Protocol:

- Measure and Adjust: Always collect and measure key clinical covariates (e.g., CRP for inflammation, creatinine clearance for renal function). Include them as adjustment variables in multivariate regression models.
- Sensitivity Analysis: Perform an E-value analysis.
  - Method: The E-value quantifies the minimum strength of association an unmeasured confounder would need to have with both the exposure (metabolite) and the outcome (severity) to fully explain away the observed association.
  - Calculate the E-value using published formulas or the R package `EValue`. A small E-value (close to 1) suggests fragility to confounding.
- Mendelian Randomization (MR): If genetic data is available, use MR as a method to test for potential causal relationships, which is more robust to confounding.
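For the sensitivity-analysis step, the E-value for a risk ratio follows the published closed form `RR + sqrt(RR * (RR - 1))`, with protective estimates inverted first. The sketch below implements only that point-estimate formula; the function name `e_value` is an assumption, and hazard or odds ratios need additional assumptions (such as a rare outcome) or the approximations provided by the `EValue` package before the formula applies.

```python
import math

def e_value(rr):
    """E-value for a risk-ratio point estimate (risk-ratio scale only)."""
    if rr <= 0:
        raise ValueError("risk ratio must be positive")
    if rr < 1:
        rr = 1 / rr  # treat protective associations symmetrically
    return rr + math.sqrt(rr * (rr - 1))

# Illustrative input: a risk ratio of 1.9.
print(round(e_value(1.9), 2))
```

Under these assumptions, a risk ratio of 1.9 gives an E-value of roughly 3.2: an unmeasured confounder would need to be associated with both exposure and outcome by a risk ratio of about 3.2 each to fully explain away the estimate.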

Table 1: Summary of Case Studies Highlighting Compositional & Confounding Bias

| Case Study Domain | Primary Finding (Before Correction) | Artifact Source | Corrective Method Applied | Post-Correction Result |
|---|---|---|---|---|
| Gut Microbiome (16S) | Strong negative correlation (-0.82) between Bacteroides and Prevotella | Compositional Closure (Spurious Correlation) | SparCC Analysis & CLR Transformation | Correlation reduced to non-significant (0.15, p=0.21) |
| Bulk Tumor RNA-seq | 150 genes differentially expressed (DE) in Tumor vs. Normal | Varying Lymphocyte Infiltration (Cell Composition) | Deconvolution + Prop. Adjustment in limma | Only 47 genes remained DE (FDR<0.05) |
| Serum Metabolomics | Choline levels correlated with CVD risk (HR=1.9, p<0.001) | Confounding by Kidney Function (eGFR) | Multivariate Cox Model with eGFR covariate | Association attenuated (HR=1.3, p=0.09) |
| Proteomic Abundance | High correlation (r=0.91) between Protein A and Protein B | Batch Effect & Shared Missingness Pattern | ComBat Batch Correction + MNAR Imputation | Correlation revised to moderate (r=0.45) |

Key Experimental Protocols

Protocol 1: Implementing CLR Transformation for Microbiome Data

- Input: Raw OTU or ASV count table.
- Pseudocount: Add a pseudocount of 1 (or a proportion of the minimum positive count) to all zeros.
- Calculate Geometric Mean: For each sample j, compute the geometric mean `g(x_j)` of all components.
- Transform: For each component i in sample j, compute `ln(x_ij / g(x_j))`.
- Downstream Analysis: Use the CLR-transformed matrix for Pearson correlation or standard multivariate tests.

Protocol 2: Differential Expression with Cell-Type Proportion Adjustment

- Estimate Proportions: Use a deconvolution tool (e.g., CIBERSORTx) with an appropriate leukocyte gene signature (LM22) or tissue-specific reference to estimate cell-type proportions for each bulk sample.

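- Integrate into DESeq2: Add the estimated proportions as covariates in the design formula, with the variable of interest last (e.g., `~ lymphocyte_fraction + condition`, where both column names are placeholders).

- Integrate into limma-voom: Include the same covariates as columns of the design matrix passed to `voom` and `lmFit`. Because the proportions sum to 1, leave one cell type out to avoid collinearity with the intercept.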

Diagrams

Title: Workflow for Addressing Compositional Bias in Omics Data

Title: Unmeasured Confounder Inducing Spurious Association

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Robust Analysis Against Compositional & Confounding Bias

| Item / Tool | Function & Application |
|---|---|
| Centered Log-Ratio (CLR) | Core mathematical transform to move compositional data from simplex to real space for standard stats. |
| SparCC / PRO | Algorithm specifically designed to estimate correlation networks from compositional data (e.g., microbiome). |
| CIBERSORTx | Computational deconvolution tool to estimate cell-type fractions from bulk tissue transcriptomic data. |
| E-Value Calculator | Sensitivity analysis metric to assess robustness of observed associations to potential unmeasured confounding. |
| R/Bioconductor (compositions, zCompositions, limma) | Essential software packages for implementing CLR, dealing with zeros, and covariate-adjusted modeling. |
| Reference Signature Matrices (e.g., LM22 for immune cells) | Required reference for deconvolution tools to quantify specific cell-type abundances in bulk mixtures. |
| Silicon Beads / Mock Communities (for microbiome) | Physical controls with known compositions to benchmark and validate bioinformatic pipelines for bias. |
| Pooled QC Samples (for metabolomics/proteomics) | Technical replicates injected throughout LC-MS run sequence to monitor and correct for batch drift. |

Troubleshooting Guides & FAQs

Q1: My PCA biplot shows all variables clustered tightly together at the origin, making interpretation impossible. What is wrong? A: This is a classic symptom of the constant-sum constraint inherent to compositional data (e.g., percentages, proportions). When all parts must sum to 100%, it induces spurious negative correlations. The solution is to apply a log-ratio transformation before PCA.

- Protocol: Use a centered log-ratio (CLR) transformation.
  - Let `x` be a vector of D compositional parts (e.g., gene counts, mineral percentages).
  - Calculate the geometric mean of `x`: `g(x) = (∏ x_i)^(1/D)`.
  - Transform each component: `clr(x_i) = log( x_i / g(x) )`.
  - Perform PCA on the CLR-transformed data matrix.
- Check: Ensure your data is closed (sums to a constant). If so, raw PCA is invalid.

Q2: After a CLR transformation, my PCA triplot (samples, variables, supplementary constraints) shows unstable, non-reproducible axes when I add new data. How do I fix this? A: Instability often arises from singular covariance matrices due to zeros in your dataset (common in microbiome or metabolomics data). The CLR transformation cannot handle zeros.

- Protocol: Implement a zero-imputation strategy.
  - Identify zeros: Count zeros per component.
  - Choose method:
    - For few zeros (<5% per component): Use multiplicative replacement (e.g., `zCompositions` R package).
    - For many zeros: Use a more advanced model-based imputation (e.g., Bayesian multiplicative replacement).
  - Re-run CLR-PCA on the imputed dataset.
- Alternative: Use robust covariance estimators or consider Aitchison's pivot coordinates for a sub-compositional analysis.

Q3: In my triplot, the angles between variable arrows no longer represent correlations. What do they mean now? A: In a log-ratio (CLR) PCA biplot/triplot, the cosine of the angle between two arrows approximates the correlation between the corresponding CLR-transformed parts, not between the raw proportions. The distance between two arrow tips (the link) approximates the standard deviation of the log-ratio of the two parts. Arrows that point the same way with tips close together indicate a proportional relationship between the two parts; an obtuse angle indicates a substitutive relationship. For compositions, these log-ratio quantities are more meaningful than Pearson correlations of raw proportions.

- Diagnostic Table:

| Angle (approx.) | Cosine Value | Interpretation in Log-Ratio Space |
|---|---|---|
| ~0° | ~1 | Parts vary in direct proportion (small log-ratio variance when arrow lengths are similar). |
| 90° | 0 | CLR coordinates are approximately uncorrelated; parts are unrelated. |
| 180° | -1 | Parts are substitutive (one increases, the other decreases); log-ratio variance is large. |

Q4: How do I correctly project supplementary elements (e.g., experimental conditions, drug doses) onto my triplot without distorting the compositional structure? A: Supplementary elements must be projected passively so they do not influence the PCA solution derived from the compositional data.

- Protocol: Passive projection of supplementary variables.
  - Calculate the PCA solution only from the CLR-transformed compositional matrix `X`.
  - For a supplementary quantitative variable `z`, center it to have mean zero.
  - Project `z` onto the PCA space by regressing `z` on the principal component scores of the active samples: `z_proj = scores * coeff`, where `coeff` are regression coefficients.
  - Plot the projected direction as a supplementary arrow. Its length and direction show its relationship with the compositional PCA space.

Key Experimental & Analytical Protocols

Protocol 1: Validating Compositional Bias in Correlation Analysis

Objective: Demonstrate that raw correlations between compositional parts are biased.

- Simulate Data: Generate a neutral 3-part composition `A, B, C` from a Dirichlet distribution.
- Calculate Raw Correlations: Compute Pearson correlation between `A` and `B`.
- Introduce a "Bath" Variable: Dilute the composition with a random non-informative fourth part `D` (e.g., adding solvent or an unrelated variable).
- Re-calculate: Compute Pearson correlation between `A/(sum(A,B,C,D))` and `B/(sum(A,B,C,D))`.
- Result: The correlation shifts even though the underlying relationship between `A` and `B` is unchanged, demonstrating the bias.
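The simulation can be sketched with the standard library alone. The Dirichlet parameters (8, 6, 2) are taken from the note under Table 1; everything else is an assumption made for this sketch, in particular the uniform distribution of the diluting part `D`, its independence from the other parts, the seed, and the sample size. The direction and size of the shift depend on how `D` behaves, so the numbers produced here need not match any table.

```python
import math
import random

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def dirichlet(alphas, rng):
    """Draw one Dirichlet sample by normalizing independent gamma draws."""
    draws = [rng.gammavariate(a, 1.0) for a in alphas]
    total = sum(draws)
    return [d / total for d in draws]

rng = random.Random(0)          # fixed seed for reproducibility
A, B, A_dil, B_dil = [], [], [], []
for _ in range(5000):
    a, b, c = dirichlet((8, 6, 2), rng)
    d = rng.uniform(0.0, 3.0)   # assumed: independent, non-informative "bath" part
    total = a + b + c + d       # re-close the 4-part composition
    A.append(a)
    B.append(b)
    A_dil.append(a / total)
    B_dil.append(b / total)

r_raw = pearson(A, B)
r_diluted = pearson(A_dil, B_dil)
print(r_raw, r_diluted)
```

In this setup the raw correlation is strongly negative purely because of closure, and it moves upward after dilution because `A` and `B` are divided by the same random total. Neither value reflects a biological relationship, which is the point of the exercise.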

Protocol 2: Building a Diagnostic PCA Triplot for Compositional Data

Objective: Create a triplot to visualize sample structure, variable relationships, and external constraints.

- Preprocess: Apply CLR transformation (with appropriate zero-handling) to your `n x p` compositional data matrix.

- Perform PCA: Center the CLR data (mean=0) and perform SVD. Extract scores (sample coordinates), loadings (variable coordinates), and variance explained.

- Scale for Biplot: Scale sample scores by singular values and variable loadings correspondingly to create a symmetrical biplot.

- Add Supplementary Data: Passively project external continuous variables (e.g., pH, dose) or categorical factors (e.g., treatment group centroids) onto the biplot space using regression or averaging.

- Visualize: Plot samples as points, compositional variables as arrows, and supplementary variables as distinct symbols/arrows.

Data Presentation

Table 1: Comparison of Correlation Measures for Simulated Compositional Data

| Part Pair | Pearson (Raw %) | Pearson (After Dilution) | Aitchison's Log-Ratio Variance | Correct Interpretation |
|---|---|---|---|---|
| A vs B | 0.15 | -0.42 | 0.85 | Near-neutral proportionality |
| A vs C | -0.68 | -0.82 | 2.15 | High substitutive relationship |
| B vs C | -0.55 | -0.71 | 1.92 | High substitutive relationship |

Data simulated from a Dirichlet distribution with parameters (8, 6, 2). Dilution introduced 30% random noise.
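The log-ratio variance column corresponds to entries of Aitchison's variation matrix, `var(ln(x_i / x_j))` across samples. A minimal sketch follows; the function name and the small data set are hypothetical and are not the simulated data behind the table.

```python
import math
from statistics import variance

def variation_matrix(X):
    """Symmetric D x D matrix of var(ln(x_i / x_j)) computed across samples."""
    D = len(X[0])
    T = [[0.0] * D for _ in range(D)]
    for i in range(D):
        for j in range(i + 1, D):
            log_ratios = [math.log(row[i] / row[j]) for row in X]
            T[i][j] = T[j][i] = variance(log_ratios)
    return T

# Hypothetical counts: part B is always twice part A; part C moves the other way.
X = [[1, 2, 7], [2, 4, 4], [3, 6, 1]]
T = variation_matrix(X)
for row in T:
    print([round(v, 3) for v in row])
```

An entry near zero means the two parts keep a constant ratio (proportional); large entries indicate parts whose ratio varies widely. Because only ratios enter the calculation, the result is unaffected by closure or by rescaling any sample.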

Mandatory Visualizations

Diagnostic Triplot Creation Workflow

Source of Compositional Correlation Bias

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in Compositional Data Analysis |
|---|---|
| Log-Ratio Transformations (CLR, ALR, ILR) | Core mathematical operation to open closed data, allowing application of standard multivariate methods. |
| Zero-Imputation Packages (zCompositions in R, scikit-bio in Python) | Handle essential zeros in count data to enable log transformations. |
| Robust PCA Algorithms (ROBPCA) | Mitigate influence of outliers that are exaggerated in log-ratio space. |
| CoDaPack / robCompositions | Dedicated software suites for comprehensive compositional data analysis. |
| Simplex Visualization Tools (Ternary Diagrams) | Initial diagnostic plotting to view raw compositions in their natural 3-part space. |

The Toolbox for Valid Analysis: From Log-Ratios to Proportionality Measures

Technical Support Center: Troubleshooting Compositions

Troubleshooting Guides

Guide 1: Correlation Analysis Yields Spurious Results with Raw Compositional Data

- Problem: A researcher calculates Pearson correlations between components (e.g., mineral concentrations, gene relative abundances) and obtains strong negative correlations that may be statistical artifacts of the closed sum (e.g., 100% or 1) constraint.

- Diagnosis: This is a classic symptom of compositional data bias. The sample space of compositions is the simplex, not real Euclidean space, violating standard correlation assumptions.

- Solution: Apply the log-ratio paradigm. Transform the data using one of Aitchison's log-ratio methods (CLR, ILR, ALR) before performing correlation analysis.

- Protocol:

- Preprocessing: Handle zeros if present (e.g., using a multiplicative replacement strategy).

- Transformation: Choose a reference (see FAQs) and apply the log-ratio transform.

- Analysis: Conduct correlation analysis (e.g., Pearson, Spearman) on the transformed, open data.

- Back-Interpretation: Interpret results in the context of relative, not absolute, differences.

Guide 2: PCA Biplot Shows Distorted Distances Between Samples

- Problem: Principal Component Analysis (PCA) on raw compositional data produces a biplot where the distances between sample points do not reflect their true compositional differences.

- Diagnosis: PCA uses Euclidean distance, which is inappropriate for compositional data. This distorts the geometry of the sample space.

- Solution: Perform a log-ratio PCA. This involves CLR-transforming the data and then conducting a PCA on the covariance matrix of the CLR-transformed data (equivalent to using Aitchison distances).

- Protocol:

- Apply a CLR transformation to the dataset.

- Compute the covariance matrix of the CLR-transformed data.

- Perform eigenvalue decomposition on this covariance matrix.

- Plot samples and components in the principal component space.
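The equivalence with Aitchison distances mentioned in the solution can be made concrete: the Aitchison distance between two samples is the ordinary Euclidean distance between their CLR vectors. The sketch below uses hypothetical values and helper names chosen for illustration.

```python
import math

def clr(sample):
    """Centered log-ratio of one strictly positive sample."""
    logs = [math.log(v) for v in sample]
    mean_log = sum(logs) / len(logs)
    return [l - mean_log for l in logs]

def aitchison_distance(x, y):
    """Euclidean distance between the CLR coordinates of two compositions."""
    return math.dist(clr(x), clr(y))

# Illustrative compositions (hypothetical data).
x = [0.2, 0.3, 0.5]
y = [0.6, 0.1, 0.3]
d_xy = aitchison_distance(x, y)

# Rescaling either sample (e.g., percentages vs. counts) leaves the distance unchanged.
d_scaled = aitchison_distance([10 * v for v in x], [3 * v for v in y])
print(d_xy, d_scaled)
```

This scale invariance is what raw Euclidean distance lacks, and it is why PCA on CLR coordinates reflects compositional differences rather than differences in totals.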

Frequently Asked Questions (FAQs)

Q1: What is the fundamental principle of Aitchison's geometry that I must remember? A1: The fundamental principle is that the only relevant information in compositional data is contained in the ratios between components. The absolute magnitudes or the closure of the data are not informative for analysis. Therefore, all valid statistical methods must be scale-invariant (unchanged if the composition is multiplied by a constant) and sub-compositionally coherent (analysis of a subset of parts is consistent with the analysis of the full composition).

Q2: I have to choose a log-ratio transform. What are the core differences between CLR, ILR, and ALR? A2: The choice depends on your interpretational goal and downstream analysis. See the comparison table below.

Q3: How do I handle zeros in my composition before applying a log-ratio transformation? A3: True zeros (e.g., a mineral not present in a rock) are problematic as the log of zero is undefined. Common strategies include:

- Multiplicative Replacement: Replace zeros with a small positive value, redistributing the replaced mass proportionally from the non-zero components. Use dedicated tools (e.g., `zCompositions` R package).
- Using ILR: Specific ILR balances can be designed to separate components with zeros from those without.
- Sensitivity Analysis: Any analysis should include a check for sensitivity to the chosen replacement value.

Q4: After performing correlation analysis on ILR-transformed coordinates, how do I interpret the results in terms of my original components? A4: ILR coordinates (balances) represent specific, orthogonal contrasts between groups of parts. A correlation involving an ILR coordinate should be interpreted as a correlation with the log-ratio of the geometric means of the two groups of parts defined in that balance. You are not correlating single parts, but their aggregated relative behavior.

Table 1: Comparison of Log-Ratio Transformations for Correlation Analysis

| Feature | Centered Log-Ratio (CLR) | Isometric Log-Ratio (ILR) | Additive Log-Ratio (ALR) |
|---|---|---|---|
| Reference | Geometric mean of all parts. | A sequential binary partition (balance). | A single, chosen denominator part. |
| Coordinates | D-dimensional (leads to singular covariance). | (D-1)-dimensional, orthogonal. | (D-1)-dimensional, not orthogonal. |
| Covariance Use | Singular matrix; use for PCA/clustering. | Full-rank matrix; safe for all multivariate stats. | Full-rank but non-isometric; can distort distances. |
| Interpretability | Moderate (deviation from average composition). | High for individual balances, lower for system view. | Very direct for ratios against a fixed part. |
| Best For | PCA, distance-based methods (Aitchison distance). | Regression, hypothesis testing, correlation analysis. | Focused analysis on one key reference component. |
| Key Limitation | Covariance matrix is singular (not invertible). | Requires careful construction of the balance tree. | Results depend on choice of denominator; geometry is not isometric. |
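To make the ALR column concrete, the sketch below computes additive log-ratio coordinates against a chosen denominator part. The function name `alr` and its `ref` argument are assumptions for this illustration; CLR and ILR sketches would follow the same pattern with a different reference.

```python
import math

def alr(sample, ref=-1):
    """Additive log-ratio: log of each part over the chosen reference part.
    Returns D-1 coordinates (the reference itself is dropped)."""
    ref_idx = ref % len(sample)
    log_ref = math.log(sample[ref_idx])
    return [math.log(v) - log_ref for i, v in enumerate(sample) if i != ref_idx]

# Illustrative composition (hypothetical data).
x = [0.2, 0.3, 0.5]
alr_last = alr(x)           # reference = last part
alr_first = alr(x, ref=0)   # reference = first part
print(alr_last, alr_first)
```

The two outputs differ, which illustrates the limitation in the table: ALR results depend on the choice of denominator, so the reference should be chosen for a substantive reason and reported.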

Table 2: Example of Multiplicative Zero Replacement Impact (Simulated Data)

| Component | Sample A (Original) | Sample A (After 0.001 Replacement) | Log-Change |
|---|---|---|---|
| Part 1 | 0.500 | 0.4995 | -0.001 |
| Part 2 | 0.500 | 0.4995 | -0.001 |
| Part 3 | 0.000 | 0.0010 | +∞ |
| Total | 1.000 | 1.0000 | |

Note: Demonstrates the minimal perturbation to non-zero parts and the critical importance of documenting the procedure.
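The values in Table 2 can be reproduced with a simple fixed-value multiplicative replacement. This sketch is not the Bayesian-multiplicative method implemented by `cmultRepl`; the function name and the `delta` argument are assumptions for illustration.

```python
def simple_multiplicative_replacement(sample, delta=0.001):
    """Replace each zero with `delta` and shrink the non-zero parts so the
    sample keeps its original total."""
    total = sum(sample)
    n_zeros = sum(1 for v in sample if v == 0)
    shrink = 1 - n_zeros * delta / total
    return [delta if v == 0 else v * shrink for v in sample]

sample_a = [0.5, 0.5, 0.0]
replaced = simple_multiplicative_replacement(sample_a, delta=0.001)
print(replaced)
```

Because the non-zero parts are all multiplied by the same factor, their ratios to one another are preserved exactly; only ratios involving the replaced part depend on the chosen `delta`, which is why a sensitivity check on that value is recommended.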

Experimental Protocols

Protocol: Conducting Robust Correlation Analysis on Compositional Data (ILR-Based)

Objective: To identify significant correlations between microbial taxa (genus-level) and a continuous environmental variable (e.g., pH) while controlling for compositional bias.

Data Preparation:

- Input: A count table (OTU/ASV table) aggregated at the genus level.

- Normalization: Convert raw counts to compositions (relative abundances) by dividing each row by its row total (library size).

- Zero Handling: Apply multiplicative replacement (`cmultRepl` function from R's `zCompositions` package) with a detection limit of 0.001.

ILR Transformation:

- Construct a sequential binary partition (SBP) for your genera. For simplicity, start with a `philr::philr()` default balance tree (based on phylogenetic structure) or a principal balances tree.
- Apply the ILR transform using the `philr::philr()` or `compositions::ilr()` function with your SBP, creating an (n x (D-1)) matrix of balances.

Correlation Analysis:

- For each ILR coordinate (balance) `j`, perform a linear regression: `lm(ILR_coordinate[, j] ~ pH)`.
- Correct p-values for multiple testing using the False Discovery Rate (FDR, e.g., Benjamini-Hochberg procedure).
- Identify balances significantly associated (FDR < 0.05) with pH.

Interpretation:

- For each significant balance, inspect its SBP definition. Example: A balance +1 for Genera (A, B, C) vs -1 for Genera (D, E).

- A positive correlation with pH means the log-ratio gm(A,B,C)/gm(D,E) increases with pH.

- Report findings as relative changes between groups of taxa, not individual taxa.
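To make the interpretation step concrete, the following sketch computes a single balance for the example partition (A, B, C) vs (D, E) and regresses it on pH with ordinary least squares. It is a simplified stand-in for the R workflow above: the sample values, the pH vector, and the helper names (geometric_mean, balance, ols_slope) are all invented for illustration, and the balance uses the usual ILR normalizing factor sqrt(r*s/(r+s)) for groups of size r and s.

```python
import math

def geometric_mean(values):
    return math.exp(sum(math.log(v) for v in values) / len(values))

def balance(sample, numerator, denominator):
    """ILR balance between two groups of parts in one sample (dict: part -> abundance)."""
    r, s = len(numerator), len(denominator)
    scale = math.sqrt(r * s / (r + s))
    gm_num = geometric_mean([sample[p] for p in numerator])
    gm_den = geometric_mean([sample[p] for p in denominator])
    return scale * math.log(gm_num / gm_den)

def ols_slope(x, y):
    """Slope of the least-squares line y ~ x."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx

# Hypothetical relative abundances in which A, B, C gain on D, E as pH rises.
samples = [
    {"A": 0.10, "B": 0.10, "C": 0.10, "D": 0.350, "E": 0.350},
    {"A": 0.15, "B": 0.15, "C": 0.15, "D": 0.275, "E": 0.275},
    {"A": 0.20, "B": 0.20, "C": 0.20, "D": 0.200, "E": 0.200},
    {"A": 0.25, "B": 0.25, "C": 0.25, "D": 0.125, "E": 0.125},
]
ph = [5.0, 6.0, 7.0, 8.0]

balances = [balance(s, ["A", "B", "C"], ["D", "E"]) for s in samples]
slope = ols_slope(ph, balances)
print(balances, slope)
```

A positive slope here says only that the group (A, B, C) increases relative to (D, E) with pH; it does not say which individual genus is responsible.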

Visualizations

Title: Core Workflow for Compositional Data Analysis

Title: Choosing a Log-Ratio Transformation Path

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Compositional Data Analysis |

|---|---|

| R compositions Package | Core library for CLR, ILR, ALR transformations, and simplex-based geometry operations. |

| R robCompositions Package | Provides robust methods for imputation (impKNNa), outlier detection, and regression with compositions. |

| R zCompositions Package | Specialized toolkit for treating zeros (multiplicative replacement of count zeros, cmultRepl). |

| R philr Package | Implements Phylogenetic ILR, constructing balances based on a phylogenetic tree for microbiome data. |

| CoDaPack Software | User-friendly, standalone software for applying log-ratio methods without programming. |

| Primer on Aitchison's Geometry | Conceptual understanding is the most critical "tool" for correct experimental design and interpretation. |

Technical Support Center

Troubleshooting Guides

Issue 1: High correlation values from compositional data despite no biological relationship.

- Symptoms: Strong Pearson/Spearman correlations are observed between two features (e.g., gene A, gene B) in sequencing datasets (RNA-seq, 16S). However, when the analysis is repeated with a spike-in control or a reference feature, the correlation disappears or reverses.

- Diagnosis: This is a classic sign of compositional bias (or "spurious correlation"). The correlation is driven by changes in the total sample sum (library size) affecting both features, not by a true biological link.

- Solution:

- Apply a proportionality metric. Calculate phi (φ), rho (ρ), or corR between Gene A and Gene B. These metrics are scale-invariant.

- Re-evaluate. A strong correlation on relative abundances that is not matched by strong proportionality suggests the apparent co-dependence is likely compositional. Agreement between strong proportionality and a strong correlation on clr-transformed data provides stronger evidence for a true biological relationship.

- Protocol: See "Proportionality Calculation Protocol" below.

Issue 2: Difficulty interpreting negative phi (φ) values.

- Symptoms: Users obtain negative φ values and are uncertain if they indicate negative association or lack of association.

- Diagnosis: φ ranges from 0 (perfect proportionality) to infinity (no proportionality). Negative values are not mathematically defined for the standard φ formula.

- Solution: Confirm the calculation. Because φ is the variance of a log-ratio, the result must be ≥ 0. If a negative value appears, check for:

- Data Zeros: Use a sensible replacement (e.g., Bayesian multiplicative replacement, not simple zero imputation) before taking logarithms.

- Software Implementation: Ensure you are using the correct formula: φ(x,y) = var(log(x/y)) or its equivalent var(log(x) - log(y)).
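As a quick check of the formula, the sketch below implements the simplified definition φ(x,y) = var(log(x/y)) quoted above and shows that it is zero for perfectly proportional features, positive otherwise, and unchanged when one feature is rescaled. The function name phi and the example vectors are illustrative only; any zero in the input makes the logarithm undefined, which is why zero replacement must come first.

```python
import math
from statistics import variance

def phi(x, y):
    """Simplified phi: sample variance of the pairwise log-ratio across samples."""
    log_ratio = [math.log(a / b) for a, b in zip(x, y)]
    return variance(log_ratio)

x = [10.0, 20.0, 40.0, 80.0]
y_proportional = [5.0, 10.0, 20.0, 40.0]   # always x / 2
y_unrelated = [5.0, 40.0, 10.0, 80.0]

phi_proportional = phi(x, y_proportional)
phi_unrelated = phi(x, y_unrelated)
# Rescaling one feature by a constant shifts the log-ratio but not its variance.
phi_rescaled = phi([3 * v for v in x], y_unrelated)
print(phi_proportional, phi_unrelated, phi_rescaled)
```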

Issue 3: Choosing between phi (φ), rho (ρ), and corR.

- Symptoms: User is unsure which proportionality metric to use for their specific dataset (e.g., metabolomics, microbiome).

- Diagnosis: Each metric has slightly different sensitivity and variance properties.

- Solution: Refer to the table below for guidance. For general use with moderate-to-high abundance features, rho is often recommended. For datasets with many low-abundance features, test the robustness of corR.

Frequently Asked Questions (FAQs)

Q1: Can I use proportionality on any type of data, or is it only for sequencing data? A1: Proportionality is designed for any relative or compositional data. This includes microbiome abundances, metabolomics concentrations, geochemical samples, and any dataset where the measured values are parts of a whole. It is not suitable for data with a meaningful absolute scale (e.g., height, blood pressure in standard units).

Q2: Do I need to transform my data before calculating proportionality measures?

A2: Yes, all standard proportionality metrics operate on log-ratio transformed data. The most common pre-processing step is the Centered Log-Ratio (CLR) transformation: clr(x) = log(x / g(x)), where g(x) is the geometric mean of all features in a sample. This transformation maps the data from the simplex to real space.
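The CLR formula can be sketched in a few lines. The example below is a minimal, dependency-free illustration (the function name clr and the sample values are assumptions for this example, not a package API); it also shows that raw counts and their proportions give the same CLR coordinates, since the geometric mean absorbs any per-sample scaling.

```python
import math

def clr(sample):
    """Centered log-ratio: log of each part minus the mean log (log geometric mean)."""
    logs = [math.log(v) for v in sample]
    mean_log = sum(logs) / len(logs)
    return [value - mean_log for value in logs]

proportions = [0.2, 0.3, 0.5]
counts = [20, 30, 50]   # same composition on a different scale

clr_proportions = clr(proportions)
clr_counts = clr(counts)
print(clr_proportions)
```

CLR coordinates always sum to zero within a sample, which is why the resulting covariance matrix is singular.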

Q3: How do I statistically test if a proportionality value is significant? A3: Unlike correlation, there is no universal parametric test for proportionality. Significance is typically assessed using a permutation test: 1. Randomly permute the samples for one of the features (or both) many times (e.g., 1000-10,000 permutations). 2. Recalculate the proportionality metric each time to generate a null distribution. 3. Compare your observed metric to this null distribution to calculate an empirical p-value.

Q4: Are there R/Python packages available for calculating these metrics?

A4: Yes.

* R: The propr package is dedicated to calculating ρ and φ. The compositions package provides CLR transformations.

* Python: The scikit-bio library and PyCoDa package offer proportionality and compositional data analysis tools.

Data Presentation

Table 1: Comparison of Correlation and Proportionality Metrics for Compositional Data

| Metric | Range | Robust to Compositionality? | Key Interpretation | Formula (Simplified) |

|---|---|---|---|---|

| Pearson's r | [-1, 1] | No | Linear association on absolute scale. | Cov(x,y)/(σₓσᵧ) |

| Spearman's ρ | [-1, 1] | No | Monotonic rank association. | Pearson r on ranks. |

| phi (φ) | [0, ∞) | Yes | Variance of pairwise log-ratio. Lower values = more proportional. | var(log(x / y)) |

| rho (ρ_p) | [-1, 1] | Yes | Symmetric, based on variance of log-ratio. | 1 - (φ(x,y) / (var(clr(x)) + var(clr(y))) ) |

| corR | [-1, 1] | Yes | Correlation of CLR-transformed components. | cor(clr(x), clr(y)) |

Note: *ρ_p approximates the correlation of CLR-transformed data but is more robust for pairs involving low-abundance components.*
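The ρ_p formula in Table 1 can be illustrated on a toy table. The sketch below assumes a samples-by-features layout with no zeros and uses hypothetical helper names (clr_rows, rho_p); it is not the propr implementation. Feature 1 is constructed as exactly twice feature 0, so the pair is perfectly proportional.

```python
import math
from statistics import variance

def clr_rows(table):
    """Apply the CLR transform to every sample (row) of a samples x features table."""
    transformed = []
    for row in table:
        logs = [math.log(v) for v in row]
        mean_log = sum(logs) / len(logs)
        transformed.append([value - mean_log for value in logs])
    return transformed

def rho_p(table, i, j):
    """rho_p = 1 - var(clr_i - clr_j) / (var(clr_i) + var(clr_j))."""
    transformed = clr_rows(table)
    ci = [row[i] for row in transformed]
    cj = [row[j] for row in transformed]
    diff = [a - b for a, b in zip(ci, cj)]
    return 1 - variance(diff) / (variance(ci) + variance(cj))

# Hypothetical abundances: feature 1 is always 2 x feature 0.
table = [
    [10, 20, 70],
    [20, 40, 40],
    [30, 60, 10],
    [5, 10, 85],
]
rho_01 = rho_p(table, 0, 1)
rho_02 = rho_p(table, 0, 2)
print(rho_01, rho_02)
```

The proportional pair gives ρ_p close to 1, while the pair involving the third feature comes out negative because in a three-part table that feature must move against the other two.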

Experimental Protocols

Protocol: Proportionality Calculation Protocol (R Example)

Purpose: To detect robust, compositionally-bias-free associations between features (e.g., genes, taxa).

Reagents & Materials: See "Research Reagent Solutions" table.

Software: R (≥4.0.0), propr package, compositions package.

Procedure:

Data Loading & Zero Handling:

CLR Transformation:

Calculate Proportionality Matrix:

Visualization & Validation:

- Plot a proportionality network or heatmap.

- Validate top pairs using domain knowledge or via permutation testing.

Protocol: Permutation Test for Proportionality Significance

Purpose: To generate empirical p-values for a proportionality measure.

Procedure:

- Calculate the observed proportionality metric (ρ_obs) for the feature pair of interest.

- For i in 1:N (e.g., N=10000):

a. Randomly shuffle the sample order of one feature's vector.

b. Recalculate the proportionality metric (ρ_perm[i]) using the shuffled data.

- Calculate the empirical p-value: p = (1 + number of permutations with |ρ_perm[i]| >= |ρ_obs|) / (N + 1)

- Apply multiple testing correction (e.g., Benjamini-Hochberg) across all tested pairs.
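The permutation procedure above can be sketched as follows. This is an illustrative implementation under stated assumptions: the inputs are taken to be CLR-transformed vectors for two features, the data are invented, and the function names (rho_p, permutation_p_value, benjamini_hochberg) are hypothetical. A fixed seed is used only so the example is repeatable.

```python
import random
from statistics import variance

def rho_p(clr_x, clr_y):
    """Proportionality rho from two CLR-transformed feature vectors."""
    diff = [a - b for a, b in zip(clr_x, clr_y)]
    return 1 - variance(diff) / (variance(clr_x) + variance(clr_y))

def permutation_p_value(clr_x, clr_y, n_perm=1000, seed=0):
    """Empirical two-sided p-value with the +1 correction in numerator and denominator."""
    rng = random.Random(seed)
    observed = rho_p(clr_x, clr_y)
    shuffled = list(clr_y)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)  # break the sample pairing for one feature
        if abs(rho_p(clr_x, shuffled)) >= abs(observed):
            hits += 1
    return observed, (hits + 1) / (n_perm + 1)

def benjamini_hochberg(p_values):
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda k: p_values[k])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted

# Hypothetical CLR values for two features across eight samples.
clr_a = [-1.2, -0.8, -0.3, 0.1, 0.4, 0.9, 1.3, 1.8]
clr_b = [-1.0, -0.9, -0.2, 0.0, 0.5, 0.8, 1.4, 1.7]
observed, p_value = permutation_p_value(clr_a, clr_b)
adjusted = benjamini_hochberg([0.01, 0.04, 0.03])
print(observed, p_value, adjusted)
```

With N permutations the smallest attainable p-value is 1/(N + 1), so N should be chosen large enough that values can still fall below the significance threshold after multiple-testing correction.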

Mandatory Visualization

Title: Decision Flow: Correlation vs. Proportionality for Compositional Data

Title: Experimental Workflow for Proportionality Analysis

The Scientist's Toolkit

Table 2: Research Reagent & Computational Solutions for Proportionality Analysis

| Item | Function in Analysis | Example/Note |

|---|---|---|

| Zero-Replacement Tool | Handles zeros in compositional data prior to log-ratio transforms. | R: zCompositions::cmultRepl() (Bayesian multiplicative). Python: scikit-bio zero replacement methods. |

| CLR Transformation Library | Performs the Centered Log-Ratio transformation, a prerequisite for proportionality. | R: compositions::clr(). Python: skbio.stats.composition.clr(). |

| Proportionality Calculator | Efficiently computes φ, ρ, or corR matrices for large datasets. | R: propr::propr(). Python: Custom function or PyCoDa. |

| Permutation Test Script | Generates null distributions and empirical p-values for proportionality metrics. | Custom script in R/Python (see protocol above). |

| Network Visualization Suite | Visualizes significant proportional pairs as an interpretable network. | R: igraph or cytoscape. Python: networkx, Cytoscape via py4cytoscape. |

| Benchmark Dataset | Validates the analysis pipeline using data with known associations. | Synthetic compositional data with planted proportional pairs. Public miRNA/mRNA paired datasets. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: After running DESeq2 on my ASV table, I get an error: "every gene contains at least one zero, cannot compute log geometric means." How do I fix this? A: This is common with sparse microbiome data. Use a custom geometric mean function that handles zeros.
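One commonly used workaround is a geometric mean that takes logarithms of the positive counts only while still dividing by the full number of samples. The sketch below illustrates that idea in Python; the function name geometric_mean_positive is hypothetical, and in an actual DESeq2 session the equivalent per-feature values would be computed in R and supplied to the size-factor estimation step.

```python
import math

def geometric_mean_positive(counts):
    """Geometric-mean variant: sum logs over positive counts, divide by the full length."""
    positive_logs = [math.log(c) for c in counts if c > 0]
    return math.exp(sum(positive_logs) / len(counts))

with_zero = geometric_mean_positive([4, 0, 16])
without_zero = geometric_mean_positive([2, 8])
print(with_zero, without_zero)
```

When a feature has no zeros this reduces to the ordinary geometric mean, so the workaround changes results only for features that would otherwise be undefined.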

Q2: My SparCC correlation network shows spurious strong correlations between low-abundance taxa. Is this expected? A: Yes. SparCC, while designed for compositionality, can be unstable with rare taxa. Apply a prevalence (e.g., >10% samples) and abundance (e.g., >0.01% mean relative abundance) filter before analysis.

Q3: When I convert my count data to CLR (Centered Log-Ratio) for CCA, I get infinite values. What's wrong?

A: CLR transformation requires all values to be >0. You must replace zeros first. Use a multiplicative replacement strategy (e.g., from the zCompositions R package or scikit-bio in Python) rather than a simple pseudocount.

Q4: I am getting drastically different results between Pearson correlation on CLR data and Spearman correlation on raw counts. Which should I trust for my thesis on compositional bias?

A: Neither alone is fully trustworthy. CLR with Pearson is a better starting point for addressing compositionality, but confirm key findings with a method explicitly designed for compositional correlation like SparCC or a proportionality measure (e.g., propr R package). Validate with context-independent data if available.

Q5: My PCoA plot shows a strong "horseshoe" effect. Does this invalidate my beta-diversity analysis? A: The horseshoe effect is an artifact of nonlinear ecological gradients in Euclidean space. It does not invalidate the analysis but suggests you should use a distance metric more robust to this (e.g., Bray-Curtis, UniFrac) and an ordination method like NMDS for visualization.

Table 1: Comparison of Correlation Methods for Compositional Data

| Method | Language/Package | Handles Compositionality? | Key Assumption | Suitable for Niche Analysis? |

|---|---|---|---|---|

| Pearson (on CLR) | R/base, Python/scipy | Partial (via CLR) | Multivariate normal | Moderate |

| SparCC | Python/SparCC, R/SpiecEasi | Yes | Correlation network is sparse (few strong correlations) | Yes |

| Proportionality (ρp) | R/propr | Yes | Log-ratio variance | Yes |

| MIC (Max. Info. Coeff.) | R/minerva, Python/minepy | No (non-parametric) | General dependence | Low (computational) |

| Spearman | R/base, Python/scipy | No | Monotonic relationship | No |

Table 2: Recommended Pre-processing Filters for 16S Data

| Filter Type | Typical Threshold | Purpose | R Code Snippet |

|---|---|---|---|

| Prevalence | Keep taxa in >10-20% of samples | Reduce sparsity & noise | phyloseq::filter_taxa(physeq, function(x) sum(x > 0) > (0.1 * length(x)), TRUE) |

| Abundance | Mean Relative Abundance >0.01% | Remove very low-abundance noise | phyloseq::filter_taxa(physeq_rel, function(x) mean(x) > 1e-4, TRUE), where physeq_rel holds relative abundances |

| Library Size | >1,000 reads per sample | Ensure adequate sampling | phyloseq::prune_samples(sample_sums(physeq) > 1000, physeq) |
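For readers working outside phyloseq, the three filters in Table 2 can be sketched on a plain samples-by-taxa count table. The function name filter_table, its parameter names, and the toy counts are assumptions made for this illustration; the thresholds mirror the table.

```python
def filter_table(counts, min_reads=1000, min_prevalence=0.10, min_mean_rel_abundance=1e-4):
    """Apply library-size, prevalence, and mean relative abundance filters.

    counts: list of samples, each a list of taxon counts in the same order.
    Returns the filtered table and the indices of the retained taxa.
    """
    # Library size filter: drop shallow samples first.
    kept_samples = [row for row in counts if sum(row) > min_reads]
    if not kept_samples:
        return [], []
    n = len(kept_samples)
    relative = [[c / sum(row) for c in row] for row in kept_samples]
    kept_taxa = []
    for j in range(len(kept_samples[0])):
        prevalence = sum(1 for row in kept_samples if row[j] > 0) / n
        mean_rel = sum(row[j] for row in relative) / n
        if prevalence > min_prevalence and mean_rel > min_mean_rel_abundance:
            kept_taxa.append(j)
    return [[row[j] for j in kept_taxa] for row in kept_samples], kept_taxa

# Hypothetical counts: 4 samples x 4 taxa.
counts = [
    [900, 100, 0, 0],     # 1,000 reads: not above the threshold, dropped
    [1500, 400, 100, 0],
    [50, 20, 5, 3],       # shallow sample, dropped
    [2000, 300, 0, 0],
]
filtered, kept_taxa = filter_table(counts)
print(filtered, kept_taxa)
```

Filtering samples before taxa matters: prevalence and mean abundance are computed only over the samples that survive the library-size cut.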

Experimental Protocols

Protocol 1: Building a Correlation Network with Compositionally-Aware Methods

- Input: Filtered ASV/OTU count table (QIIME2 output, BIOM file, or simple TSV).

- Pre-processing: Apply prevalence and abundance filters (Table 2). Optional: Subset to top N variable taxa.

- Zero Handling: Perform multiplicative replacement using the CZM (Bayesian-multiplicative replacement) method.

- Correlation Calculation: Apply the SparCC algorithm (100 bootstraps recommended).

- Network Inference: Generate a correlation matrix, apply a significance threshold (p < 0.01), and a correlation strength threshold (e.g., |r| > 0.3).

- Visualization & Analysis: Export the adjacency matrix to Cytoscape or use igraph in R/Python for network properties (modularity, degree).

Protocol 2: Differential Abundance Analysis Within a Thesis on Compositional Bias

- Rationale: Standard tests (t-test, Wilcoxon) on relative abundances are invalid. Use a compositionally-aware model.

- Method: ANCOM-BC2 (in R ANCOMBC package) or ALDEx2 with careful interpretation.

- Procedure:

a. Load raw count table and metadata into a phyloseq object (R).

b. Run ANCOM-BC2, specifying the fixed effect (e.g., Disease vs Healthy).

c. Apply the false discovery rate (FDR) correction (Benjamini-Hochberg).

d. Extract taxa with q_val < 0.05 and log2FC > 1 or < -1.

e. Validate key hits by cross-checking with results from a second method like DESeq2 (with a proper size factor for microbiome data) or a zero-inflated negative binomial model (e.g., glmmTMB).

Visualizations

Microbiome Analysis with Compositional Bias Focus

Impact of Compositional Bias on Correlation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Compositional Microbiome Analysis

| Item | Function | Example (R/Python) |

|---|---|---|

| Compositional Data Library | Core math for log-ratio analysis | compositions (R), scikit-bio (Python) |

| Zero Replacement Tool | Handles zeros before log-transform | zCompositions::cmultRepl() (R) |

| Compositional Correlation | Calculates correlations for comp. data | SpiecEasi::sparcc() (R), SparCC.py (Python) |

| Differential Abundance | Statistically tests for diff. abundance | ANCOMBC::ancombc2() (R), songbird (Python) |

| Network Visualization | Visualizes inferred correlation networks | igraph (R/Python), Cytoscape (GUI) |

| Workflow Framework | Integrates analysis steps reproducibly | phyloseq (R), QIIME2 (CLI), snakemake (Python) |

Troubleshooting Guides & FAQs

Q1: After applying a zero-replacement method (e.g., CZM), my correlation matrix between compositional parts is no longer positive definite. What went wrong? A: This is common when the replacement value is too large relative to the non-zero data, distorting the covariance structure. Verify the imputed value (often a fixed fraction of the detection limit, such as 2/3). Use a Bayesian-multiplicative replacement, which preserves the covariance structure better than simple additive replacement. Ensure the replacement is applied to the entire dataset in one pass, not column-by-column.

Q2: My high-dimensional compositional dataset (e.g., microbiome OTUs) is extremely sparse (>90% zeros). Which correlation metric should I use? A: Standard Pearson or Spearman correlation on raw or transformed data will be heavily biased. Proceed as follows:

- Pre-processing: Use a Bayesian-multiplicative zero-handling method designed for sparsity.

- Choice of Metric: Employ proportionality metrics (e.g., ρp as implemented in propr), a compositional correlation estimator such as SparCC, or robust compositional correlations like the variation-based log-ratio correlation. These are more appropriate for sparse compositional data.

- Validation: Follow the protocol below for validating correlation structures in sparse settings.

Q3: When I fit a Bayesian Compositional Regression (BCR) model, the MCMC chains do not converge. How can I diagnose and fix this? A: Non-convergence often stems from poorly specified priors or highly collinear predictors in the composition.

- Diagnose: Check trace plots and Gelman-Rubin statistics (R-hat > 1.05 indicates issues).

- Solution A: Use hierarchical, regularizing priors (e.g., horseshoe prior) on the regression coefficients to handle multicollinearity.

- Solution B: Re-parameterize the model using an isometric log-ratio (ILR) transformation to create orthogonal predictors.

- Solution C: Increase the number of warm-up/adaptation iterations and thin the chains.

Q4: My centered log-ratio (CLR) transformation fails due to zeros in every sample. What are my options? A: The CLR requires no zeros in any component across the dataset. Your options are:

- Aggregate: Aggregate low-abundance components into a single "Other" category.

- Impute: Use a model-based imputation (e.g., zCompositions R package's cmultRepl or lrEM) before CLR.

- Alternative Transform: Use an ILR transformation on a basis that ignores or amalgamates the problematic components.

Q5: How do I validate that my chosen correlation method is not producing spurious results due to compositionality? A: Implement a simulation-based validation protocol (see Experimental Protocol 1 below).

Experimental Protocols

Protocol 1: Validating Correlation Methods on Sparse Compositional Data

Objective: To assess the false positive rate and accuracy of a correlation metric under known, sparse compositional data structures.

- Simulate Ground Truth: Using the compositions or robCompositions R package, simulate a baseline composition X with a known correlation structure Σ between a subset of parts.

- Induce Sparsity: Randomly replace a defined percentage (e.g., 80%, 90%) of values in X with zeros, mimicking different zero-generation mechanisms (e.g., missing not at random).

- Apply Methods: Process the sparse dataset X_zero with:

- Method A: Simple zero-replacement followed by Pearson correlation on CLR data.

- Method B: Bayesian-multiplicative replacement followed by proportionality.

- Method C: Direct use of a SparCC-type algorithm.

- Recover Correlation: Calculate the correlation matrix for each method.

- Evaluate: Compare recovered matrices to Σ using mean squared error (MSE) and compute the false discovery rate (FDR) for non-zero correlations.
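The core idea of this protocol, comparing what a method recovers against a known ground truth, can be shown with a deliberately simplified simulation. The sketch below omits the sparsity step and the three methods; it only generates independent absolute abundances (so the true correlation between the first two features is zero), closes them to proportions, and compares Pearson correlation before and after closure. All names, distributions, and parameters are assumptions chosen for the illustration.

```python
import math
import random

def pearson(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

rng = random.Random(42)
n_samples = 200

# Ground truth: three independent features; the third is abundant and highly variable.
x1 = [rng.uniform(9, 11) for _ in range(n_samples)]
x2 = [rng.uniform(9, 11) for _ in range(n_samples)]
x3 = [rng.uniform(10, 1000) for _ in range(n_samples)]

# Closure: convert each sample to proportions of its total.
totals = [a + b + c for a, b, c in zip(x1, x2, x3)]
p1 = [a / t for a, t in zip(x1, totals)]
p2 = [b / t for b, t in zip(x2, totals)]

r_absolute = pearson(x1, x2)   # close to zero by construction
r_relative = pearson(p1, p2)   # inflated by the shared denominator
print(r_absolute, r_relative)
```

The two features are independent on the absolute scale, yet their proportions are strongly correlated because both are divided by the same fluctuating total. The ratio p1/p2 equals x1/x2 in every sample, which is why log-ratio methods are unaffected by this artifact.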

Protocol 2: Fitting a Bayesian Compositional Regression with Regularizing Priors

Objective: To model a continuous outcome Y as a function of high-dimensional, sparse compositional predictors X, while preventing overfitting.

- Pre-process Data: Apply a Bayesian-multiplicative zero replacement (e.g., bCoda R package).

- Transform Data: Perform an ILR transformation on the imputed data to obtain orthogonal coordinates Z.

- Specify Model: In a probabilistic programming language (e.g., Stan, PyMC3), specify: Y ~ Normal(α + Z * β, σ) where β is assigned a regularizing prior (e.g., β ~ horseshoe(df, scale)).

- Sample: Run Hamiltonian Monte Carlo (HMC) sampling with 4 chains, 2000 warm-up iterations, and 2000 post-warm-up iterations per chain.

- Diagnose: Check R-hat statistics, effective sample size (ESS), and trace plots.

- Interpret: Transform the posterior distribution of β back to the CLR space for interpretation of original components.

Data Presentation

Table 1: Comparison of Zero-Handling Methods for Compositional Correlation Analysis

| Method | Principle | Handles MNAR? | Preserves Covariance? | Recommended Use Case |

|---|---|---|---|---|

| Simple Replacement | Replace zeros with fixed small value | No | No | Exploratory analysis, low zero percentage |

| Multiplicative Replacement (KM, EM) | Probabilistic imputation via Dirichlet | Partial | Better | General purpose, <50% zeros |

| Bayesian Multiplicative | Model zeros as count below a limit | Yes | Yes | High-throughput data, MNAR suspected |

| Coda-lasso | Uses penalized regression for imputation | Yes | Yes | Predictive modeling, variable selection |

Table 2: Simulation Results: FDR of Correlation Methods at 95% Sparsity

| Correlation Method | False Discovery Rate (Mean ± SD) | Mean Squared Error |

|---|---|---|

| Pearson on CLR (simple imp.) | 0.38 ± 0.12 | 0.45 |

| Spearman on RA (no imp.) | 0.41 ± 0.11 | 0.51 |

| SparCC | 0.09 ± 0.05 | 0.11 |

| Proportionality (ρp) | 0.11 ± 0.06 | 0.14 |

| BCR-derived correlation | 0.07 ± 0.04 | 0.09 |

The Scientist's Toolkit: Key Research Reagent Solutions

| Item/Reagent | Function in Compositional Analysis |

|---|---|

| R Package: compositions / robCompositions | Core suite for ILR/CLR transforms, Aitchison geometry, and robust covariance estimation. |

| R Package: zCompositions | Specialized library for handling zeros (cmultRepl, lrEM, lrDA methods) in compositional data. |

| R/Stan Package: brmcoda | Fits Bayesian regression models with compositional predictors, handling zeroes and providing interpretable outputs. |

| Python Library: skbio.stats.composition | Provides CLR, ILR transforms, and basic zero imputation for integration into Python ML pipelines. |

| Software: SpiecEasi | Infers microbial ecological networks from sparse compositional (OTU) data using graphical lasso. |

| Benchmark Dataset: GlobalPatterns (phyloseq) | A standard, publicly available sparse microbiome dataset for method testing and validation. |

Solving Real-World Challenges: Zeros, Sparsity, and Reference Selection

Troubleshooting Guides & FAQs

Q1: After applying a pseudo-count to my compositional microbiome dataset, my log-ratio correlations became excessively strong and likely spurious. What went wrong? A: This is a common symptom of using an arbitrary, non-compositional pseudo-count (e.g., adding 1 to all counts). This disproportionately impacts low-abundance features, distorting the covariance structure. Solution: Use a Bayesian-multiplicative replacement method like the Count Zero Multiplicative (CZM) algorithm, which preserves the relative structure of the non-zero data. The imputed value for a zero in component j is proportional to the chosen imputation parameter and the feature's prevalence in the sample.

Q2: When performing centered log-ratio (CLR) transformation for a differential abundance analysis, some zeros remain even after imputation, causing errors. How do I resolve this? A: This indicates the imputation threshold was set too low. The CLR requires all values to be positive. Solution: Re-impute with a higher imputation parameter (δ). A practical guideline is to set δ just below the detection limit for your sequencing run (e.g., 0.5 times the minimum observed non-zero count). Ensure the final imputed dataset contains no zeros before CLR transformation.

Q3: My chosen imputation method (e.g., k-nearest neighbors) works on the raw counts, but after log-ratio transformation, the data fails distributional tests for downstream methods like ANCOM-BC. A: Imputation should be performed with the compositional nature of the data in mind. Imputing on raw counts before normalization ignores the constant-sum constraint. Solution: Perform imputation on the compositions (i.e., on relative abundances or after a total sum scaling), not the absolute counts. This maintains the simplex space of the data.

Q4: Does the choice of log-ratio (CLR vs. ALR) affect the stability of results post-imputation? A: Yes. ALR (additive log-ratio, using a reference feature) is sensitive to imputation of the reference feature. If the reference feature contains imputed values, all ratios become unstable. CLR is generally more robust as it uses the geometric mean of all parts as the denominator. Recommendation: If using ALR, manually select a stable, high-abundance reference feature confirmed to have no zeros, or use a robust CLR approach.

Q5: How can I validate that my imputation strategy hasn't introduced significant bias in my correlation network analysis? A: Implement a sensitivity analysis. Create multiple imputed datasets using a range of justifiable imputation parameters (δ) or methods. Run your correlation analysis (e.g., SparCC, propr) on each. Validation Table:

| Imputation Method | Parameter (δ) | % of Zeros Treated | Mean Correlation Shift | Key Edge Stability |

|---|---|---|---|---|

| Simple Additive | 1 count | 100% | High (+0.25) | Low (40%) |

| Bayesian Multiplicative | 0.5 | 100% | Moderate (+0.12) | Medium (65%) |

| Bayesian Multiplicative | 0.01 | 90% | Low (+0.05) | High (85%) |

| k-NN on Compositions | k=5 | 95% | Variable | Medium (60%) |

Stable, biologically plausible correlations across a range of parameters increase confidence.

Experimental Protocols

Protocol 1: Evaluating Imputation Impact on Correlation Recovery

Objective: To assess how different zero-handling strategies affect the accuracy of reconstructed correlation networks from compositional data.

- Synthetic Data Generation: Simulate a ground-truth microbial count matrix with known covariance structure using the SPARSim or compositions R package. Introduce zeros via a missing-at-random or left-censoring (below detection) mechanism.

- Imputation Application: Apply 3-4 strategies to the zero-laden matrix:

- Simple pseudo-count (min observed non-zero / 2).

- Bayesian-multiplicative replacement (zCompositions::cmultRepl).

- Random Forest imputation (missForest on CLR-preprocessed data).

- No imputation (zero removal).

- Transformation & Analysis: Transform all datasets using CLR. Calculate all pairwise Pearson/Spearman correlations.

- Benchmarking: Compare the correlation matrices to the ground-truth matrix using Root Mean Square Error (RMSE) and precision/recall for recovering top-positive and top-negative correlations.

Protocol 2: Sensitivity Analysis for Pseudo-count Magnitude in Differential Abundance

Objective: To determine the robustness of log-ratio differential abundance findings to the choice of pseudo-count.

- Parameter Sweep: Define a sequence of pseudo-counts (δ) from 0.1 to 1.0 times the minimum positive count in your real experimental dataset.

- Iterative Processing: For each δ, add the pseudo-count to the raw counts, apply Total Sum Scaling (TSS) normalization, and perform a CLR transformation.

- Statistical Testing: Run a standard linear model (e.g., limma) on each CLR-transformed dataset to test for condition differences.

- Result Integration: Track the p-value and effect size for a set of candidate features across the δ spectrum. Features whose significance (p < 0.05) flips across the sweep are considered unstable and require cautious interpretation.
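The sweep in Protocol 2 can be sketched as follows. To keep the example dependency-free, the statistical test is replaced by a plain difference in group means of the CLR values, which stands in for the effect size a linear model would report; this substitution, the function names (clr, pseudo_count_sweep), and the toy counts are all assumptions of the illustration.

```python
import math

def clr(sample):
    logs = [math.log(v) for v in sample]
    mean_log = sum(logs) / len(logs)
    return [value - mean_log for value in logs]

def pseudo_count_sweep(counts, groups, feature, fractions):
    """Return (delta, effect) pairs; effect = mean CLR in group 1 minus group 0."""
    min_positive = min(c for row in counts for c in row if c > 0)
    results = []
    for fraction in fractions:
        delta = fraction * min_positive
        transformed = []
        for row in counts:
            shifted = [c + delta for c in row]                      # add pseudo-count
            total = sum(shifted)
            transformed.append(clr([v / total for v in shifted]))   # TSS, then CLR
        group0 = [row[feature] for row, g in zip(transformed, groups) if g == 0]
        group1 = [row[feature] for row, g in zip(transformed, groups) if g == 1]
        effect = sum(group1) / len(group1) - sum(group0) / len(group0)
        results.append((delta, effect))
    return results

# Hypothetical counts: feature 2 is absent in group 0 and present in group 1.
counts = [
    [50, 30, 0],
    [60, 20, 0],
    [40, 30, 10],
    [45, 35, 12],
]
groups = [0, 0, 1, 1]
sweep = pseudo_count_sweep(counts, groups, feature=2, fractions=[0.1, 0.5, 1.0])
effects = [effect for _, effect in sweep]
print(sweep)
```

In this toy table the estimated effect for the zero-affected feature shrinks steadily as δ grows, which is the kind of dependence on the pseudo-count that the protocol is designed to expose.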

Visualizations

Title: Decision Workflow for Zero Handling in Log-Ratio Analysis

Title: Imputation Method Impact on Correlation Structure

The Scientist's Toolkit: Key Research Reagent Solutions

| Item/Software | Function in Zero Problem Context |

|---|---|

| R Package: zCompositions | Provides Bayesian-multiplicative methods (CZM, GBZM, LR) specifically designed for imputing zeros in compositional count data. |

| R Package: robCompositions | Offers k-NN and model-based imputation (impKNNa) that respects the compositional geometry of the data. |

| R Package: CoDaSeq / microbiome | Contains utilities for CLR transformation and zero-aware exploratory data analysis. |

| R Package: propr / SpiecEasi | Implements (sparse) correlation measures (e.g., ρp, CCC) for compositional data that can be more robust to residual zero effects. |

| Python Library: scCODA | A Bayesian model for differential abundance testing that explicitly includes a zero-inflated component, reducing reliance on prior imputation. |

| Synthetic Data Tools (SPARSim, compcodeR) | Generate realistic simulated compositional datasets with known properties to benchmark imputation and analysis pipelines. |

| Sensitivity Analysis Script | A custom workflow (as per Protocol 2) to test result stability across a range of imputation parameters, essential for rigorous reporting. |

Troubleshooting Guides & FAQs

Q1: What are the primary symptoms of an unstable or dominant reference component in relative abundance data? A1: Key symptoms include:

- Spurious Correlations: Detection of strong positive or negative correlations between components that are biologically implausible.

- Subcompositional Incoherence: Correlation patterns change dramatically when a different subset of components (e.g., a different panel of biomarkers) is analyzed.

- Variance Distortion: One component exhibits artificially high or low variance, skewing the entire correlation structure.

- Non-Reproducibility: Results fail to replicate when the experiment is repeated or when a different normalization or reference is applied.

Q2: How can I test if my chosen reference is causing bias in my correlation analysis? A2: Perform a Reference Sensitivity Analysis using the following protocol:

- Log-ratio Transformation: For your dataset with D components, create a set of candidate reference components (e.g., a presumed stable housekeeping gene, a geometric mean of several components, or a total sum).

- Iterative Re-calculation: Recalculate all pairwise log-ratio correlations (e.g., using Aitchison's centered log-ratio or a specific pairwise log-ratio) using each candidate reference.

- Correlation Matrix Comparison: Quantify the divergence between the resulting correlation matrices using a metric like the Frobenius norm or Procrustes correlation.

- Stability Assessment: The reference that produces the most stable (least variable) correlation matrix across biologically similar sample groups is the least dominant. High variability indicates reference dominance or instability.

Q3: What are the best practices for selecting a reference in microbiome or metabolomics correlation studies? A3: Best practices are summarized below:

| Practice | Description | Rationale |

|---|---|---|

| Use a Multi-Component Reference | Employ the geometric mean of a carefully chosen set of stable components (e.g., housekeeping genes, ubiquitous metabolites). | Dilutes the influence of any single, potentially variable component, reducing dominance risk. |

| Avoid Rare or Abundant Components | Do not use components with very low prevalence (many zeros) or extremely high abundance. | Rare components introduce zeros in log-ratios; abundant components can dominate the ratio. |

| Conduct Sensitivity Analysis | (As detailed in FAQ #2 above). | Empirically demonstrates the robustness (or lack thereof) of your conclusions to reference choice. |

| Consider Compositional Methods | Use methods built for compositional data (e.g., SparCC, proportionality methods like rho/phi) that do not rely on a single reference. | Avoids the reference selection problem entirely by using a compositionally coherent approach. |

Key Experimental Protocol: Reference Sensitivity Analysis for Correlation Stability

Objective: To empirically determine the impact of reference component choice on inferred correlation networks in compositional data (e.g., 16S rRNA gene sequencing, LC-MS metabolomics).

Materials: A compositional count or abundance matrix (samples x features), pre-processed (low-count filtering, no normalization).

Procedure:

- Define Reference Set: List R candidate references. These could be:

- Single features hypothesized to be stable.

- The geometric mean of features (creating a synthetic reference).

- The total sum (simple total count normalization).

- CLR Transformation & Correlation:

- For each candidate reference r in R:

- Calculate the centered log-ratio (CLR) for each feature i in sample k: CLR_ik = log(x_ik / g(x_k)), where g(x_k) is the geometric mean of all features in sample k. Implementation Note: When evaluating a single reference feature r, use its value in sample k in place of g(x_k), i.e., log(x_ik / x_rk).

- Compute the Pearson correlation matrix C_r on the log-ratio-transformed data across all samples.

- Stability Metric Calculation:

- Calculate the pairwise dissimilarity between all correlation matrices C_r using the Frobenius norm of their difference: || C_a - C_b ||_F.

- The reference yielding the lowest average dissimilarity to all other C_r matrices is considered the most stable.

- Interpretation: A high average dissimilarity for a reference indicates that correlation results are highly dependent on that choice, signaling instability or dominance. Results using such a reference should be treated with caution.
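
The procedure above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not a reference implementation: the function names (`reference_log_ratios`, `sensitivity_analysis`), the synthetic zero-free data, and the choice to compare only features that are never used as a single-feature reference (a feature's log-ratio to itself is constant, so its correlations are undefined) are all choices made for this example.

```python
import numpy as np

def reference_log_ratios(X, ref):
    """Log-ratio of every feature to a per-sample reference value (length n_samples)."""
    return np.log(X) - np.log(ref)[:, None]

def sensitivity_analysis(X, single_refs):
    """X: strictly positive (samples x features) matrix.
    single_refs: column indices of candidate single-feature references (hypothetical input)."""
    refs = {f"feature_{j}": X[:, j] for j in single_refs}
    refs["geometric_mean"] = np.exp(np.log(X).mean(axis=1))  # this choice is the CLR
    refs["total_sum"] = X.sum(axis=1)
    # Assumption: compare matrices only on features never used as a reference.
    excluded = set(single_refs)
    keep = [j for j in range(X.shape[1]) if j not in excluded]
    corr = {name: np.corrcoef(reference_log_ratios(X, r)[:, keep], rowvar=False)
            for name, r in refs.items()}
    names = list(corr)
    # Pairwise Frobenius distances between the correlation matrices C_r.
    D = np.array([[np.linalg.norm(corr[a] - corr[b], ord="fro") for b in names]
                  for a in names])
    avg = D.sum(axis=1) / (len(names) - 1)  # mean distance to the other matrices
    return dict(zip(names, avg))

rng = np.random.default_rng(0)
X = rng.lognormal(mean=2.0, sigma=1.0, size=(60, 12))  # synthetic, zero-free data
scores = sensitivity_analysis(X, single_refs=[0, 1])
most_stable = min(scores, key=scores.get)
print(scores)
print("lowest average dissimilarity:", most_stable)
```

References with a high score in `scores` are the ones whose correlation structure disagrees most with the alternatives.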

Research Reagent Solutions Toolkit

| Item | Function in Context |

|---|---|

| Synthetic Microbial Community Standards (e.g., ZymoBIOMICS) | Provides a known, stable compositional ground truth for validating reference choices and correlation methods. |

| Stable Isotope-Labeled Internal Standards (for Metabolomics) | Acts as an ideal, invariant reference component for mass spectrometry-based data, correcting for technical variation. |

| Digital PCR (dPCR) Absolute Quantification Kits | Enables absolute quantification of a subset of targets (e.g., 16S rRNA gene copies) to validate relative abundance patterns. |

| Spike-in Control (e.g., External RNA Controls Consortium - ERCC) | Non-biological, known-concentration spikes added pre-extraction to track technical bias and assess compositionality. |

| Bioinformatic Tools (SparCC, SECOM, propr, CoDa packages in R) | Software implementations designed specifically for correlation analysis in compositional data, minimizing reference bias. |

Visualizations

Diagram 1: Impact of Dominant Reference on Log-Ratios

Diagram 2: Reference Sensitivity Analysis Workflow

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My high-dimensional compositional dataset (e.g., 16S rRNA, metabolomics) yields a correlation matrix that is singular or nearly singular, preventing inversion for partial correlation. What is the immediate diagnostic and solution?

A: This is a classic symptom of the "p >> n" problem, combined with compositional constraints. The correlation matrix is rank-deficient.

- Diagnostic: Calculate the condition number of your correlation matrix. A very high number (> 10^9) confirms ill-conditioning.

- Primary Solution: Apply L2 Regularization (Ridge). Add a small positive constant (λ) to the diagonal of the correlation matrix before inversion: `C_ridge = C + λI`. This stabilizes the inverse.

- Protocol:

  - Standardize your CLR-transformed data.

  - Compute the Pearson correlation matrix `C`.

  - Calculate the condition number of `C`.

  - Choose a λ value (e.g., 0.1, 0.01) and compute `C_ridge`.

  - Invert `C_ridge` to obtain a stabilized precision matrix `Ω`, then rescale it into a partial correlation matrix: `P_ij = -Ω_ij / sqrt(Ω_ii Ω_jj)`.

  - Use cross-validation to select the optimal λ that minimizes the reconstruction error of the covariance matrix.
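
As a rough sketch of this protocol, the snippet below builds a deliberately rank-deficient case (more features than samples), compares condition numbers before and after adding λ to the diagonal, and converts the inverse into partial correlations. The helper names `clr` and `ridge_partial_correlation` and the synthetic data are assumptions for illustration. `np.corrcoef` standardizes the columns implicitly, so no separate standardization step appears.

```python
import numpy as np

def clr(X):
    """Centered log-ratio transform of a strictly positive (samples x features) matrix."""
    logX = np.log(X)
    return logX - logX.mean(axis=1, keepdims=True)

def ridge_partial_correlation(Z, lam):
    """Return C, C + lam*I, and the partial correlations derived from the ridge inverse."""
    C = np.corrcoef(Z, rowvar=False)
    C_ridge = C + lam * np.eye(C.shape[0])
    omega = np.linalg.inv(C_ridge)            # regularized precision matrix
    d = np.sqrt(np.diag(omega))
    P = -omega / np.outer(d, d)               # P_ij = -omega_ij / sqrt(omega_ii * omega_jj)
    np.fill_diagonal(P, 1.0)
    return C, C_ridge, P

rng = np.random.default_rng(1)
X = rng.lognormal(size=(20, 50))              # synthetic data with p > n
C, C_ridge, P = ridge_partial_correlation(clr(X), lam=0.1)
cond_before = np.linalg.cond(C)
cond_after = np.linalg.cond(C_ridge)
print(f"condition number before: {cond_before:.3g}, after: {cond_after:.3g}")
```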

Q2: After applying regularization, my network remains overly dense with many spurious weak edges believed to be false positives from compositional noise. How can I filter these effectively?

A: Regularization stabilizes but does not inherently induce sparsity. A two-step Regularization + Thresholding approach is recommended.

- Solution: Apply Adaptive Thresholding to the regularized partial correlation matrix.

- Protocol:

  - Obtain your regularized partial correlation matrix `P_ridge` (from Q1).

  - Compute the distribution of all absolute partial correlation values.

  - Set a threshold based on the empirical null distribution. Common methods:

    - Hard Thresholding: Retain edges where `|P_ij| > threshold`. The threshold can be defined as the value at the 95th percentile of the null distribution generated via permutation testing.

    - Soft Thresholding (for scale-free networks): Apply the `sign(P_ij) * (|P_ij| - threshold)^+` function. This gradually shrinks weak edges to zero.

  - Validate the resulting sparse network using stability selection (see Q3).
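
The difference between the two thresholding rules is easiest to see on a tiny matrix. The sketch below uses a hand-written 3 x 3 partial correlation matrix and an arbitrary threshold of 0.1; both values are made up for illustration and are not derived from a permutation null.

```python
import numpy as np

def hard_threshold(P, tau):
    """Keep entries with |P_ij| > tau unchanged; set the rest (and the diagonal) to zero."""
    out = np.where(np.abs(P) > tau, P, 0.0)
    np.fill_diagonal(out, 0.0)
    return out

def soft_threshold(P, tau):
    """sign(P_ij) * max(|P_ij| - tau, 0): surviving edges are also shrunk by tau."""
    out = np.sign(P) * np.maximum(np.abs(P) - tau, 0.0)
    np.fill_diagonal(out, 0.0)
    return out

P = np.array([[1.00, 0.30, -0.05],
              [0.30, 1.00, -0.20],
              [-0.05, -0.20, 1.00]])
tau = 0.1
hard = hard_threshold(P, tau)
soft = soft_threshold(P, tau)
print(hard)   # the 0.30 and -0.20 edges survive at full strength
print(soft)   # the same edges survive, shrunk to about 0.20 and -0.10
```

Hard thresholding leaves surviving edge weights untouched, whereas soft thresholding biases them toward zero, which matters if edge weights are interpreted downstream.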

Q3: How do I validate that my chosen regularization (λ) and filtering threshold (τ) parameters are not arbitrary and produce a stable, reproducible network?

A: Implement Stability Selection combined with Subsampling.

- Protocol:

  - Draw `B` subsamples (e.g., B=100) of your data, each containing 80% of the samples, selected without replacement.

  - For each subsample `b`, apply your full pipeline: CLR transform, compute correlation, apply ridge regularization with your λ, and apply your threshold τ.

  - For each possible edge (i, j) in the network, compute its selection probability: `Π_{ij} = (1/B) * ∑_{b=1}^B I(edge_{ij} exists in subsample b)`.

  - Retain only edges with a selection probability above a cutoff (e.g., `Π_{ij} > 0.8`). This yields a consensus, stable network robust to small data perturbations.
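
A minimal sketch of this subsampling loop follows. The pipeline function `edges_from_data`, the parameter values (λ = 1.0, τ = 0.25), and the synthetic data, in which feature 1 is built as a noisy copy of feature 0, are assumptions chosen for the example rather than recommended settings.

```python
import numpy as np

def edges_from_data(X, lam, tau):
    """Hypothetical pipeline: CLR -> correlation -> ridge inverse -> partial corr -> hard threshold."""
    logX = np.log(X)
    Z = logX - logX.mean(axis=1, keepdims=True)
    C = np.corrcoef(Z, rowvar=False)
    omega = np.linalg.inv(C + lam * np.eye(C.shape[0]))
    d = np.sqrt(np.diag(omega))
    P = -omega / np.outer(d, d)
    A = np.abs(P) > tau
    np.fill_diagonal(A, False)
    return A

def stability_selection(X, lam, tau, B=100, frac=0.8, cutoff=0.8, seed=0):
    """Return selection probabilities Pi and the boolean consensus network Pi > cutoff."""
    rng = np.random.default_rng(seed)
    n, m = X.shape
    size = int(round(frac * n))
    counts = np.zeros((m, m))
    for _ in range(B):
        idx = rng.choice(n, size=size, replace=False)   # subsample without replacement
        counts += edges_from_data(X[idx], lam, tau)
    Pi = counts / B
    return Pi, Pi > cutoff

rng = np.random.default_rng(2)
X = rng.lognormal(size=(80, 8))                          # synthetic, zero-free data
X[:, 1] = X[:, 0] * np.exp(0.1 * rng.normal(size=80))    # planted association between 0 and 1
Pi, stable = stability_selection(X, lam=1.0, tau=0.25)
print(np.round(Pi, 2))
```

Inspecting the full `Pi` matrix, rather than only the thresholded network, shows how close each edge is to the cutoff.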

Q4: For ultra-sparse high-dimensional data (many zeros), standard correlation metrics fail. What are the robust alternatives within a compositional framework?

A: The issue is that the CLR is undefined when any component is zero, because it takes the logarithm of every value. A two-pronged approach is needed.

- Solution 1: Use a Zero-Aware Correlation Metric.

- SparCC or Proportionality (ρp/ρv) are designed for compositional data and are more robust to sparsity than Pearson on CLR data.

- Solution 2: Impute zeros sensibly before CLR.

- Protocol (Bayesian Multiplicative Replacement):

- Identify all zero values in the count matrix.

- Replace zeros with an estimate proportional to the detection limit, while proportionally scaling the non-zero parts of the samples to preserve the compositional structure.

- Apply CLR transformation to the imputed data.

- Proceed with regularized correlation analysis.

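
To make the replace-then-rescale idea concrete, here is a sketch of the simpler, non-Bayesian multiplicative replacement, followed by the CLR. It is a stand-in for illustration only: the Bayesian variant estimates the replacement values from the data, whereas here a fixed small value `delta` is used, and its default of `1 / m**2` is an arbitrary choice for this example.

```python
import numpy as np

def multiplicative_replacement(counts, delta=None):
    """Replace zeros with delta and rescale non-zero parts so each row still sums to 1."""
    X = np.asarray(counts, dtype=float)
    props = X / X.sum(axis=1, keepdims=True)
    if delta is None:
        delta = 1.0 / X.shape[1] ** 2          # assumption: arbitrary small default
    zeros = props == 0
    n_zeros = zeros.sum(axis=1, keepdims=True)
    # Non-zero parts are scaled by a common factor, so their ratios are preserved.
    return np.where(zeros, delta, props * (1.0 - n_zeros * delta))

def clr(P):
    """Centered log-ratio transform of strictly positive compositions."""
    logP = np.log(P)
    return logP - logP.mean(axis=1, keepdims=True)

counts = np.array([[10, 0, 30, 60],
                   [5, 5, 0, 0],
                   [1, 2, 3, 4]])
P = multiplicative_replacement(counts)
Z = clr(P)
print(np.round(P, 4))
print(np.round(Z, 3))
```

Because every non-zero entry in a row is multiplied by the same factor, ratios such as `P[0, 2] / P[0, 0]` are unchanged by the replacement.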

Data Presentation

Table 1: Comparison of Regularization Techniques for High-Dimensional Compositional Data

| Technique | Mechanism | Key Hyperparameter | Effect on Correlation Matrix | Best For |

|---|---|---|---|---|

| L2 (Ridge) | Adds constant to diagonal | λ (penalty strength) | Stabilizes inversion, shrinks coefficients uniformly | General ill-conditioned matrices, dense networks |

| L1 (Lasso) | Adds absolute value penalty | λ (penalty strength) | Forces weak coefficients to zero, induces sparsity | Sparse network recovery, feature selection |

| Graphical Lasso | L1 penalty on inverse matrix | λ (penalty strength) | Directly estimates sparse inverse covariance | Sparse partial correlation network inference |

| Thresholding | Culls edges below value | τ (cutoff value) | Removes weak edges post-hoc, simplifies network | Denoising after regularization |

Table 2: Impact of Regularization Parameter (λ) on Network Stability

| λ Value | Condition Number of C+λI | Avg. Edge Density (%) | Stability Selection Consistency (Avg. Π) |

|---|---|---|---|

| 0.001 | 1.2 x 10⁵ | 78% | 0.45 |

| 0.01 | 8.4 x 10³ | 65% | 0.62 |

| 0.1 | 9.5 x 10² | 52% | 0.81 |

| 1.0 | 1.1 x 10² | 34% | 0.85 |

Experimental Protocols

Protocol 1: Regularized Partial Correlation Network Analysis (Core Workflow)

- Input: Raw compositional count/abundance matrix `X` (n samples x m features).

- Preprocessing: Apply Bayesian Multiplicative Replacement to zeros. Perform CLR transformation.

- Correlation: Compute Pearson correlation matrix `C` on CLR-transformed data.

- Regularization: Apply Ridge: `C_ridge = C + λI`. λ chosen via 5-fold cross-validation.

- Inversion: Compute the inverse `Ω = inv(C_ridge)` and rescale it to partial correlations: `P_ij = -Ω_ij / sqrt(Ω_ii Ω_jj)`.

- Filtering: Apply hard threshold `τ` to `P`, where `τ` is the 90th percentile of absolute values from a permuted null distribution.

- Validation: Perform stability selection with 100 subsamples. Final network contains edges with `Π > 0.8`.
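
The regularization step leaves open how the 5-fold cross-validation scores each λ. One common option, used here purely as an assumption, is the held-out Gaussian negative log-likelihood, `tr(S_test · inv(C_ridge)) + log det(C_ridge)`, where `S_test` is the correlation matrix of the held-out fold. The function names, the λ grid, and the synthetic data are likewise illustrative.

```python
import numpy as np

def heldout_nll(S_test, C_train, lam):
    """Gaussian negative log-likelihood (up to constants) of held-out data under C_train + lam*I."""
    C_ridge = C_train + lam * np.eye(C_train.shape[0])
    _, logdet = np.linalg.slogdet(C_ridge)
    return np.trace(np.linalg.solve(C_ridge, S_test)) + logdet

def choose_lambda(Z, lambdas, n_folds=5, seed=0):
    """Pick the lambda with the lowest average held-out score across folds."""
    rng = np.random.default_rng(seed)
    n = Z.shape[0]
    folds = np.array_split(rng.permutation(n), n_folds)
    scores = np.zeros(len(lambdas))
    for test_idx in folds:
        train_idx = np.setdiff1d(np.arange(n), test_idx)
        C_train = np.corrcoef(Z[train_idx], rowvar=False)
        S_test = np.corrcoef(Z[test_idx], rowvar=False)
        for i, lam in enumerate(lambdas):
            scores[i] += heldout_nll(S_test, C_train, lam)
    scores /= n_folds
    return lambdas[int(np.argmin(scores))], scores

rng = np.random.default_rng(3)
logX = np.log(rng.lognormal(size=(40, 15)))             # synthetic, zero-free data
Z = logX - logX.mean(axis=1, keepdims=True)             # CLR transform
lambdas = [0.001, 0.01, 0.1, 1.0]
best_lam, cv_scores = choose_lambda(Z, lambdas)
print("selected lambda:", best_lam)
print("mean held-out scores:", np.round(cv_scores, 2))
```

Very small λ values are penalized through the trace term, because the near-singular training matrix produces a huge inverse, while very large values are penalized for fitting the held-out correlations poorly.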

Protocol 2: Permutation Test for Null Edge Distribution

- For `k` in 1 to 1000:

  - Randomly permute the CLR-transformed values of each feature (column-wise) to break associations.

  - Compute the correlation matrix `C_perm` and the regularized partial correlation matrix `P_perm`.

  - Store all off-diagonal absolute values of `P_perm` into a vector `V_k`.

- Pool all `V_1` to `V_1000` values to form the null distribution `D_null`.

- The significance threshold `τ` for the real data can be set as the (1 - α) quantile of `D_null` (e.g., α=0.05).
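
The permutation protocol translates fairly directly into code. In the sketch below each column is shuffled independently, the upper triangle is used so that each feature pair is counted once, and only 200 permutations are run to keep the example fast. The function names, λ = 0.1, and the synthetic data are assumptions for illustration.

```python
import numpy as np

def ridge_partial_corr(Z, lam):
    """Partial correlations from the inverse of the ridge-regularized correlation matrix."""
    C = np.corrcoef(Z, rowvar=False)
    omega = np.linalg.inv(C + lam * np.eye(C.shape[0]))
    d = np.sqrt(np.diag(omega))
    return -omega / np.outer(d, d)

def permutation_threshold(Z, lam, n_perm=1000, alpha=0.05, seed=0):
    """Return tau, the (1 - alpha) quantile of pooled null |partial correlations|, and D_null."""
    rng = np.random.default_rng(seed)
    m = Z.shape[1]
    iu = np.triu_indices(m, k=1)               # each feature pair counted once
    null_values = []
    for _ in range(n_perm):
        # Shuffle every column independently to break between-feature associations.
        Z_perm = np.column_stack([rng.permutation(Z[:, j]) for j in range(m)])
        P_perm = ridge_partial_corr(Z_perm, lam)
        null_values.append(np.abs(P_perm[iu]))
    D_null = np.concatenate(null_values)
    return np.quantile(D_null, 1.0 - alpha), D_null

rng = np.random.default_rng(4)
logX = np.log(rng.lognormal(size=(50, 10)))    # synthetic, zero-free data
Z = logX - logX.mean(axis=1, keepdims=True)    # CLR transform
tau, D_null = permutation_threshold(Z, lam=0.1, n_perm=200)
print(f"tau at alpha=0.05: {tau:.3f}")
```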

Visualizations

Title: Regularized Network Analysis for Compositional Data

Title: L2 vs L1 Regularization Effects on Network Inference

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Context | Example/Note |

|---|---|---|

| CLR Transformation | Centers log-ratio transformed data to address compositional constraint, preparing it for standard multivariate methods. | Implement via clr() function in compositions (R) or skbio.stats.composition.clr (Python). |

| Graphical Lasso Solver | Algorithm to efficiently estimate a sparse inverse covariance matrix using L1 penalty. | Use glasso package in R or sklearn.covariance.graphical_lasso in Python. |

| Stability Selection Library | Implements subsampling routines to assess the reproducibility of selected network edges. | c060 R package or custom implementation with numpy and scikit-learn. |

| Bayesian Multiplicative Replacement | Sensibly replaces zeros in compositional data without distorting relative structure. | zCompositions::cmultRepl (R). In Python, skbio.stats.composition.multiplicative_replacement offers the simpler, non-Bayesian multiplicative replacement. |

| Proportionality Metric (ρp) | A robust association measure for compositional data, less sensitive to sparsity than correlation. | Use propr R package. Preferable to Pearson for very sparse datasets. |

| Condition Number Calculator | Diagnoses the degree of collinearity/ill-conditioning in a correlation matrix. | numpy.linalg.cond (Python) or kappa() (R). A high number (>10^9) indicates a problem. |

Optimizing Computational Workflows for Large-Scale Datasets and Reproducibility

Technical Support Center: Troubleshooting Guides & FAQs

Context: This support center addresses challenges in dealing with compositional data bias in correlation methods research, focusing on microbiome, genomics, and proteomics datasets where relative abundance data are common.

Frequently Asked Questions (FAQs)

Q1: My correlation results (e.g., Spearman, Pearson) on microbiome relative abundance data change dramatically after a simple log-transformation. Is this expected, and how should I proceed? A: Yes, this is a classic sign of compositional bias. Correlation coefficients calculated on raw relative abundances (or read counts) are not reliable due to the "closed sum" constraint. Note that Spearman correlation is rank-based and therefore unchanged by an element-wise log; it shifts only under sample-wise transformations such as the CLR, whereas Pearson is sensitive to both. You must use compositionally aware methods. First, apply a Centered Log-Ratio (CLR) transformation, using a robust estimator of the geometric mean so that zeros can be handled. Then, compute standard correlations on the CLR-transformed values, or proceed directly to methods like SparCC or proportionality (e.g., the phi statistic).
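
The effect of the "closed sum" constraint can be reproduced with a short simulation. In this sketch, three features are generated independently on an absolute scale and then converted to proportions. The sample size, the number of features, and the log-normal parameters are arbitrary choices for illustration.

```python
import numpy as np

rng = np.random.default_rng(42)
n_samples, n_features = 2000, 3

# Independent absolute abundances: no true association between features.
absolute = rng.lognormal(mean=0.0, sigma=0.5, size=(n_samples, n_features))
# Closure: divide each sample by its total so that rows sum to 1.
relative = absolute / absolute.sum(axis=1, keepdims=True)

def mean_offdiag_corr(X):
    """Average Pearson correlation over all distinct feature pairs."""
    C = np.corrcoef(X, rowvar=False)
    iu = np.triu_indices(C.shape[0], k=1)
    return C[iu].mean()

r_abs = mean_offdiag_corr(absolute)
r_rel = mean_offdiag_corr(relative)
print(f"mean correlation, absolute data: {r_abs:.3f}")
print(f"mean correlation, relative data: {r_rel:.3f}")
```

The absolute data show correlations near zero, while the proportions are clearly negatively correlated even though nothing links the features biologically. With few features the effect is strongest, because each part takes up a large share of the fixed total.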

Q2: My pipeline works on my local machine but fails on the high-performance computing (HPC) cluster with a "library not found" error. What's wrong? A: This is an environment reproducibility issue. Your local Conda or Python environment is not replicated on the HPC.

- Solution 1: Use containerization. Create a Docker or Singularity image of your entire analysis environment and run it on the cluster.

- Solution 2: Use a package manager that allows environment export. With Conda, create an `environment.yml` file (`conda env export > environment.yml`) and use it to rebuild the environment on the HPC. Note: Specify channels and versions for strict reproducibility.

- Solution 3: For Python-only workflows, use `pip freeze > requirements.txt` and install on the cluster within a virtual environment.

Q3: I am getting memory errors when running pairwise correlation on a large feature table (e.g., 500 samples x 50,000 microbial OTUs). How can I optimize this? A: Direct computation of a 50k x 50k correlation matrix is memory-intensive (~20 GB for double precision).

- Solution 1: Use optimized, chunk-based algorithms from libraries like `dask_ml`, or parallelize the computation with `joblib` (the parallelization library used by scikit-learn).

- Solution 2: Filter features first (e.g., prevalence or variance filtering) to reduce dimensionality.

- Solution 3: If using compositionally aware methods like SparCC, leverage its internal bootstrapping and variance estimation, which is less memory-intensive than all-at-once matrix computation.
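
The chunk-based idea in Solution 1 can be sketched without any extra libraries: standardize the columns once, then compute the correlation matrix one tile at a time and keep only a summary of each tile. The function names, the block size, and the summary (a count of strongly correlated pairs) are assumptions for illustration. The sketch also assumes that no column has zero variance.

```python
import numpy as np

full_matrix_gb = 50_000 ** 2 * 8 / 1e9   # 50k x 50k float64 entries, in GB

def correlation_blocks(Z, block_size):
    """Yield (row_start, col_start, tile) for the upper-triangular tiles of the correlation matrix."""
    n, m = Z.shape
    Zs = (Z - Z.mean(axis=0)) / Z.std(axis=0, ddof=1)   # assumes no constant columns
    for i in range(0, m, block_size):
        for j in range(i, m, block_size):
            yield i, j, Zs[:, i:i + block_size].T @ Zs[:, j:j + block_size] / (n - 1)

def count_strong_pairs(Z, threshold, block_size=1000):
    """Count feature pairs with |r| > threshold without holding the full matrix in memory."""
    total = 0
    for i, j, tile in correlation_blocks(Z, block_size):
        strong = np.abs(tile) > threshold
        if i == j:
            strong = np.triu(strong, k=1)    # diagonal tile: skip self-pairs and duplicates
        total += int(strong.sum())
    return total

rng = np.random.default_rng(5)
Z_demo = rng.normal(size=(30, 25))           # small synthetic stand-in for CLR data
n_strong = count_strong_pairs(Z_demo, threshold=0.3, block_size=7)
print(f"full 50k x 50k matrix: {full_matrix_gb:.0f} GB; strong pairs in demo: {n_strong}")
```

Peak memory is governed by the tile size (`block_size` squared entries) rather than by the number of features squared, and the independent tiles are natural units for parallel workers.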

Q4: How do I properly handle zeros in my compositional dataset before applying a CLR transformation? A: Zeros are non-trivial in compositional data and can be structural (true absence) or sampling (below detection). Incorrect handling introduces bias.

- Identify: Use prevalence filtering (e.g., remove features with >80% zeros).

- Impute: For remaining zeros, use a dedicated method:

- Simple replacement: Replace with a small positive value (e.g., 0.5) or pseudo-count. Use cautiously.