Navigating the High-Dimensional Maze: Core Challenges and Modern Solutions for Microbiome Data Analysis

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the pervasive challenge of high dimensionality in microbiome datasets. We explore the foundational nature of the 'curse of dimensionality,' detailing how thousands of microbial taxa measured from relatively few samples create statistical and computational bottlenecks. The article then reviews modern methodological solutions, including advanced feature selection, regularization techniques, and dimensionality reduction. It offers practical troubleshooting advice for common pitfalls, such as sparsity and batch effects, and discusses critical validation and comparative frameworks to ensure robust, reproducible biological insights. By synthesizing current best practices, this guide aims to equip scientists with the knowledge to extract meaningful signals from complex microbial communities.

The High-Dimensional Microbiome: Understanding the Data Deluge and Its Inherent Biases

High dimensionality, denoted as p >> n, is a fundamental challenge in microbiome research. Here, p (the number of features, such as microbial taxa or genes) vastly exceeds n (the number of samples or observations). This characteristic, intrinsic to next-generation sequencing data, creates unique statistical and computational hurdles for deriving robust biological insights.

Quantitative Landscape of Microbial High Dimensionality

The scale of the p >> n problem is illustrated by comparing typical study designs.

Table 1: Dimensionality Scale in Common Microbial Profiling Studies

| Profiling Method | Typical Sample Size (n) | Typical Feature Count (p) | Ratio (p:n) | Primary Data Type |

|---|---|---|---|---|

| 16S rRNA Amplicon (V4 region) | 100 - 500 | 1,000 - 10,000 OTUs/ASVs | 10:1 to 100:1 | Count Table |

| Shotgun Metagenomics | 50 - 200 | 1 - 10 Million Genes (≈ 5,000 - 10,000 KEGG/COG orthologous groups) | 100:1 to 20,000:1 | Read Counts / Abundance |

| Metatranscriptomics | 20 - 100 | 10,000 - 50,000 Expressed Transcripts | 500:1 to 2,500:1 | Read Counts |

Core Statistical Challenges and Methodological Approaches

The Curse of Dimensionality & Overfitting

In a high-dimensional space, samples become sparse, making it difficult to estimate parameters reliably. Models can fit noise rather than signal, leading to poor generalizability.

Protocol: Cross-Validation for High-Dimensional Models

- Objective: To obtain an unbiased estimate of model prediction error and prevent overfitting.

- Procedure:

- Data Partitioning: Randomly split the dataset into k folds (typically k=5 or 10).

- Iterative Training/Validation: For each iteration i (1 to k):

- Hold out fold i as the validation set.

- Train the model (e.g., LASSO, Random Forest) on the remaining k-1 folds.

- Apply the trained model to predict the held-out fold i and calculate the prediction error.

- Aggregation: Average the prediction errors from all k iterations to compute the cross-validation error.

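- Hyperparameter Tuning: Repeat the training/validation and aggregation steps for different model parameters (e.g., regularization strength λ) and select the value yielding the lowest CV error. Because the minimum CV error is optimistically biased once it has been used for selection, report final performance on data not used for tuning (e.g., the outer loop of a nested cross-validation).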
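The protocol above can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation: it swaps in closed-form ridge regression for the LASSO or Random Forest named in the protocol so that the example stays dependency-free, and the helper names (`ridge_fit`, `kfold_cv_error`, `select_lambda`) and the toy data are assumptions made for this sketch.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Closed-form ridge fit on centered data; returns (coef, intercept)."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean
    n = Xc.shape[0]
    # Dual form: solve an n x n system, which is cheaper than p x p when p >> n.
    dual = np.linalg.solve(Xc @ Xc.T + lam * np.eye(n), yc)
    coef = Xc.T @ dual
    return coef, y_mean - x_mean @ coef

def kfold_cv_error(X, y, lam, k=5, seed=0):
    """Mean squared prediction error averaged over k held-out folds."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)
    errors = []
    for i in range(k):
        val = folds[i]  # fold i is held out
        train = np.concatenate([folds[j] for j in range(k) if j != i])
        coef, intercept = ridge_fit(X[train], y[train], lam)
        pred = X[val] @ coef + intercept
        errors.append(np.mean((y[val] - pred) ** 2))
    return float(np.mean(errors))

def select_lambda(X, y, lambdas, k=5, seed=0):
    """Return the lambda with the lowest CV error plus all CV errors."""
    cv_errors = {lam: kfold_cv_error(X, y, lam, k, seed) for lam in lambdas}
    return min(cv_errors, key=cv_errors.get), cv_errors

# Hypothetical toy data with p >> n: 60 samples, 500 features, 5 informative.
rng = np.random.default_rng(42)
X_demo = rng.normal(size=(60, 500))
beta_demo = np.zeros(500)
beta_demo[:5] = 2.0
y_demo = X_demo @ beta_demo + rng.normal(scale=0.5, size=60)
best_lam, errors = select_lambda(X_demo, y_demo, [0.1, 1.0, 10.0, 100.0])
```

The same fold assignment is reused for every candidate λ (fixed `seed`), so differences in CV error reflect the penalty rather than the random split.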

Regularization for Feature Selection

Regularization techniques are essential to constrain model complexity and select biologically relevant features from the high-dimensional pool.

Protocol: Sparse Regression using LASSO (Least Absolute Shrinkage and Selection Operator)

- Objective: To perform feature selection and regression simultaneously by penalizing the absolute size of coefficients.

- Procedure:

- Data Preprocessing: Center and optionally scale features. Transform microbial counts (e.g., CLR, log-transform).

- Model Specification: Define the objective function: Minimize {RSS + λ * Σ|βⱼ|}, where RSS is the residual sum of squares, βⱼ are feature coefficients, and λ is the regularization parameter.

- Path Calculation: Use coordinate descent algorithms (e.g., via glmnet in R) to compute coefficient paths for a descending sequence of λ values.

- Optimal λ Selection: Employ 10-fold cross-validation (as above) to find the λ value that minimizes prediction error (λmin) or the most parsimonious model within one standard error (λ1se).

- Feature Identification: Extract features with non-zero coefficients in the model at the chosen λ.
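To make the objective and the coordinate descent step concrete, here is a small NumPy sketch that minimizes RSS + λ Σ|βⱼ| exactly as written above. It is an illustration under simplifying assumptions (centered inputs, a single λ, no warm starts or screening rules) and does not reproduce glmnet, which scales the loss differently; `lasso_cd` and the toy data are hypothetical names introduced here.

```python
import numpy as np

def soft_threshold(z, t):
    """Shrink z toward zero by t, returning exactly zero when |z| <= t."""
    return np.sign(z) * np.maximum(np.abs(z) - t, 0.0)

def lasso_cd(X, y, lam, n_iter=200, tol=1e-8):
    """Minimize RSS + lam * sum(|beta_j|) by cyclic coordinate descent.

    Assumes X and y have already been centered.
    """
    X = np.asarray(X, dtype=float)
    residual = np.asarray(y, dtype=float).copy()  # equals y because beta starts at 0
    beta = np.zeros(X.shape[1])
    col_ss = (X ** 2).sum(axis=0)
    for _ in range(n_iter):
        max_change = 0.0
        for j in range(X.shape[1]):
            if col_ss[j] == 0.0:
                continue
            # Inner product of feature j with the residual that excludes feature j.
            rho = X[:, j] @ residual + col_ss[j] * beta[j]
            new_bj = soft_threshold(rho, lam / 2.0) / col_ss[j]
            delta = new_bj - beta[j]
            if delta != 0.0:
                residual -= X[:, j] * delta
                beta[j] = new_bj
                max_change = max(max_change, abs(delta))
        if max_change < tol:
            break
    return beta

# Hypothetical toy data: 50 samples, 200 features, 3 informative.
rng = np.random.default_rng(1)
X_raw = rng.normal(size=(50, 200))
beta_true = np.zeros(200)
beta_true[:3] = 3.0
y_raw = X_raw @ beta_true + rng.normal(scale=0.5, size=50)
X_c = X_raw - X_raw.mean(axis=0)
y_c = y_raw - y_raw.mean()

# For this objective, every coefficient is zero once lam >= 2 * max|x_j . y|.
lam_max = 2.0 * np.max(np.abs(X_c.T @ y_c))
beta_hat = lasso_cd(X_c, y_c, lam=0.2 * lam_max)
selected = np.flatnonzero(beta_hat)  # indices of features with non-zero coefficients
```

In practice λ would be chosen by cross-validation over a path of values rather than fixed at a fraction of `lam_max` as done here.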

Table 2: Key Regularization Methods for Microbiome Data

| Method | Penalty Term | Effect on Coefficients | Use Case in Microbiome Studies |

|---|---|---|---|

| LASSO | λ * Σ \|βⱼ\| | Sets weak coefficients to zero; selects sparse feature set. | Identifying a minimal set of discriminatory taxa for disease prediction. |

| Ridge | λ * Σ βⱼ² | Shrinks coefficients uniformly but retains all features. | Modeling when most taxa have small, non-zero effects. |

| Elastic Net | λ₁ * Σ \|βⱼ\| + λ₂ * Σ βⱼ² | Compromise between LASSO and Ridge; handles correlated features. | Analyzing microbial communities where taxa are phylogenetically correlated. |

Compositionality and Sparsity

Microbiome data are compositional (sum-constrained) and contain many zeros, confounding correlation and distance measures.

Protocol: Centered Log-Ratio (CLR) Transformation

- Objective: To transform compositional data into a Euclidean space for downstream analysis while mitigating the closure problem.

- Procedure:

- Handling Zeros: Apply a pseudocount (e.g., 1) or a more sophisticated method (e.g., Bayesian multiplicative replacement) to all features.

- Geometric Mean Calculation: For each sample i, calculate the geometric mean g(xᵢ) of all p feature abundances.

- Log-Ratio Computation: Transform each feature abundance xᵢⱼ in sample i: clr(xᵢⱼ) = log[ xᵢⱼ / g(xᵢ) ].

- Output: An n × p matrix where each sample vector is centered (sums to zero), enabling the use of standard covariance-based methods.
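A minimal NumPy sketch of the transformation, assuming samples are in rows and a simple pseudocount is acceptable for zero handling (the function name and example counts are illustrative only):

```python
import numpy as np

def clr_transform(counts, pseudocount=1.0):
    """Centered log-ratio transform of an n x p count matrix (samples in rows)."""
    x = np.asarray(counts, dtype=float) + pseudocount
    log_x = np.log(x)
    # Subtracting the per-sample mean of the logs is the same as dividing
    # each abundance by the sample's geometric mean before taking the log.
    return log_x - log_x.mean(axis=1, keepdims=True)

counts = np.array([[10, 0, 5, 85],
                   [0, 20, 30, 50]])
clr = clr_transform(counts)  # each row of clr sums to zero
```

Working on the log scale avoids computing the geometric mean as an explicit product, which can overflow or underflow when p is large.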

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for High-Dimensional Microbiome Analysis

| Item / Reagent | Function / Purpose | Example in Workflow |

|---|---|---|

| DNA Extraction Kit (with bead-beating) | Ensures robust lysis of diverse bacterial cell walls for unbiased community representation. | Sample preparation prior to 16S or shotgun sequencing. |

| PCR Inhibitor Removal Reagents | Reduces artifacts in amplification, crucial for accurate initial feature generation. | Step during DNA extraction and library preparation. |

| Mock Microbial Community Standards | Provides a known mixture of genomes to assess technical variability, batch effects, and validate the p feature generation pipeline. | Quality control alongside experimental samples. |

| Indexed Adapter Primers (Dual-Indexing) | Allows multiplexing of hundreds of samples in a single sequencing run, enabling adequate sample size (n) for p>>n studies. | Library preparation for NGS. |

| Bioinformatic Pipeline (e.g., QIIME 2, DADA2) | Processes raw sequence reads into amplicon sequence variants (ASVs) or OTUs, defining the high-dimensional feature set (p). | Initial data processing from FASTQ to feature table. |

| Statistical Software Package (e.g., R phyloseq, mixMC) | Provides specialized tools for handling compositional, sparse, and high-dimensional biological data. | Downstream statistical analysis and visualization. |

Advanced Analytical Framework

A robust analysis pipeline must integrate methods to address dimensionality, compositionality, and sparsity simultaneously.

The p >> n paradigm defines the analysis of microbial profiles, demanding a specialized methodological arsenal. Success hinges on coupling rigorous experimental design—maximizing informative sample size (n)—with statistical techniques that embrace compositionality, sparsity, and high dimensionality to extract reproducible and biologically meaningful insights.

The analysis of microbiome datasets represents a paradigm of high-dimensional biological data. The core challenges stem from the immense scale and inherent interdependence of features across three primary dimensions: the vast array of microbial Taxa, the interconnected networks of Functional Pathways they encode, and the non-linear Temporal Dynamics of their interactions. This whitepaper deconstructs these three core sources of complexity, framing them within the broader thesis on overcoming the "curse of dimensionality" in microbiome research to enable robust biomarker discovery, mechanistic understanding, and therapeutic intervention.

Dimensionality of Taxa: From OTUs to Strains

The primary axis of complexity is the sheer number of potential biological entities. Moving from 16S rRNA gene amplicon sequencing to shotgun metagenomics and metatranscriptomics raises both resolution and feature count, in some cases by several orders of magnitude.

Table 1: Quantitative Scale of Taxonomic Dimensionality in Microbiome Studies

| Sequencing Method | Typical Feature Count | Resolution Level | Key Challenge |

|---|---|---|---|

| 16S rRNA Amplicon (V3-V4) | 1,000 - 10,000 OTUs/ASVs | Genus/Species | Phylogenetic inference, functional imputation |

| Shotgun Metagenomics | 1 - 10 Million Genes; 500 - 10,000 Mapped Species | Species/Strain | Assembly, binning, reference database completeness |

| Metatranscriptomics | ~5-20% of Metagenomic Feature Count | Active Community Subset | RNA stability, host RNA depletion, activity inference |

Experimental Protocol: High-Resolution Strain Tracking via Hi-C Metagenomics

Objective: To resolve strain-level heterogeneity and link phage/bacterial host interactions.

- Sample Fixation: Treat sample with 3% formaldehyde for 30min at room temperature to crosslink physically interacting DNA.

- Lysis & Chromatin Digestion: Lyse cells, digest with restriction enzyme (e.g., HindIII).

- Proximity Ligation: Dilute and perform intramolecular ligation with T4 DNA ligase to join crosslinked DNA fragments.

- Crosslink Reversal & DNA Purification: Reverse crosslinks with Proteinase K, purify DNA.

- Sequencing Library Prep: Fragment DNA, size-select (~300-700bp), prepare Illumina-compatible libraries.

- Bioinformatic Analysis: Map reads to reference genomes; pairs mapping to different genomic loci indicate physical proximity, enabling binning into single strain genomes and linking of prophages to hosts.

Complexity of Functional Pathways: From Genes to Networks

Functional redundancy and modularity across taxa add a layer of complexity orthogonal to taxonomy. The same metabolic function can be performed by different genes (isozymes) across different organisms.

Table 2: Key Functional Databases and Their Dimensionality

| Database | Core Content | Typical Pathway/Module Count | Use Case |

|---|---|---|---|

| KEGG (KO) | KEGG Orthologs, Pathways | ~500 pathways; ~10,000 KOs | Broad metabolic mapping |

| MetaCyc | Metabolic Pathways & Enzymes | ~2,800 pathways | Detailed metabolic reconstruction |

| dbCAN | Carbohydrate-Active Enzymes | ~700 enzyme families | Polysaccharide degradation analysis |

| VFDB | Virulence Factors | ~2,000 factors | Pathogenic potential assessment |

Diagram 1: Functional Pathway Inference Workflow from Metagenomic Data

Experimental Protocol: Metatranscriptomic Analysis of Pathway Activity

Objective: To quantify the expression of functional pathways in a community.

- RNA Extraction & Stabilization: Use a bead-beating kit with guanidine thiocyanate (e.g., TRIzol) to simultaneously lyse cells and inhibit RNases.

- Host RNA Depletion: Treat total RNA with a human/mouse rRNA depletion kit (e.g., NEBNext Microbiome RNA Enrichment Kit).

- mRNA Enrichment & Library Prep: Enrich prokaryotic mRNA via poly-A tail-independent methods (e.g., RiboZero rRNA depletion). Fragment RNA, synthesize cDNA, and prepare strand-specific Illumina libraries.

- Sequencing & Mapping: Perform 150bp paired-end sequencing. Map reads to a curated gene catalog (from matched metagenomes) using Bowtie2 or Kallisto.

- Pathway-Level Aggregation: Annotate genes with KEGG KOs using eggNOG-mapper. Aggregate normalized transcript counts (TPM) per sample to KEGG module completeness and activity scores using HUMAnN3.

Temporal Dynamics: Non-Linear Trajectories and Perturbations

Microbiomes are dynamic systems. Time-series data introduces autocorrelation, periodicity, and perturbation responses, requiring specialized analytical models.

Table 3: Common Temporal Patterns and Analytical Challenges

| Pattern Type | Description | Example | Analysis Method |

|---|---|---|---|

| Diurnal Rhythms | 24-hour oscillations driven by host/circadian cues | Gut microbial metabolite flux | Fourier analysis, JTK_Cycle |

| Succession | Directional change over long timescales | Infant gut maturation | Markov models, Linear mixed-effects models |

| Stability & Resilience | Resistance to and recovery from perturbation | Antibiotic response | Distance decay, State transition models |

| Cross-Domain Interaction | Coupled dynamics between microbes and host markers | Microbiome-immune cytokine interplay | Granger causality, Dynamic Bayesian Networks |

Diagram 2: State Transitions in Microbial Community Dynamics

Experimental Protocol: Longitudinal Sampling and Analysis

Objective: To model microbiome stability and response to intervention.

- Study Design: Collect baseline samples (3 timepoints over 1 week pre-intervention). Administer defined perturbation (e.g., drug, probiotic). Collect dense longitudinal samples (e.g., daily for 2 weeks, then weekly for 2 months).

- Sample Processing: Uniformly process all samples in a single, randomized batch to minimize technical variation. Use internal spike-in controls (e.g., known quantity of Salmonella bongori cells) for absolute quantification.

- Sequencing & Core Analysis: Perform shotgun metagenomic sequencing. Process via standardized pipeline (e.g., bioBakery) to generate taxonomic (MetaPhlAn) and functional (HUMAnN) profiles.

- Temporal Modeling: Calculate Bray-Curtis dissimilarity over time. Use PCoA (classical MDS) or NMDS on the dissimilarity matrix to visualize trajectories. Apply Generalized Additive Mixed Models (GAMMs) to smooth temporal trends for individual taxa/pathways. Use Linkage Disequilibrium Network Analysis to infer time-varying species associations.
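The first step of the temporal modeling, tracking Bray-Curtis dissimilarity over time, can be sketched as follows. The sketch assumes one subject, timepoints in rows, and a baseline defined as the mean of the pre-intervention profiles; these choices and the function names are assumptions for illustration, and the ordination, GAMM, and network steps are not covered.

```python
import numpy as np

def bray_curtis(x, y):
    """Bray-Curtis dissimilarity between two non-negative abundance vectors."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    return float(np.abs(x - y).sum() / (x + y).sum())

def distance_from_baseline(profiles, n_baseline):
    """Dissimilarity of every timepoint to the mean of the first n_baseline rows."""
    profiles = np.asarray(profiles, dtype=float)
    baseline = profiles[:n_baseline].mean(axis=0)
    return [bray_curtis(baseline, row) for row in profiles]

# Hypothetical two-taxon series: two baseline samples, a perturbation, partial recovery.
series = [[50, 50],
          [50, 50],
          [90, 10],
          [60, 40]]
trajectory = distance_from_baseline(series, n_baseline=2)  # approximately [0.0, 0.0, 0.4, 0.1]
```

A return of the trajectory toward zero after the perturbation is one simple readout of resilience.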

The Scientist's Toolkit: Research Reagent & Material Solutions

Table 4: Essential Reagents and Materials for High-Dimensional Microbiome Research

| Item | Function/Benefit | Example Product/Category |

|---|---|---|

| Stool/DNA Stabilization Buffer | Preserves microbial composition at collection; inhibits nuclease activity. Critical for longitudinal studies. | OMNIgene•GUT, RNAlater, Zymo DNA/RNA Shield |

| Mechanical Lysis Beads | Ensures robust lysis of Gram-positive bacteria and spores for unbiased DNA/RNA extraction. | 0.1mm & 0.5mm zirconia/silica beads |

| Host Depletion Kits | Selectively removes host (human/mouse) DNA/RNA, increasing sequencing depth on microbial fraction. | NEBNext Microbiome DNA/RNA Enrichment Kits |

| Spike-in Control Standards | Allows absolute quantification and cross-sample/batch normalization. | Known quantities of synthetic cells (e.g., Salmonella bongori), synthetic DNA sequences (Sequins). |

| Phospholipid Removal Beads | Critical for metabolomic sample prep; removes interfering lipids for better MS detection of microbial metabolites. | Ostro Pass-Through Sample Preparation Plate |

| Anaerobic Chamber/Workstation | Enables cultivation and manipulation of oxygen-sensitive commensals for functional validation. | Coy Lab Products, Baker Ruskinn |

| Gnotobiotic Mouse Models | Provides a controlled, germ-free host environment for causal microbiome studies. | Taconic Biosciences, Jackson Laboratory Gnotobiotic Services |

| Microfluidic Cultivation Chips | High-throughput cultivation of uncultured microbes via single-cell encapsulation and growth. | MicrobeDial, Microbial version of Fluidigm C1 |

Thesis Context: This whitepaper examines the fundamental challenges of high dimensionality within microbiome research, where datasets comprising thousands of microbial taxa (features) per sample are common. The curse of dimensionality critically undermines statistical inference, distorts distance metrics, and leads to extreme data sparsity, directly impacting the reproducibility and translational potential of findings in therapeutic development.

Core Challenges in High-Dimensional Microbiome Data

High-dimensional microbiome data, typically generated via 16S rRNA gene amplicon or shotgun metagenomic sequencing, presents unique statistical and computational hurdles.

Statistical Power and False Discovery

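When the number of features (p) far exceeds the number of samples (n), classical models such as ordinary least squares no longer have a unique solution. Multiple testing compounds the problem: at a fixed per-test threshold α, the expected number of false positives grows linearly with the number of tests m, and the probability of at least one false positive, 1 − (1 − α)^m for independent tests, rapidly approaches 1.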

Table 1: Impact of Dimensionality on False Discovery Rate (FDR)

| Number of Hypotheses (Features Tested) | Uncorrected P-value Threshold | Expected False Positives (All Nulls True) | Bonferroni Per-Test Threshold for FWER = 0.05 |

|---|---|---|---|

| 100 (Low-Dim) | 0.05 | 5 | 0.0005 |

| 1,000 (Typical Amplicon) | 0.05 | 50 | 0.00005 |

| 10,000 (Metagenomic) | 0.05 | 500 | 0.000005 |
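The arithmetic behind the table can be reproduced with a short sketch. It assumes the worst case in which every null hypothesis is true, and it adds the probability of at least one false positive under independent tests, a quantity not shown in the table; the function name is illustrative.

```python
def multiple_testing_summary(m, alpha=0.05):
    """Worst-case multiple-testing quantities for m tests at per-test level alpha."""
    return {
        # Expected number of false positives if every null hypothesis is true.
        "expected_false_positives": alpha * m,
        # Per-test threshold that keeps the family-wise error rate at alpha.
        "bonferroni_threshold": alpha / m,
        # Chance of at least one false positive, assuming independent tests.
        "prob_at_least_one_fp": 1.0 - (1.0 - alpha) ** m,
    }

summary = {m: multiple_testing_summary(m) for m in (100, 1_000, 10_000)}
```

Even at m = 100 the probability of at least one false positive already exceeds 0.99, which is why uncorrected thresholds are unusable at microbiome scale.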

Experimental Protocol for FDR Control (q-value calculation):

- Input: Obtain a list of p-values from testing all m features (e.g., taxa) for association with a phenotype.

- Ordering: Sort the p-values in increasing order: \( p_{(1)} \leq p_{(2)} \leq \dots \leq p_{(m)} \).

- Estimate π₀: Estimate the proportion of true null hypotheses (\( \pi_0 \)) using a bootstrap or smoothing method on the p-value histogram.

- Calculate q-values: For each ordered p-value, compute the q-value as: \( q_{(i)} = \min_{t \geq p_{(i)}} \frac{ \hat{\pi}_0 \cdot m \cdot t }{ \#\{ p_j \leq t \} } \)

- Output: Assign each feature its q-value, representing the minimum FDR at which the feature is deemed significant.
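The steps above can be sketched in NumPy. This is a simplified illustration: π₀ is estimated at a single fixed cutoff (0.5) instead of the bootstrap or smoothing procedure named in the protocol, and the function names are hypothetical. Setting `pi0=1.0` gives Benjamini-Hochberg adjusted p-values.

```python
import numpy as np

def estimate_pi0(pvals, lam=0.5):
    """Share of p-values above lam, rescaled by the width of (lam, 1]; capped at 1.

    Simplification: a single fixed lam. An estimate of 0 (no p-value above lam)
    would make every q-value 0 and should be treated as a warning sign.
    """
    pvals = np.asarray(pvals, dtype=float)
    return min(1.0, float(np.mean(pvals > lam)) / (1.0 - lam))

def q_values(pvals, pi0=None):
    """q-value for each p-value, returned in the original input order."""
    pvals = np.asarray(pvals, dtype=float)
    m = pvals.size
    if pi0 is None:
        pi0 = estimate_pi0(pvals)
    order = np.argsort(pvals)
    ranked = pvals[order]
    # Estimated FDR when rejecting the i smallest p-values: pi0 * m * p_(i) / i.
    fdr = pi0 * m * ranked / np.arange(1, m + 1)
    # The minimum over all thresholds t >= p_(i) is a running minimum from the right.
    q_sorted = np.minimum(np.minimum.accumulate(fdr[::-1])[::-1], 1.0)
    q = np.empty(m)
    q[order] = q_sorted
    return q

q_bh = q_values([0.01, 0.04, 0.03, 0.005], pi0=1.0)  # approximately [0.02, 0.04, 0.04, 0.02]
```

Evaluating the minimum only at the observed p-values is sufficient because the estimated FDR can only decrease at those points.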

Distortion of Distance Metrics

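Distance metrics (e.g., for beta-diversity) used in clustering and ordination behave counter-intuitively in high dimensions. As dimensionality grows, pairwise distances concentrate around a common value, so the contrast between a sample's nearest and farthest neighbors shrinks and the notion of a "nearest neighbor" loses meaning.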

Table 2: Behavior of Common Distance Metrics Under High Dimensionality

| Metric | Formula | High-Dim Behavior in Microbiome Context |

|---|---|---|

| Euclidean | \( \sqrt{\sum_{i=1}^p (x_i - y_i)^2} \) | Distances converge; loses discriminative power for clustering samples. |

| Bray-Curtis | \( \frac{\sum_i \lvert x_i - y_i \rvert}{\sum_i (x_i + y_i)} \) | More robust but still suffers from sparsity-induced inflation. |

| Jaccard (Binary) | \( 1 - \frac{\lvert x \cap y \rvert}{\lvert x \cup y \rvert} \) | Ignores joint absences, but saturates toward 1 when sparse samples share few detected taxa. |

| UniFrac (Phylogenetic) | \( \frac{\sum_i b_i \lvert x_i - y_i \rvert}{\sum_i b_i} \) | Weighted version is more stable; unweighted version is highly sensitive to sparsity. |

Experimental Protocol for Assessing Distance Metric Distortion:

- Data Simulation: Simulate a microbiome count matrix with n samples and p taxa, where p >> n. Introduce a known group structure (e.g., 2 clusters).

- Distance Calculation: Compute a pairwise distance matrix between all samples using the metric under investigation.

- Dimensionality Increase: Incrementally increase p by adding low-abundance/noise taxa.

- Analysis: For each dimensionality level:

- Calculate the ratio of between-cluster to within-cluster distances.

- Perform a PERMANOVA test to check for significant separation between known clusters.

- Output: Plot the distance ratio and PERMANOVA R²/p-value against the number of dimensions. A decline indicates distortion.
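A compact simulation of this protocol is sketched below for the Euclidean metric only; the PERMANOVA step is left out here. Every distributional choice (Poisson counts, the group means, a log1p transform before computing distances) and every function name is an assumption made for illustration.

```python
import numpy as np

def pairwise_euclidean(X):
    """Full matrix of Euclidean distances between the rows of X."""
    sq = (X ** 2).sum(axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * X @ X.T
    return np.sqrt(np.maximum(d2, 0.0))  # clip tiny negatives from rounding

def between_within_ratio(D, labels):
    """Mean between-cluster distance divided by mean within-cluster distance."""
    labels = np.asarray(labels)
    same = labels[:, None] == labels[None, :]
    upper = np.triu(np.ones_like(same, dtype=bool), k=1)  # each pair counted once
    return D[~same & upper].mean() / D[same & upper].mean()

def simulate_ratios(noise_sizes, n_per_group=20, n_signal=20, seed=0):
    """Distance ratio as uninformative low-abundance taxa are appended."""
    rng = np.random.default_rng(seed)
    labels = np.repeat([0, 1], n_per_group)
    n = labels.size
    # Signal taxa: Poisson counts whose mean differs between the two groups.
    means = np.where(labels[:, None] == 0, 20.0, 40.0) * np.ones((n, n_signal))
    signal = rng.poisson(means)
    # Noise taxa: same low-abundance distribution in both groups. Prefixes of one
    # block are reused so that each larger matrix contains the smaller ones.
    noise = rng.poisson(2.0, size=(n, max(noise_sizes)))
    ratios = {}
    for k in noise_sizes:
        X = np.log1p(np.hstack([signal, noise[:, :k]]))
        ratios[k] = float(between_within_ratio(pairwise_euclidean(X), labels))
    return ratios

ratios = simulate_ratios([0, 100, 1000, 5000])
```

A ratio drifting toward 1 as noise taxa are added means between-cluster and within-cluster distances are becoming indistinguishable, which is the distortion the protocol is designed to expose.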

Data Sparsity

Microbiome data is intrinsically sparse (many zero counts), due to biological rarity and technical limits. In high dimensions, sparsity increases, violating assumptions of many statistical models.

Table 3: Sparsity Metrics in Public Microbiome Datasets

| Dataset (Study) | Sample Size (n) | Feature Count (p) | % Zero Entries | Sequencing Depth (Mean Reads/Sample) |

|---|---|---|---|---|

| American Gut Project | >10,000 | ~50,000 (OTUs) | ~97% | Variable (5,000-50,000) |

| Human Microbiome Project (HMP) | 300 | ~5,000 (Species) | ~90% | ~10 Million (WGS) |

| IBD Multi'omics | 130 | ~12,000 (Microbial Genes) | ~85% | ~50 Million (Metagenomic) |

Experimental Protocol for Sparsity-Aware Analysis (Zero-Inflated Gaussian Model):

- Model Specification: For a taxon j across samples i, define a mixed discrete-continuous model:

- Latent variable \( Z_{ij} \sim \text{Bernoulli}(p_{ij}) \) indicates presence/absence.

- \( Y_{ij} \mid (Z_{ij}=1) \sim N(\mu_{ij}, \sigma^2) \) models non-zero abundance.

- \( Y_{ij} \mid (Z_{ij}=0) = 0 \).

- Link Functions: \( \text{logit}(p_{ij}) = X_i^T \alpha_j \) and \( \mu_{ij} = X_i^T \beta_j \), where \( X_i \) are covariates.

- Estimation: Use maximum likelihood estimation via the EM algorithm.

- Inference: Test hypotheses on \( \alpha_j \) (presence probability) and \( \beta_j \) (conditional abundance) separately.
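As a deliberately reduced illustration, the sketch below fits the model for one taxon with no covariates (intercept only). In the formulation above a zero can only arise when the latent indicator is 0, so presence is observed directly and the maximum likelihood estimates have closed forms; an iterative scheme such as EM becomes necessary when a zero may come from either mixture component. The function names and example values are assumptions for this sketch.

```python
import numpy as np

def fit_zig_intercept_only(y):
    """Closed-form ML estimates (presence probability, mean, variance) for one taxon."""
    y = np.asarray(y, dtype=float)
    present = y != 0
    nonzero = y[present]
    p_hat = float(present.mean())      # estimated presence probability
    mu_hat = float(nonzero.mean())     # mean abundance given presence
    sigma2_hat = float(nonzero.var())  # ML variance (divides by the count, not count - 1)
    return p_hat, mu_hat, sigma2_hat

def zig_log_likelihood(y, p, mu, sigma2):
    """Log-likelihood of the two-part model; requires 0 < p < 1 and sigma2 > 0."""
    y = np.asarray(y, dtype=float)
    present = y != 0
    n1 = int(present.sum())
    n0 = y.size - n1
    ll_presence = n1 * np.log(p) + n0 * np.log(1.0 - p)
    resid = y[present] - mu
    ll_abundance = -0.5 * n1 * np.log(2.0 * np.pi * sigma2) - 0.5 * (resid ** 2).sum() / sigma2
    return float(ll_presence + ll_abundance)

# Hypothetical transformed abundances for one taxon across eight samples.
y_demo = [0, 0, 0, 2.0, 3.0, 4.0, 0, 5.0]
p_hat, mu_hat, sigma2_hat = fit_zig_intercept_only(y_demo)  # 0.5, 3.5, 1.25
```

With covariates, the two parts would be fitted as a logistic regression for presence and a linear regression on the non-zero values, which is what allows the separate tests on the presence and abundance coefficients.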

Visualizing High-Dimensional Relationships

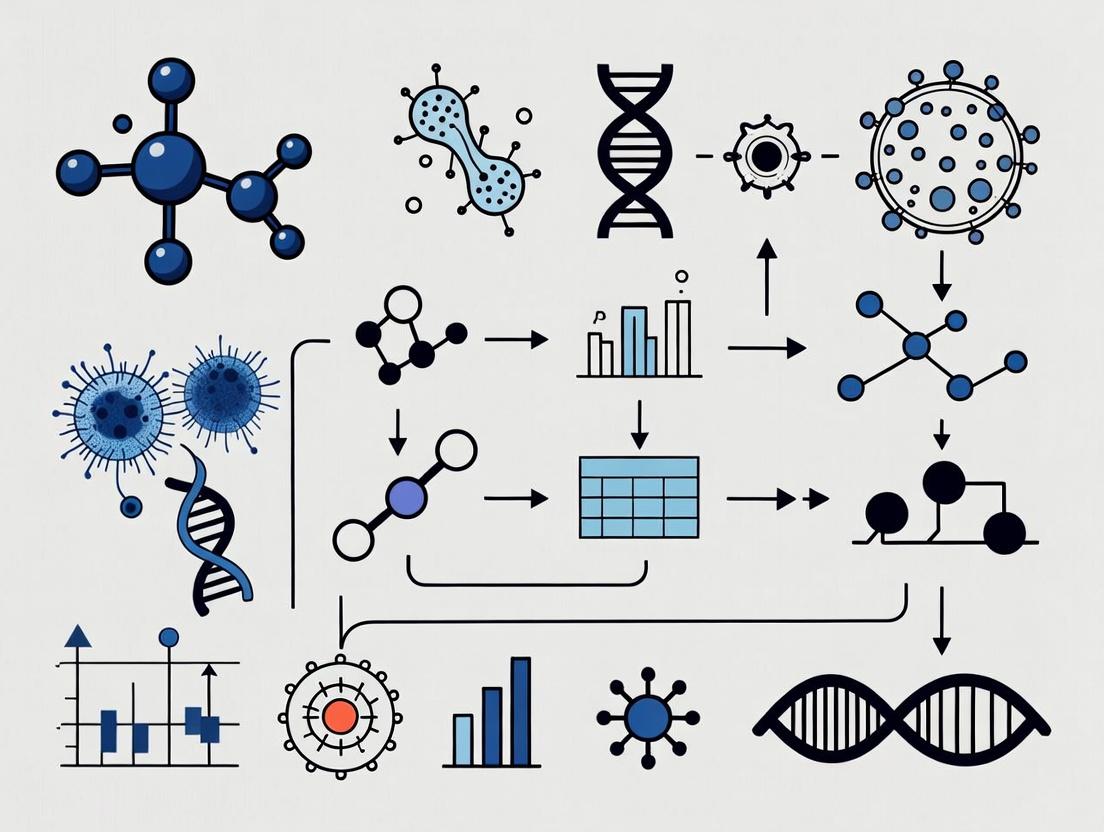

High-Dim Impact & Mitigation Path

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Reagents & Computational Tools for High-Dimensional Analysis

| Item Name | Vendor/Platform | Function in Context |

|---|---|---|

| ZymoBIOMICS Spike-in Control | Zymo Research | Provides known microbial cells/DNA for normalization, addressing compositionality and sparsity in library prep. |

| DADA2 or Deblur Pipeline (Open Source) | GitHub/Bioconda | Amplicon sequence variant (ASV) inference, critical for precise, high-resolution feature definition. |

| Phyloseq R Package | Bioconductor | Centralized object for OTU/ASV table, taxonomy, sample data, and phylogeny; enables integrated sparse data analysis. |

| QIIME 2 Platform | qiime2.org | End-to-end workflow manager for calculating diversity metrics (e.g., Faith's PD for alpha diversity, UniFrac for beta diversity). |

| MaAsLin 2 (Microbiome Multivariable Associations) | Huttenhower Lab | Fits multivariable linear models (with optional random effects) and applies FDR correction to associate sparse, high-dimensional microbial features with metadata. |

| metagenomeSeq R Package | Bioconductor | Implements CSS normalization and zero-inflated Gaussian models explicitly for sparse sequencing data. |

| scikit-learn (Python) | scikit-learn.org | Provides PCA, sparse PCA, and regularization algorithms (Lasso) for dimensionality reduction and feature selection. |

| MMUPHin R Package | Bioconductor | Enables meta-analysis of high-dimensional microbiome studies with batch effect correction. |

Advanced Methodological Workflow

Sparse Microbiome Data Analysis Flow

Key Recommendations for Practitioners

Table 5: Decision Matrix for Addressing High-Dimensional Challenges

| Primary Challenge | Recommended Approach | Rationale | Software/Tool |

|---|---|---|---|

| False Discovery | Employ Storey's q-value or Benjamini-Hochberg FDR after robust filtering. | More powerful than family-wise error rate (FWER) control when p is large. | qvalue R package, statsmodels (Python). |

| Distance Distortion | Use phylogeny-aware, abundance-weighted metrics. | Incorporates biological structure and dampens sparsity impact. | Weighted UniFrac in QIIME 2, Phyloseq. |

| Extreme Sparsity | Apply Compositional Data Analysis (CoDA) principles with careful zero-handling. | Data is relative; simple imputation is invalid. | ALDEx2 (CLR), zCompositions (CZM). |

| p >> n Modeling | Utilize regularized regression (Lasso, Elastic Net). | Performs automatic feature selection to improve generalizability. | glmnet R package, SciKit-learn. |

| Batch Effects | Integrate batch correction as a covariate or use meta-analysis tools. | High-dimensional data is prone to technical confounding. | MMUPHin, Harmony (for embeddings). |

1. Introduction within the Thesis Context

The analysis of microbiome datasets is a quintessential high-dimensionality problem, where the number of features (taxa, genes) far exceeds the number of samples. Within this framework, data integrity is paramount. Systematic biases introduced during sample processing and sequencing confound true biological signal, leading to spurious correlations and invalid inferences. This technical guide details prevalent biases from wet-lab to computational analysis, providing methodologies for their identification and mitigation, which is foundational for robust research and translation in therapeutics.

2. Sequencing Artifacts: Sources and Protocols for Detection

2.1. PCR Amplification Biases

- Source: Differential amplification efficiency due to primer-template mismatches and GC content variation.

- Detection Protocol (qPCR Calibration):

- Standard Preparation: Create a mock microbial community with known, equimolar genomic DNA from 10-20 diverse bacterial strains.

- PCR Amplification: Amplify the mock community using the standard 16S rRNA gene primers (e.g., V4 region, 515F/806R) and cycling conditions used in your study.

- qPCR Assay: For each strain in the mock community, perform a separate, strain-specific qPCR assay on both the pre-amplification genomic DNA mix and the post-amplification product. Use single-copy gene targets.

- Bias Quantification: Calculate the amplification bias factor for strain i as:

Bias_i = (Copies_post-PCR_i / Copies_pre-PCR_i) / (Mean of all ratios). Deviations from 1 indicate bias.
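The bias factor is a ratio of ratios, which a short sketch makes explicit. The copy numbers below are invented for three hypothetical strains and serve only to show the calculation.

```python
import numpy as np

def amplification_bias(copies_pre, copies_post):
    """Per-strain post/pre copy ratio divided by the mean ratio over all strains."""
    pre = np.asarray(copies_pre, dtype=float)
    post = np.asarray(copies_post, dtype=float)
    ratios = post / pre
    return ratios / ratios.mean()

# Hypothetical equimolar mock community of three strains.
pre_pcr = [1e4, 1e4, 1e4]
post_pcr = [2e6, 1e6, 3e6]
bias = amplification_bias(pre_pcr, post_pcr)  # [1.0, 0.5, 1.5]
```

Here the second strain amplifies at half the average efficiency and the third at 1.5 times the average, so community profiles would under-represent the former and over-represent the latter.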

2.2. Batch Effects and Contamination

- Source: Technical variation introduced by different reagent lots, personnel, or sequencing runs, alongside kitome and laboratory contaminants.

- Detection Protocol (Negative Control Analysis):

- Sample Processing: Include multiple negative control samples (e.g., blank extraction buffers, sterile water) in every processing batch.

- Sequencing & Bioinformatic Processing: Sequence controls alongside samples and process through the same pipeline (e.g., DADA2, Deblur).

- Contaminant Identification: Apply statistical models (e.g., R package decontam using the prevalence or frequency method) to identify taxa significantly more abundant in controls than in true samples.

- Batch Effect Statistical Test: Perform Principal Coordinate Analysis (PCoA) on between-sample distances. Use PERMANOVA to test if "Batch" is a significant predictor of variation; a low p-value (<0.05) indicates a statistically significant batch effect, and the PERMANOVA R² indicates how much of the variance it explains.
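The batch-effect test can be illustrated with a bare-bones one-factor PERMANOVA written in NumPy. This is a teaching sketch, not a substitute for established implementations (for example `adonis2` in the R package vegan); the simulated data, the Euclidean distances, and the function name are assumptions.

```python
import numpy as np

def permanova(D, groups, n_perm=999, seed=0):
    """One-factor PERMANOVA on a distance matrix: pseudo-F, R^2, permutation p-value."""
    D2 = np.asarray(D, dtype=float) ** 2
    groups = np.asarray(groups)
    n = D2.shape[0]
    levels = np.unique(groups)
    a = levels.size
    ss_total = D2[np.triu_indices(n, k=1)].sum() / n

    def stats(labels):
        ss_within = 0.0
        for g in levels:
            idx = np.flatnonzero(labels == g)
            sub = D2[np.ix_(idx, idx)]
            ss_within += sub[np.triu_indices(idx.size, k=1)].sum() / idx.size
        ss_among = ss_total - ss_within
        pseudo_f = (ss_among / (a - 1)) / (ss_within / (n - a))
        return pseudo_f, ss_among / ss_total

    f_obs, r2 = stats(groups)
    rng = np.random.default_rng(seed)
    # Count label permutations whose pseudo-F is at least as large as the observed one.
    exceed = sum(stats(rng.permutation(groups))[0] >= f_obs for _ in range(n_perm))
    p_value = (exceed + 1) / (n_perm + 1)
    return float(f_obs), float(r2), float(p_value)

# Hypothetical data: 20 samples, 50 features, second batch shifted on every feature.
rng = np.random.default_rng(3)
batch = np.repeat(["run1", "run2"], 10)
X = rng.normal(size=(20, 50))
X[batch == "run2"] += 2.0
D = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
f_stat, r2, p_value = permanova(D, batch)
```

Reporting R² alongside the p-value matters because, with enough samples, even a negligible batch effect can reach significance.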

Diagram 1: Data Generation Pipeline and Major Bias Sources

Table 1: Quantitative Impact of Common Sequencing Artifacts

| Bias Type | Typical Magnitude of Effect | Primary Detection Method | Common Mitigation Strategy |

|---|---|---|---|

| PCR Amplification Bias | 10-1000x variation in per-taxon efficiency | qPCR on mock communities | Use of reduced-cycle PCR, replicate reactions |

| Index Hopping | 0.1-10% of reads per sample (dual-indexed) | Analysis of unique sample-pair controls | Use of unique dual indexing (UDI) |

| Extraction Kit Contaminants | Can constitute >80% of reads in low-biomass samples | Analysis of negative controls | Computational removal (e.g., decontam), background subtraction |

| Batch Effects | Can explain 20-50% of total variance in PCoA | PERMANOVA on batch labels | Batch correction (e.g., ComBat-seq), randomized block design |

3. Compositional Effects: The Core Mathematical Challenge

Microbiome sequencing data is compositional; counts are constrained to a fixed sum (library size). This induces a negative correlation between features, where an increase in one taxon's relative abundance necessitates an apparent decrease in others.

3.1. Understanding the Spurious Correlation

- Protocol for Simulating Compositional Bias:

- Generate a simple, uncorrelated ground truth matrix of 3 taxa (A, B, C) across 100 samples. Let their absolute abundances be independent log-normal variables.

- Compute the relative abundance (composition) for each sample: A_rel = A / (A+B+C).

- Calculate the correlation (e.g., Spearman) between the absolute abundances of A and B. This reflects the true, biological correlation (~0).

- Calculate the correlation between the relative abundances (A_rel and B_rel). Observe the induced negative correlation, which is purely an artifact of the compositional constraint.
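The simulation described above fits in a few lines. The log-normal parameters, the seed, and the function names are arbitrary choices for this sketch; Spearman correlation is computed from ranks directly to avoid an extra dependency.

```python
import numpy as np

def spearman(x, y):
    """Spearman correlation via ranks; valid here because continuous draws have no ties."""
    rank_x = np.argsort(np.argsort(x))
    rank_y = np.argsort(np.argsort(y))
    return float(np.corrcoef(rank_x, rank_y)[0, 1])

def simulate_closure(n_samples=100, seed=0):
    """Correlation of taxa A and B before and after conversion to relative abundance."""
    rng = np.random.default_rng(seed)
    # Independent absolute abundances for taxa A, B, C (columns 0, 1, 2).
    absolute = rng.lognormal(mean=0.0, sigma=1.0, size=(n_samples, 3))
    relative = absolute / absolute.sum(axis=1, keepdims=True)
    return {
        "absolute": spearman(absolute[:, 0], absolute[:, 1]),
        "relative": spearman(relative[:, 0], relative[:, 1]),
    }

result = simulate_closure(n_samples=100)
```

With only three taxa the induced negative correlation is strong; it weakens as more taxa share the constraint, but it does not vanish.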

3.2. Experimental Design & Analysis Solutions

- Incorporating Internal Standards: Add known quantities of synthetic spike-in cells or DNA (e.g., from organisms absent in the sample) prior to DNA extraction.

- Protocol for Spike-in Normalization:

- Spike-in Selection: Choose 2-3 non-bacterial spikes (e.g., bacteriophage, synthetic genes) at varying, known concentrations.

- Sample Processing: Spike a consistent volume of spike-in mix into each sample before extraction.

- Sequencing & Quantification: Quantify spike-in read counts post-sequencing.

- Biomass Estimation: Model the relationship between expected spike-in molecules and observed reads. Use this model to estimate the absolute microbial load in each sample.
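One simple way to model the spike-in relationship is a straight line on the log-log scale, sketched below. The calibration values are invented, the function names are hypothetical, and a real analysis would fit per sample and check linearity over the dynamic range.

```python
import numpy as np

def fit_spike_in_curve(expected_molecules, observed_reads):
    """Least-squares line: log10(reads) = slope * log10(molecules) + intercept."""
    slope, intercept = np.polyfit(np.log10(expected_molecules),
                                  np.log10(observed_reads), 1)
    return float(slope), float(intercept)

def reads_to_molecules(reads, slope, intercept):
    """Invert the calibration line to estimate input molecules from read counts.

    Zero reads map to zero molecules (NumPy emits a divide warning for log10(0)).
    """
    reads = np.asarray(reads, dtype=float)
    return 10.0 ** ((np.log10(reads) - intercept) / slope)

# Hypothetical sample: three spike-ins at known input levels and their read counts.
spike_molecules = [1e3, 1e4, 1e5]
spike_reads = [10, 100, 1000]
slope, intercept = fit_spike_in_curve(spike_molecules, spike_reads)

# Estimated absolute abundance for two taxa observed in the same sample.
taxon_reads = [500, 2000]
taxon_molecules = reads_to_molecules(taxon_reads, slope, intercept)  # about 5e4 and 2e5
```

Summing the estimates over all taxa gives a per-sample microbial load that can be compared across samples, which relative abundances alone cannot provide.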

Diagram 2: Spurious Correlation Induced by Compositionality

Table 2: Methods for Addressing Compositional Data

| Method Category | Specific Technique/Tool | Underlying Principle | Key Limitation |

|---|---|---|---|

| Log-Ratio Transformations | ALDEx2 (CLR), propr (ALR) | Converts to Euclidean space using log of ratios to a reference. | Choice of denominator (ALR) or geometric mean (CLR) is critical. |

| Probabilistic Modeling | ANCOM-BC, DESeq2 (with care) | Models observed counts while accounting for sampling fraction. | Assumptions about distribution (e.g., negative binomial) may not hold. |

| Incorporating Spike-ins | martian, damage | Uses external controls to estimate absolute biomass. | Added cost; requires careful optimization of spike-in levels. |

| Differential Ranking | ANCOM (W-statistic) | Identifies differentially abundant taxa by testing all log-ratios. | Conservative; yields rank of confidence, not effect size. |

4. The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Role in Bias Mitigation |

|---|---|

| Mock Microbial Community (e.g., ZymoBIOMICS) | Contains defined genomic DNA from known bacteria/fungi. Used as a process control to quantify amplification bias, extraction efficiency, and bioinformatic pipeline accuracy. |

| External RNA Controls Consortium (ERCC) Spike-in Mix | Synthetic, polyadenylated RNA transcripts at known concentrations. Spiked into samples before RNA extraction to normalize for technical variation in metatranscriptomic studies and estimate absolute transcript levels. |

| Unique Dual Index (UDI) Kits (e.g., Illumina IDT) | Indexing primers containing unique dual barcode combinations for each sample. Dramatically reduces index hopping artifacts compared to single or combinatorial indexing. |

| Phage Lambda or Pseudomonas Phage DNA | Non-bacterial DNA used as a spike-in control added prior to DNA extraction. Helps monitor extraction efficiency and can aid in estimating absolute microbial load when used with a standard curve. |

| Inhibitor Removal Reagents (e.g., PVPP, BSA) | Added during DNA extraction to bind and remove humic acids, polyphenols, and other environmental inhibitors that cause PCR bias and reduced sequencing depth. |

| Reduced-Cycle PCR Master Mixes | Specialized polymerase mixes optimized for lower PCR cycle numbers (e.g., 25-30 cycles) to minimize chimera formation and reduce amplification bias while maintaining library yield. |

In microbiome research, high-throughput sequencing (e.g., 16S rRNA amplicon or shotgun metagenomics) generates vast, high-dimensional datasets characterized by extreme sparsity. A majority of entries are zeros, presenting a fundamental analytical challenge: determining whether a zero represents the true biological absence of a microbial taxon (a "true zero") or a failure to detect a present taxon due to technical limitations (a "technical absence" or false zero). This distinction is critical for accurate ecological inference, differential abundance testing, and network analysis, which underpin discoveries in dysbiosis, biomarker identification, and therapeutic development.

Technical zeros arise from multiple stages of the experimental workflow, conflating with genuine biological absences.

Table 1: Primary Sources of Technical Zeros in Microbiome Sequencing

| Source | Stage | Mechanism | Consequence |

|---|---|---|---|

| Low Biomass | Sample Collection | Insufficient starting microbial material. | Stochastic sampling depth; dominance of kit contaminants. |

| Library Preparation | PCR Amplification | Primer bias; stochastic PCR dropout for low-abundance targets. | Non-detection of taxa with mismatched primers or low initial template. |

| Sequencing Depth | Sequencing | Inadequate read coverage for rare community members. | Failure to sample rare taxa (rarefaction effect). |

| Bioinformatic Filtering | Data Processing | Aggressive abundance or prevalence thresholds. | Removal of low-count OTUs/ASVs, inflating sparsity. |

Methodological Framework for Disambiguation

A multi-faceted approach is required to distinguish zero types. The following experimental and computational protocols are essential.

Experimental Controls and Protocols

Protocol 1: Serial Dilution & Spike-in Controls

- Objective: Quantify limit of detection (LOD) and PCR stochasticity.

- Materials: Synthetic microbial community standards (e.g., ZymoBIOMICS Microbial Community Standard).

- Procedure:

- Create a serial dilution series of the standard community across a range relevant to typical sample biomass.

- Spike each dilution into a constant mass of sterile matrix (e.g., buffer or sterile stool) alongside process control samples.

- Extract DNA, prepare libraries, and sequence in the same batch as experimental samples.

- Plot observed abundance vs. expected abundance for each taxon across dilutions. The dilution point at which a taxon consistently drops to zero defines its experimental LOD.
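The last step of Protocol 1 can be sketched in a few lines. This is a minimal illustration, assuming counts are arranged as dilutions × replicates × taxa and that "consistently drops to zero" means the taxon is missing from at least one replicate; the function name `experimental_lod` and the toy numbers are hypothetical.

```python
import numpy as np

def experimental_lod(dilution_factors, counts):
    """Per taxon, return the largest fold-dilution at which the taxon is still
    detected in every replicate (and at every less-dilute point).

    dilution_factors: 1-D sequence such as [1, 10, 100, 1000].
    counts: array of shape (n_dilutions, n_replicates, n_taxa).
    """
    order = np.argsort(dilution_factors)               # least to most dilute
    factors = np.asarray(dilution_factors)[order]
    detected = (np.asarray(counts)[order] > 0).all(axis=1)
    # A taxon "holds" at a dilution only if it also held at all earlier ones.
    holds = np.logical_and.accumulate(detected, axis=0)
    n_ok = holds.sum(axis=0)
    return np.where(n_ok > 0, factors[np.maximum(n_ok - 1, 0)], np.nan)

# Toy data: 4 dilutions, 3 replicates, 2 taxa (abundant taxon, rare taxon).
counts = np.array([
    [[500, 40], [480, 35], [510, 42]],
    [[50, 4], [47, 0], [52, 3]],
    [[5, 0], [6, 0], [4, 1]],
    [[1, 0], [0, 0], [1, 0]],
])
lod = experimental_lod([1, 10, 100, 1000], counts)
print(lod)
```

Here the abundant taxon survives to the 100-fold dilution, while the rare taxon is only reliably detected in the undiluted standard.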

Protocol 2: Technical Replication & Dilution-to-Extinction

- Objective: Assess variance attributable to technical noise.

- Procedure:

- For a subset of samples, perform multiple (n≥3) independent DNA extractions from the same homogenate.

- From each extraction, create multiple library prep replicates.

- Sequence all replicates. Use statistical models (e.g., beta-binomial) to partition variance and identify zeros likely due to technical dropout.

Computational & Statistical Modeling Approaches

Method: Bayesian Probability Modeling for Zero Inflation

Models such as the Zero-Inflated Gaussian (ZIG) or Zero-Inflated Negative Binomial (ZINB) treat observed counts as arising from a mixture of two processes: a point mass at zero (representing technical absence) and a count distribution (representing true abundance, which may also generate zeros). A ZIG implementation is available in metagenomeSeq.
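As a worked illustration of the mixture idea, the sketch below applies Bayes' rule to an observed zero under a ZINB model. The symbols are assumptions introduced here: `pi` is the mixing weight of the point mass, `mu` the negative binomial mean, and `size` its dispersion parameter. In practice these would come from a fitted model rather than being set by hand.

```python
def nb_zero_prob(mu, size):
    """P(Y = 0) for a negative binomial with mean mu and dispersion `size`."""
    return (size / (size + mu)) ** size

def prob_point_mass_zero(pi, mu, size):
    """Posterior probability that an observed zero came from the point mass
    (the 'technical absence' component) rather than the count distribution."""
    return pi / (pi + (1.0 - pi) * nb_zero_prob(mu, size))

# A taxon expected at about 5 reads is unlikely to yield a sampling zero,
# so an observed zero points toward the point-mass component.
print(prob_point_mass_zero(pi=0.3, mu=5.0, size=1.0))
# A taxon expected at about 0.2 reads yields many zeros from sampling alone.
print(prob_point_mass_zero(pi=0.3, mu=0.2, size=1.0))
```

The higher the expected abundance, the less plausible it is that a zero arose from the count distribution, which is the intuition behind using depth and biomass covariates when interpreting zeros.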

Method: Covariate Modeling of Detection Probability

Include sample-specific covariates (e.g., sequencing depth, DNA concentration, batch) in a hierarchical model to explicitly estimate the probability of detection failure. This informs whether a zero is conditionally likely to be technical.

Table 2: Empirical Estimates of Technical Zero Rates in Microbiome Studies

| Study (Year) | Sequencing Platform | Sample Type | Median Sequencing Depth | Estimated % of Zeros Attributable to Technical Dropout* | Key Method for Estimation |

|---|---|---|---|---|---|

| Silverman et al. (2021) mSystems | Illumina MiSeq | Low-biomass lung aspirates | 40,000 reads | 35-60% | Spike-in controls & dilution series. |

| McLaren et al. (2019) PLoS Comput Biol | Illumina HiSeq | Simulated gut communities | 100,000 reads | 15-40% | Modeling based on uneven library sizes. |

| Kaul et al. (2017) Biometrics | 454 Pyrosequencing | Marine sediments | 10,000 reads | 25-55% | Zero-inflated logistic regression on covariates. |

| Synthetic Benchmark Study | Illumina NovaSeq | ZymoBIOMICS Gut Standard | 1,000,000 reads | 10-25% | Deviation from known ground-truth composition. |

*Estimates vary based on biomass, community evenness, and sequencing depth.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for Addressing the Sparsity Problem

| Item | Function | Example Product |

|---|---|---|

| Mock Microbial Community | Provides known composition ground truth for quantifying technical loss and bias. | ZymoBIOMICS Microbial Community Standard (even/uneven). |

| External Spike-in Controls | Distinguishes true absences from extraction/PCR failures. | SynDNA from seqWell (non-biological synthetic DNA sequences). |

| Carrier DNA | Improves library yield from low-biomass samples, reducing stochastic dropout. | UltraPure Salmon Sperm DNA Solution (Thermo Fisher). |

| Inhibition-Removal Kits | Reduces PCR inhibition, a source of false zeros. | OneStep PCR Inhibitor Removal Kit (Zymo Research). |

| High-Fidelity Polymerase | Minimizes PCR bias against specific templates. | KAPA HiFi HotStart ReadyMix (Roche). |

| Duplicate DNA Extraction Kits | Allows extraction-kit bias assessment for critical samples. | DNeasy PowerSoil Pro (QIAGEN) vs. MagAttract PowerSoil DNA Kit (QIAGEN). |

Visualizing the Workflow and Statistical Model

Title: Disambiguation Workflow for Microbiome Zeros

Title: Structure of a Zero-Inflated Count Model

Taming the Dimensional Beast: Advanced Statistical and Machine Learning Strategies

Within microbiome research, high-dimensionality presents a formidable challenge. Sequencing technologies yield datasets with thousands of operational taxonomic units (OTUs), amplicon sequence variants (ASVs), or microbial gene functions per sample, where the number of features far exceeds the number of samples. This "curse of dimensionality" obscures biological signal, increases noise, and risks model overfitting. Dimensionality reduction techniques are therefore indispensable workhorses, transforming sparse, high-dimensional data into lower-dimensional representations suitable for visualization, hypothesis generation, and downstream statistical analysis. This guide provides an in-depth technical examination of four core methods—PCA, PCoA, UMAP, and t-SNE—framed explicitly within the context of microbiome data analytics.

Core Techniques: Mechanisms and Applications

Principal Component Analysis (PCA)

Mechanism: PCA is a linear, unsupervised method that identifies orthogonal axes (principal components) of maximum variance in the data. It performs an eigendecomposition of the covariance matrix (or singular value decomposition on centered data) to project data onto a new subspace defined by the eigenvectors. The first PC captures the greatest variance, the second the next greatest, and so on.

Microbiome Context: PCA is best applied to transformed (e.g., centered log-ratio [CLR] transformation) compositional microbiome data to mitigate sparsity and compositionality issues. It is a staple for initial exploration of beta diversity when using Euclidean distance.
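A minimal NumPy sketch of CLR followed by PCA via SVD is shown below. The pseudocount of 1, the helper names `clr` and `pca_svd`, and the simulated Poisson counts are illustrative assumptions, not a prescribed pipeline.

```python
import numpy as np

def clr(counts, pseudocount=1.0):
    """Centered log-ratio: log counts minus the per-sample mean log count."""
    logx = np.log(np.asarray(counts, dtype=float) + pseudocount)
    return logx - logx.mean(axis=1, keepdims=True)

def pca_svd(X, n_components=2):
    """PCA by SVD of the column-centered matrix (samples x features)."""
    Xc = X - X.mean(axis=0, keepdims=True)
    U, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    scores = U[:, :n_components] * S[:n_components]   # sample coordinates
    explained = S ** 2 / np.sum(S ** 2)               # variance fraction per PC
    return scores, Vt[:n_components], explained[:n_components]

rng = np.random.default_rng(0)
counts = rng.poisson(lam=5.0, size=(12, 40))          # 12 samples, 40 taxa
Z = clr(counts)
scores, loadings, explained = pca_svd(Z)
print(scores.shape, explained)
```

Euclidean distances between rows of `Z` correspond to Aitchison distances between samples (after the pseudocount), which is why PCA on CLR data is often described as a compositional ordination.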

Principal Coordinates Analysis (PCoA / Metric Multidimensional Scaling)

Mechanism: PCoA is a distance-based method. Given a pairwise distance matrix (e.g., Bray-Curtis, UniFrac), it finds a low-dimensional embedding where the Euclidean distances between points approximate the original dissimilarities. This is achieved by performing eigendecomposition on a double-centered distance matrix.

Microbiome Context: PCoA is the visualization cornerstone for ecological distance metrics. It is the standard for visualizing between-sample differences (beta diversity) using phylogenetically aware (UniFrac) or abundance-sensitive (Bray-Curtis) distances.
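The double-centering step can be made concrete with a short NumPy sketch. It assumes Bray-Curtis as the dissimilarity and clips negative eigenvalues to zero, which is one common but not universal way of handling non-Euclidean dissimilarities; the function names are hypothetical.

```python
import numpy as np

def bray_curtis(X):
    """Pairwise Bray-Curtis dissimilarity between rows of a count matrix."""
    X = np.asarray(X, dtype=float)
    num = np.abs(X[:, None, :] - X[None, :, :]).sum(axis=2)
    den = (X[:, None, :] + X[None, :, :]).sum(axis=2)
    return num / den

def pcoa(D, n_components=2):
    """Classical PCoA: eigendecomposition of the double-centered -0.5 * D^2."""
    n = D.shape[0]
    J = np.eye(n) - np.ones((n, n)) / n               # centering matrix
    B = -0.5 * J @ (D ** 2) @ J
    evals, evecs = np.linalg.eigh(B)
    idx = np.argsort(evals)[::-1]                     # largest eigenvalue first
    evals, evecs = evals[idx], evecs[:, idx]
    pos = np.clip(evals[:n_components], 0.0, None)    # assumption: drop negatives
    return evecs[:, :n_components] * np.sqrt(pos), evals

rng = np.random.default_rng(0)
counts = rng.poisson(lam=5.0, size=(8, 30))
D = bray_curtis(counts)
coords, eigenvalues = pcoa(D)
print(coords.shape)
```

When the input distances are truly Euclidean, the embedding reproduces them exactly given enough axes; with Bray-Curtis, some eigenvalues may be negative, so the axes give an approximation.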

t-Distributed Stochastic Neighbor Embedding (t-SNE)

Mechanism: t-SNE is a non-linear, probabilistic method. It first computes probabilities that reflect pairwise similarities in high-dimensional space (using a Gaussian kernel). It then defines a similar probability distribution in low dimensions (using a Student’s t-distribution) and minimizes the Kullback–Leibler divergence between the two distributions via gradient descent.

Microbiome Context: t-SNE excels at revealing local cluster structures (e.g., distinct enterotypes or treatment groups). However, it is computationally intensive, stochastic (requires multiple runs), and inter-cluster distances are not interpretable. Best used after initial PCA/PCoA for fine-grained cluster visualization.

Uniform Manifold Approximation and Projection (UMAP)

Mechanism: UMAP is a non-linear, graph-based technique grounded in topological data analysis. It constructs a high-dimensional weighted graph representing the data's manifold, computes a low-dimensional analogous graph, and optimizes the layout to preserve the topological structure. It uses a cross-entropy loss function for optimization.

Microbiome Context: UMAP often provides a faster, more scalable alternative to t-SNE, with better preservation of global structure. It is increasingly used for visualizing complex microbiome landscapes, integrating with single-cell microbiome data, and as a preprocessing step for clustering.

Quantitative Comparison of Methods

Table 1: Technical Specifications and Performance Metrics

| Feature | PCA | PCoA | t-SNE | UMAP |

|---|---|---|---|---|

| Linearity | Linear | Linear (on distance matrix) | Non-linear | Non-linear |

| Distance Metric | Euclidean | Any (Bray-Curtis, UniFrac, etc.) | Euclidean (typically) | Any custom metric |

| Data Type | Raw/Transformed Abundance | Distance Matrix | Raw/Transformed Abundance | Raw/Transformed Abundance |

| Global Structure | Preserved Exactly | Preserved (as per input distances) | Not Preserved | Better Preserved than t-SNE |

| Scalability | Excellent (O(n³) worst-case) | Good (O(n³) on distance matrix) | Poor (O(n²) exact; O(n log n) with Barnes-Hut) | Good (empirically about O(n^1.14)) |

| Deterministic | Yes | Yes | No (random init) | Largely Yes (with seed) |

| Key Hyperparameter | Number of Components | Number of Components/Distance Metric | Perplexity, Learning Rate | n_neighbors, min_dist |

| Typical Microbiome Use | CLR-transformed data exploration | Beta-diversity visualization | Fine-grained cluster inspection | Large dataset visualization, clustering prep |

Table 2: Recommended Application in Microbiome Analysis Pipeline

| Research Objective | Recommended Method(s) | Rationale |

|---|---|---|

| Initial Exploratory Data Analysis | PCA (on CLR data) | Fast, deterministic, reveals major gradients. |

| Beta Diversity Visualization | PCoA (with Bray-Curtis/UniFrac) | Standard, interpretable, directly uses ecological distances. |

| Identifying Dense Sub-clusters | t-SNE | Superior local structure preservation; reveals tight groupings. |

| Analyzing Large Cohort Datasets (>10k samples) | UMAP | Scalable, balances local/global structure. |

| Integrating with Other 'Omics | UMAP, PCA (for integration) | UMAP handles heterogeneity; PCA for linear factor integration. |

Experimental Protocol: A Standard Microbiome Dimensionality Reduction Workflow

Protocol Title: Comprehensive Dimensionality Reduction Analysis for 16S rRNA Amplicon Data.

1. Preprocessing & Normalization:

- Input: ASV/OTU table (counts), phylogenetic tree (for UniFrac).

- Rarefaction: Optional, controversial. If applied, rarefy to even depth across samples.

- Transformation: Apply a variance-stabilizing transformation.

- For PCA: Use Centered Log-Ratio (CLR) transformation. Add a pseudocount if necessary.

- For PCoA: No transformation on table; distance metric choice handles compositionality.

- For t-SNE/UMAP: Use CLR or relative abundance (%) transformation.

2. Distance/Dissimilarity Calculation (for PCoA):

- Calculate a sample-wise distance matrix. Common choices:

- Bray-Curtis: Abundance-based, robust.

- Weighted UniFrac: Phylogenetic & abundance-aware.

- Unweighted UniFrac: Phylogenetic, presence/absence focused.

3. Dimensionality Reduction Execution:

- PCA: Perform SVD on the CLR-transformed matrix. Extract eigenvalues to assess variance explained per PC.

- PCoA: Perform eigendecomposition on the double-centered D² matrix (Gower's method).

- t-SNE:

- Set perplexity (typically 5-50). For microbiome, start with perplexity = min(30, (n_samples - 1)/3).

- Set learning rate (typically 10-1000). Use default (200) initially.

- Run multiple iterations (e.g., 1000) with different random seeds to assess stability.

- UMAP:

- Set n_neighbors (typically 5-50). Balances local/global structure. Lower values emphasize local clusters.

- Set min_dist (typically 0.01-0.5). Controls cluster tightness. Lower values allow denser packing.

- Use a reproducible random seed.
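The t-SNE settings in step 3 can be sketched with scikit-learn's `TSNE`. This is an illustrative example on random data standing in for a CLR-transformed table; the helper names and the choice of three seeds are assumptions. UMAP is omitted here because it requires a separate package, but the same pattern (fixed hyperparameters, several seeds) would apply to n_neighbors and min_dist.

```python
import numpy as np
from sklearn.manifold import TSNE

def suggested_perplexity(n_samples):
    """Starting heuristic from the protocol: min(30, (n_samples - 1) / 3)."""
    return min(30.0, (n_samples - 1) / 3.0)

def tsne_runs(X, seeds=(0, 1, 2)):
    """Fit t-SNE once per seed so the embeddings can be compared for stability."""
    perplexity = suggested_perplexity(X.shape[0])
    return [
        TSNE(n_components=2, perplexity=perplexity, learning_rate=200.0,
             init="random", random_state=seed).fit_transform(X)
        for seed in seeds
    ]

rng = np.random.default_rng(0)
X = rng.normal(size=(40, 10))        # placeholder for a CLR-transformed table
embeddings = tsne_runs(X)
print([e.shape for e in embeddings])
```

Clusters that appear in only one of the runs should be treated with caution; groupings that persist across seeds are more likely to reflect structure in the data.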

4. Validation & Interpretation:

- Assess consistency with biological covariates (e.g., PERMANOVA on the distance matrix underlying the PCoA).

- For t-SNE/UMAP, run multiple times to ensure qualitative stability of clusters.

- Map feature loadings (PCA) or biplot vectors (PCoA with correlations) to interpret driving taxa.
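For the covariate check in step 4, a one-way PERMANOVA can be written directly from a distance matrix. The sketch below is a bare-bones illustration with hypothetical function names; established implementations (for example in vegan or scikit-bio) handle additional designs and should be preferred for real analyses.

```python
import numpy as np

def pseudo_f(D, labels):
    """One-way PERMANOVA pseudo-F computed from squared pairwise distances."""
    D2 = np.asarray(D, dtype=float) ** 2
    labels = np.asarray(labels)
    n = len(labels)
    groups = np.unique(labels)
    ss_total = D2[np.triu_indices(n, k=1)].sum() / n
    ss_within = 0.0
    for g in groups:
        idx = np.flatnonzero(labels == g)
        sub = D2[np.ix_(idx, idx)]
        ss_within += sub[np.triu_indices(len(idx), k=1)].sum() / len(idx)
    ss_among = ss_total - ss_within
    a = len(groups)
    return (ss_among / (a - 1)) / (ss_within / (n - a))

def permanova(D, labels, n_perm=999, seed=0):
    """Permutation p-value: how often shuffled labels give an F >= observed."""
    rng = np.random.default_rng(seed)
    labels = np.asarray(labels)
    f_obs = pseudo_f(D, labels)
    exceed = sum(pseudo_f(D, rng.permutation(labels)) >= f_obs
                 for _ in range(n_perm))
    return f_obs, (exceed + 1) / (n_perm + 1)

# Toy example: two clearly separated groups of 10 samples each.
rng = np.random.default_rng(0)
pts = np.vstack([rng.normal(0, 1, size=(10, 5)), rng.normal(3, 1, size=(10, 5))])
D = np.sqrt(((pts[:, None, :] - pts[None, :, :]) ** 2).sum(axis=2))
labels = np.array(["control"] * 10 + ["case"] * 10)
f_obs, p_value = permanova(D, labels)
print(f_obs, p_value)
```

Note that the test operates on the full distance matrix rather than on the first two ordination axes, so it is not affected by how much variance the plotted axes capture.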

Visualizing the Analytical Workflow

Title: Microbiome Dimensionality Reduction Analysis Workflow

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Software Packages & Analytical Resources

| Item (Package/Platform) | Function in Dimensionality Reduction Analysis |

|---|---|

| QIIME 2 (Core) | End-to-end platform for calculating distance matrices (e.g., DEICODE for robust Aitchison PCA, phylogenetic distances) and performing PCoA. |

| R (stats, vegan) | The prcomp() function for PCA; cmdscale() and vegan::wcmdscale() for PCoA; vegan::vegdist() for distance matrix calculation. |

| R (phyloseq) | Integrative package for handling microbiome data; wrapper for ordination methods and visualization. |

| R (Rtsne, umap) | Dedicated packages for running t-SNE (Rtsne) and UMAP (umap, uwot) algorithms on abundance data. |

| Python (scikit-bio) | Provides skbio.stats.ordination.pcoa and skbio.stats.distance for robust PCoA and distance calculations. |

| Python (scikit-learn) | Offers PCA, TSNE, and supporting preprocessing modules (e.g., StandardScaler). |

| Python (scanpy) | Single-cell analysis toolkit with highly optimized implementations of PCA, UMAP, and visualization, applicable to microbiome ASV tables. |

| DEICODE (QIIME 2 plugin) | Specifically performs robust Aitchison PCA (a form of RPCA) on sparse, compositional microbiome data, addressing zeros effectively. |

| GUniFrac R Package | Computes generalized UniFrac distances, a flexible metric for PCoA input. |

| MicrobiomeAnalyst | Web-based platform with point-and-click interfaces for performing PCA, PCoA, and t-SNE. |

Selecting the appropriate dimensionality reduction workhorse is critical for illuminating patterns within high-dimensional microbiome datasets. PCA provides a linear, interpretable baseline. PCoA remains the gold standard for visualizing ecological distances. t-SNE and UMAP offer powerful non-linear alternatives for discerning complex cluster topologies. The choice hinges on the specific biological question, data characteristics, and analytical goals. Employing these methods in a complementary, hypothesis-driven manner—grounded in solid preprocessing and rigorous statistical validation—is paramount for advancing research in microbiome science and its translation into therapeutic development.

The analysis of microbiome datasets, typically generated via high-throughput 16S rRNA gene sequencing or shotgun metagenomics, is fundamentally challenged by high dimensionality. Data often comprise thousands of operational taxonomic units (OTUs), amplicon sequence variants (ASVs), or functional pathways across a relatively small number of biological samples (n << p problem). This scale exacerbates risks of overfitting, spurious correlations, and computational inefficiency. Effective feature selection, the process of identifying a subset of relevant, discriminatory microbial taxa or genes, is therefore critical for building robust predictive models, generating interpretable hypotheses, and discovering validated biomarkers for health, disease, and therapeutic response.

Core Feature Selection Methodologies: A Technical Guide

Feature selection methods are broadly categorized into Filter, Wrapper, and Embedded approaches. Each presents distinct trade-offs between computational cost, model dependency, and risk of overfitting.

Table 1: Comparison of Core Feature Selection Methodologies

| Method Category | Key Algorithms/Techniques | Mechanism | Advantages | Disadvantages | Best For |

|---|---|---|---|---|---|

| Filter Methods | Wilcoxon rank-sum, Kruskal-Wallis, DESeq2 (for counts), ANCOM-BC, LEfSe | Ranks features by univariate statistical association with outcome, independent of classifier. | Fast, scalable, model-agnostic, reduces overfitting risk. | Ignores feature interactions, may select redundant features. | Initial screening, large-scale datasets (>10k features). |

| Wrapper Methods | Recursive Feature Elimination (RFE), Sequential Forward/Backward Selection | Uses predictive model performance to guide subset search. | Considers feature interactions, often finds high-performing subsets. | Computationally intensive, high risk of overfitting to small samples. | Moderate-sized datasets where model performance is paramount. |

| Embedded Methods | LASSO, Elastic Net, Random Forest (Gini importance), Boruta | Feature selection is built into the model training process. | Balances performance and efficiency, models interactions. | Model-specific, may be complex to tune. | Most general-purpose predictive modeling tasks. |

| Stability Selection | Combined with LASSO or RF, repeated subsampling | Identifies features consistently selected across multiple subsamples. | Reduces false positives, robust to noise. | Computationally heavy, requires careful parameterization. | High-confidence biomarker discovery. |

Detailed Experimental Protocol: A Standardized Workflow for Biomarker Identification

Protocol: Integrated Filter-Embedded Pipeline for Case-Control Microbiome Studies

Objective: To identify a stable, discriminatory set of microbial taxa differentiating two clinical cohorts (e.g., Healthy vs. Disease).

Input: Normalized OTU/ASV table (e.g., from QIIME 2 or mothur), sample metadata with group labels.

Step 1: Preprocessing & Filtering.

- Low-Prevalence Filtering: Remove taxa present in less than 10% of samples in either group.

- Variance Stabilizing Transformation: Apply a transformation like centered log-ratio (CLR) for compositional data or use tools like DESeq2 for raw count data.

Step 2: Initial Filter-Based Screening.

- Apply a non-parametric test (Wilcoxon rank-sum for two groups, Kruskal-Wallis for >2) or a compositional-aware tool like ANCOM-BC.

- Retain features with an adjusted p-value (FDR) < 0.05 and a minimum effect size (e.g., |log2 fold-change| > 1).
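A sketch of the two-group screening step follows, using SciPy's `mannwhitneyu` (the Wilcoxon rank-sum test) and a hand-written Benjamini-Hochberg adjustment. Two assumptions are made for illustration: the input is a CLR-transformed matrix, and the effect size is taken as the difference in mean CLR values converted to log2 units, which is only a rough proxy for a log2 fold-change.

```python
import numpy as np
from scipy.stats import mannwhitneyu

def bh_adjust(pvals):
    """Benjamini-Hochberg adjusted p-values (FDR)."""
    p = np.asarray(pvals, dtype=float)
    m = len(p)
    order = np.argsort(p)
    ranked = p[order] * m / np.arange(1, m + 1)
    ranked = np.minimum.accumulate(ranked[::-1])[::-1]   # enforce monotonicity
    adjusted = np.empty(m)
    adjusted[order] = np.clip(ranked, 0.0, 1.0)
    return adjusted

def screen_features(X, y, fdr=0.05, min_abs_effect=1.0):
    """Indices of features passing both the FDR and effect-size thresholds.

    X: CLR-transformed matrix (samples x features); y: binary labels (0/1).
    """
    X, y = np.asarray(X, dtype=float), np.asarray(y)
    case, control = X[y == 1], X[y == 0]
    pvals = np.array([
        mannwhitneyu(case[:, j], control[:, j], alternative="two-sided").pvalue
        for j in range(X.shape[1])
    ])
    # Assumption: mean CLR difference (natural log) rescaled to log2 units.
    effect = (case.mean(axis=0) - control.mean(axis=0)) / np.log(2)
    keep = (bh_adjust(pvals) < fdr) & (np.abs(effect) > min_abs_effect)
    return np.flatnonzero(keep)

rng = np.random.default_rng(0)
y = np.repeat([0, 1], 20)
X = rng.normal(0.0, 0.5, size=(40, 30))
X[y == 1, 0] += 3.0                      # one truly shifted feature
selected = screen_features(X, y)
print(selected)
```

If the same data are later used to estimate predictive performance, this screening should be run on the training samples only.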

Step 3: Embedded Selection with Regularization.

- Using the filtered feature set, fit a LASSO-regularized logistic regression model.

- Procedure:

- Split data into training (70%) and hold-out test (30%) sets, stratifying by group label.

- On the training set, perform 10-fold cross-validation to identify the optimal regularization parameter (λ) that minimizes binomial deviance.

- Extract the non-zero coefficient features from the model trained at the optimal λ.

- Alternative: Use a Random Forest classifier and select features above a mean decrease in Gini importance threshold.

Step 4: Stability Validation.

- Repeat Step 3 (embedded selection) on 100 bootstrapped resamples of the training data.

- Calculate the selection frequency for each feature.

- Define the final biomarker set as features selected in >80% of bootstraps.
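The bootstrap stability step can be illustrated without any modeling library. The sketch below swaps in a small proximal-gradient (ISTA) solver for L1-penalized logistic regression in place of glmnet, uses a fixed penalty `lam` instead of a cross-validated one, and runs on simulated data; all names and settings are assumptions chosen for brevity.

```python
import numpy as np

def l1_logistic(X, y, lam, n_iter=300):
    """L1-penalized logistic regression fitted by proximal gradient descent."""
    n, p = X.shape
    beta, intercept = np.zeros(p), 0.0
    # Step size 1/L, where L bounds the Lipschitz constant of the gradient.
    step = 4.0 * n / (np.linalg.norm(X, 2) ** 2 + n)
    for _ in range(n_iter):
        z = np.clip(X @ beta + intercept, -30, 30)
        resid = 1.0 / (1.0 + np.exp(-z)) - y           # predicted prob minus label
        intercept -= step * resid.mean()               # intercept is unpenalized
        beta = beta - step * (X.T @ resid) / n
        beta = np.sign(beta) * np.maximum(np.abs(beta) - step * lam, 0.0)
    return beta

def selection_frequencies(X, y, lam=0.1, n_boot=100, seed=0):
    """Fraction of bootstrap resamples in which each feature is selected."""
    rng = np.random.default_rng(seed)
    n, p = X.shape
    hits = np.zeros(p)
    for _ in range(n_boot):
        idx = rng.integers(0, n, size=n)               # resample with replacement
        hits += l1_logistic(X[idx], y[idx], lam) != 0
    return hits / n_boot

rng = np.random.default_rng(0)
X = rng.normal(size=(120, 20))
prob = 1.0 / (1.0 + np.exp(-3.0 * (X[:, 0] - X[:, 1])))   # features 0, 1 matter
y = (rng.random(120) < prob).astype(float)
freq = selection_frequencies(X, y)
stable = np.flatnonzero(freq > 0.8)                    # the >80% rule from Step 4
print(freq.round(2), stable)
```

Features that enter the model only in a minority of resamples are the ones most likely to be artifacts of a particular sample split.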

Step 5: Performance Assessment.

- Train a final, non-regularized model (e.g., logistic regression) on the full training set using only the stable biomarker features.

- Evaluate its classification performance (AUC-ROC, sensitivity, specificity) on the held-out test set.

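- Note: Performance on the test set is an unbiased estimate of the biomarker panel's predictive power only if the test samples played no part in feature selection. The filtering and screening in Steps 1-2 should therefore also be restricted to the training set.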

Diagram Title: Feature Selection & Biomarker Validation Workflow

Advanced Considerations & Current Tools

Compositionality: Microbiome data are compositional (sum-constrained). Methods like ANCOM (Analysis of Composition of Microbiomes), ALDEx2, and Songbird are explicitly designed for this property, making them superior to standard statistical tests for differential abundance.

Longitudinal Data: For time-series data, feature selection must account for within-subject correlation. Tools like MMUPHin (for meta-analysis) and NBZIMM (negative binomial and zero-inflated mixed models) enable covariate-adjusted, longitudinal differential abundance analysis.

Integration with Multi-omics: Identifying biomarkers across data layers (e.g., taxa, metabolites, host transcripts) requires integrative methods like DIABLO (Data Integration Analysis for Biomarker discovery using Latent cOmponents) or sPLS-DA (sparse Partial Least Squares Discriminant Analysis).

Diagram Title: Logical Framework for Biomarker Discovery

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools & Reagents for Feature Selection Experiments

| Item / Solution | Function / Purpose | Example Product/Software |

|---|---|---|

| DNA Extraction Kit (Stool) | Standardized microbial genomic DNA isolation for sequencing. | Qiagen DNeasy PowerSoil Pro Kit, MO BIO PowerLyzer PowerSoil Kit. |

| 16S rRNA Gene PCR Primers | Amplify hypervariable regions for taxonomic profiling. | 515F/806R (V4), 27F/338R (V1-V2). |

| Quantitative PCR (qPCR) Master Mix | Absolute quantification of specific bacterial taxa post-discovery. | SYBR Green or TaqMan-based assays. |

| Bioinformatics Pipeline | Process raw sequences to feature table (OTUs/ASVs). | QIIME 2, mothur, DADA2. |

| Statistical Programming Environment | Implement feature selection algorithms and analysis. | R (phyloseq, microbiome, caret, glmnet), Python (scikit-learn, SciPy). |

| Compositional Data Analysis Tool | Differential abundance testing accounting for compositionality. | ANCOM-BC R package, ALDEx2, Songbird. |

| Stability Selection Package | Implement robust selection via subsampling. | stabs R package, scikit-learn RFECV (recursive feature elimination with cross-validation). |

| Data Visualization Library | Visualize results (volcano plots, ROC curves, cladograms). | ggplot2 (R), matplotlib/seaborn (Python), Graphviz. |

High-dimensional microbiome datasets, characterized by a vast number of operational taxonomic units (OTUs) or amplicon sequence variants (ASVs) (p) relative to a small sample size (n), present significant challenges for predictive modeling. This "curse of dimensionality" leads to model overfitting, poor generalization, and difficulty in identifying truly predictive microbial features. Regularization techniques—LASSO, Ridge, and Elastic Net—are essential for constructing robust, interpretable, and generalizable models from such data, enabling advancements in research linking the microbiome to health, disease, and therapeutic response.

Core Regularization Methods: Theory and Application

Regularization modifies the loss function to penalize model complexity by shrinking the magnitude of regression coefficients.

Objective Function (General Form):

Minimize: Loss(y, ŷ) + λ * Penalty(β)

Ridge Regression (L2 Regularization)

- Penalty Term: λ * Σ(βj²) for j=1 to p.

- Effect: Shrinks coefficients toward zero but rarely sets them to exactly zero. It handles multicollinearity well by distributing weight among correlated features.

- Use Case: When most features are expected to have some small, non-zero effect. Commonly used in microbiome studies for de-noising and stabilizing predictions.

LASSO (Least Absolute Shrinkage and Selection Operator - L1 Regularization)

- Penalty Term: λ * Σ|βj| for j=1 to p.

- Effect: Can drive coefficients to exactly zero, performing automatic feature selection. This is crucial for identifying a sparse set of predictive microbial signatures.

- Limitation: With high-dimensional, correlated microbiome data (e.g., co-occurring microbial taxa), LASSO may arbitrarily select one feature from a correlated group.

Elastic Net (L1 + L2 Regularization)

- Penalty Term: λ * [ α * Σ|βj| + (1-α)/2 * Σβj² ]

- Effect: A convex combination of LASSO and Ridge. The α parameter controls the mix (α=1 is LASSO; α=0 is Ridge). It selects variables like LASSO while encouraging grouping effects among correlated variables, a property highly suited for microbiome data where taxa belong to functional clusters.

- Use Case: The preferred method for many microbiome analyses, balancing feature selection and model stability.
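A small numerical example makes the three penalties concrete. It evaluates the Elastic Net penalty as written above for an arbitrary coefficient vector; the function name and the example values are illustrative.

```python
import numpy as np

def elastic_net_penalty(beta, lam, alpha):
    """lam * [alpha * sum|b_j| + (1 - alpha) / 2 * sum b_j^2]."""
    beta = np.asarray(beta, dtype=float)
    l1 = np.abs(beta).sum()
    l2 = np.square(beta).sum()
    return lam * (alpha * l1 + 0.5 * (1.0 - alpha) * l2)

beta = np.array([0.5, -2.0, 0.0])
lasso_like = elastic_net_penalty(beta, lam=1.0, alpha=1.0)   # pure L1
ridge_like = elastic_net_penalty(beta, lam=1.0, alpha=0.0)   # pure L2 (halved)
mixed = elastic_net_penalty(beta, lam=1.0, alpha=0.5)
print(lasso_like, ridge_like, mixed)   # 2.5 2.125 2.3125
```

With this parameterization, α=0 yields (λ/2) * Σβj² rather than the λ * Σβj² given in the Ridge section, so the two forms differ by a constant factor that is absorbed into λ during tuning.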

Quantitative Comparison of Regularization Techniques

Table 1: Comparison of Regularization Methods for Microbiome Data

| Feature / Method | Ridge Regression (L2) | LASSO (L1) | Elastic Net (L1+L2) |

|---|---|---|---|

| Penalty Type | L2 (Coefficient magnitude) | L1 (Coefficient absolute value) | Combined L1 & L2 |

| Feature Selection | No (Dense model) | Yes (Sparse model) | Yes (Sparse model) |

| Handles Correlation | Excellent | Poor (Selects one) | Good (Groups correlated features) |

| Solution Path | Stable, smooth | Variable, can be unstable | More stable than LASSO |

| Key Hyperparameter(s) | λ (Penalty strength) | λ (Penalty strength) | λ (Penalty strength), α (Mixing ratio) |

| Primary Microbiome Use | Prediction stability, de-noising | Identifying sparse signatures | Robust signature discovery with correlated taxa |

Table 2: Typical Hyperparameter Ranges for Microbiome Applications

| Parameter | Description | Common Search Range / Values | Optimization Advice |

|---|---|---|---|

| λ | Overall penalty strength | Log-spaced grid (e.g., 10^-4 to 10^2) | Use cross-validation (CV) to find optimal λ. |

| α | Mixing parameter (Elastic Net only) | [0, 0.1, 0.2, ..., 0.9, 1] | α=0.5-0.9 often works well for microbiome data. |

Experimental Protocol: A Standardized Workflow

Title: Regularized Regression for Microbiome Outcome Prediction

1. Preprocessing & Data Partitioning:

- Input: Normalized microbiome abundance matrix (e.g., from 16S rRNA or shotgun sequencing) and a clinical/continuous outcome vector.

- Normalization: Apply Centered Log-Ratio (CLR) or other compositional data transformation to address sparsity and compositionality.

- Split Data: Divide into Training (70%), Validation (15%), and hold-out Test (15%) sets. Stratify splits if outcome is categorical.

2. Model Training with Nested Cross-Validation (CV):

- Outer Loop (k=5): Assess model performance.

- Inner Loop (k=5): Hyperparameter tuning (λ for Ridge/LASSO; λ & α for Elastic Net).

- Procedure: For each outer fold, the inner CV searches the hyperparameter grid on the training subset. The best model is refit and evaluated on the outer validation fold.
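The nested procedure can be sketched with scikit-learn by wrapping a grid search (inner loop) inside `cross_val_score` (outer loop). Note the naming difference: scikit-learn's `alpha` corresponds to λ in this article and `l1_ratio` to α. The simulated data, grid, and seeds are assumptions for illustration.

```python
import numpy as np
from sklearn.linear_model import ElasticNet
from sklearn.model_selection import GridSearchCV, KFold, cross_val_score

# Simulated p >> n data: 60 samples, 200 features, two of them informative.
rng = np.random.default_rng(0)
X = rng.normal(size=(60, 200))
y = 2.0 * X[:, 0] - 1.5 * X[:, 1] + rng.normal(scale=0.5, size=60)

param_grid = {
    "alpha": np.logspace(-2, 1, 7),        # penalty strength (lambda here)
    "l1_ratio": [0.1, 0.5, 0.9, 1.0],      # L1/L2 mix (alpha here)
}
inner = KFold(n_splits=5, shuffle=True, random_state=1)
outer = KFold(n_splits=5, shuffle=True, random_state=2)

# Inner loop: tune hyperparameters using only the outer-training samples.
search = GridSearchCV(ElasticNet(max_iter=10000), param_grid,
                      cv=inner, scoring="neg_mean_squared_error")

# Outer loop: score the tuned model on folds it never saw during tuning.
outer_scores = cross_val_score(search, X, y, cv=outer, scoring="r2")
print(outer_scores.mean(), outer_scores.std())
```

Because hyperparameters are re-tuned inside every outer fold, the outer scores estimate the performance of the whole tuning procedure, not of one particular λ and α.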

3. Model Evaluation & Interpretation:

- Metrics: For continuous outcomes: Mean Squared Error (MSE), R². For binary outcomes: Area Under ROC Curve (AUC), Accuracy, F1-score.

- Feature Importance: Examine non-zero coefficients in LASSO/Elastic Net models. Validate selected taxa via biological plausibility and association tests.

Diagram: Regularized Modeling Workflow for Microbiome Data

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Toolkit for Regularized Analysis of Microbiome Data

| Item / Solution | Function / Purpose in Analysis |

|---|---|

| R: glmnet package | Industry-standard package for efficiently fitting LASSO, Ridge, and Elastic Net models via coordinate descent. |

| Python: scikit-learn | Provides Ridge, Lasso, and ElasticNet classes with integrated cross-validation (LassoCV, ElasticNetCV). |

| Compositional Data Transform (e.g., CLR) | Preprocessing method to handle the relative nature of sequencing data before regularization. |

| Nested Cross-Validation Script | Custom code or pipeline to implement nested CV, ensuring unbiased performance estimation and hyperparameter tuning. |

| High-Performance Computing (HPC) Cluster | For computationally intensive searches over large hyperparameter grids with high-dimensional data (p >> 10,000). |

| Feature Selection Validation Pipeline | Downstream bioinformatics tools (e.g., LEfSe, MaAsLin2) to cross-check selected microbial features for biological relevance. |

In the high-dimensional, correlated, and compositional context of microbiome research, Elastic Net regularization often provides the most pragmatic balance, offering the feature selection of LASSO with the grouping stability of Ridge. A rigorous nested cross-validation protocol is non-negotiable for obtaining reliable performance estimates and preventing overfitting. By integrating these regularization practices, researchers can distill complex microbial community data into robust, interpretable models that advance our understanding of host-microbiome interactions and accelerate translational discovery.

Research into microbial communities (microbiomes) is fundamentally challenged by high-dimensional data, where the number of measured features (e.g., microbial taxa or genes) vastly exceeds the number of samples. This dimensionality, inherent to sequencing-based studies, violates classical statistical assumptions, leading to spurious correlations, overfitting, and inflated false discovery rates. This whitepaper frames network analysis as a critical, yet nuanced, methodology for inferring meaningful ecological interactions—such as cooperation, competition, and commensalism—from this complex data landscape. Success hinges on rigorous preprocessing, robust statistical corrections, and validation strategies tailored to the p >> n problem.

Core Methodologies and Quantitative Comparisons

Network inference from abundance data relies on diverse algorithms, each with strengths and weaknesses for high-dimensional settings.

Table 1: Core Network Inference Methods for High-Dimensional Microbiome Data

| Method | Principle | Key Strength | Key Limitation for High-D Data | Common Implementation |

|---|---|---|---|---|

| Correlation-based | Pearson/Spearman correlation, SparCC, CCLasso | Computationally simple, intuitive. | Highly prone to spurious correlations from compositionality and outliers. | SparCC.py, ccrepe |

| Regularized Regression | GLM with L1/L2 penalty (e.g., gLasso, MInt) | Models conditional dependencies, controls for other taxa. | Sensitive to tuning parameter selection; assumes specific data distribution. | SPIEC-EASI, huge R package |

| Information-Theoretic | Mutual Information, ARACNE, MRNET | Captures non-linear relationships. | Requires reliable probability density estimation; computationally intensive. | minet R package, parmigene |

| Bayesian | Bayesian Graphical Models, Sparse Bayesian Networks | Incorporates prior knowledge, quantifies uncertainty. | Extremely computationally demanding with many nodes. | BDgraph R package |

| Machine Learning | Random Forest (e.g., GENIE3), Neural Networks | Model-free, captures complex interactions. | High risk of overfitting; results are often less interpretable. | GENIE3 R/Python |

Table 2: Comparative Performance Metrics (Synthetic Benchmark Data)

Benchmark on simulated microbial community data with 200 taxa and 100 samples (n=50 simulations).

| Method | Precision (Mean ± SD) | Recall (Mean ± SD) | F1-Score (Mean ± SD) | Runtime (Seconds) |

|---|---|---|---|---|

| SparCC | 0.22 ± 0.05 | 0.65 ± 0.07 | 0.33 ± 0.05 | 45 |

| gLasso (SPIEC-EASI) | 0.71 ± 0.08 | 0.38 ± 0.06 | 0.50 ± 0.06 | 120 |

| ARACNE | 0.45 ± 0.07 | 0.52 ± 0.08 | 0.48 ± 0.06 | 310 |

| GENIE3 | 0.58 ± 0.09 | 0.55 ± 0.07 | 0.56 ± 0.06 | 890 |

Detailed Experimental Protocol: A Standard gLasso-Based Pipeline

Protocol Title: Inference of Microbial Interaction Networks from 16S rRNA Amplicon Data Using SPIEC-EASI.

I. Input Data Preparation & Normalization

- Sequence Processing: Process raw FASTQ files through DADA2 or QIIME2 to generate an Amplicon Sequence Variant (ASV) table. Remove singletons and ASVs present in <10% of samples.

- Compositional Transform: Apply a centered log-ratio (CLR) transformation to the count data. A pseudo-count of 1 is added to all counts before transformation: CLR(x)_i = ln[x_i / g(x)], where g(x) is the geometric mean of the vector x.

- Covariate Adjustment: Regress out potential confounders (e.g., pH, sequencing depth, host age) using a linear model. Use the residuals for network inference.
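
The transform and adjustment steps above can be sketched in a few lines. This is a minimal illustration using only the standard library: `clr` and `residualize` are hypothetical helper names, and the adjustment is simplified to a single covariate with an intercept, whereas the protocol calls for a linear model with several confounders.

```python
import math


def clr(counts, pseudocount=1.0):
    """Centered log-ratio transform of one sample's count vector."""
    logs = [math.log(c + pseudocount) for c in counts]
    mean_log = sum(logs) / len(logs)  # log of the geometric mean g(x)
    return [v - mean_log for v in logs]


def residualize(y, x):
    """Residuals of y after ordinary least squares on one covariate x.

    Assumption: a single confounder; x must not be constant.
    """
    n = len(y)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    slope = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y)) / sxx
    intercept = mean_y - slope * mean_x
    return [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]


# One sample with four ASV counts (toy values)
transformed = clr([0, 9, 99, 999])

# A taxon whose CLR value is fully explained by pH leaves zero residual
ph = [5.0, 6.0, 7.0, 8.0]
taxon_clr = [2.0 * v + 1.0 for v in ph]
adjusted = residualize(taxon_clr, ph)
```

CLR values sum to zero within each sample, which is a quick sanity check after transformation.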

II. Network Inference with SPIEC-EASI (gLasso)

- Model Selection: Use the `SpiecEasi` R package. Select the `mb` (Meinshausen-Bühlmann) or `glasso` method.

- Stability Selection: Execute the model across 100 subsamples (e.g., 80% of data each) and a range of lambda (sparsity) parameters.

- Network Construction: Select the lambda parameter using the Stability Approach to Regularization Selection (StARS) criterion, which picks the least sparse network whose edge instability across subsamples stays below a preset threshold. The final network adjacency matrix is constructed from edges that appear in >90% of subsampled models.
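
The subsample-and-count logic behind the >90% edge rule can be illustrated without the R package. In the sketch below a simple absolute-correlation cutoff stands in for the gLasso fit, so it shows only the stability bookkeeping and not SPIEC-EASI itself. All function names, the cutoff, and the toy data are assumptions made for illustration.

```python
import random
from itertools import combinations


def pearson(a, b):
    """Pearson correlation of two equal-length lists (0.0 if either is constant)."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / (var_a * var_b) ** 0.5


def stable_edges(data, n_subsamples=100, fraction=0.8,
                 r_cutoff=0.8, keep_above=0.9, seed=0):
    """Edge selection frequency across random subsamples.

    data: list of samples (rows), each a list of transformed taxon values.
    Stand-in model: an edge is 'selected' when |r| > r_cutoff.
    """
    rng = random.Random(seed)
    n, p = len(data), len(data[0])
    size = max(2, int(fraction * n))
    counts = {pair: 0 for pair in combinations(range(p), 2)}
    for _ in range(n_subsamples):
        rows = rng.sample(range(n), size)  # subsample without replacement
        cols = [[data[i][j] for i in rows] for j in range(p)]
        for j, k in counts:
            if abs(pearson(cols[j], cols[k])) > r_cutoff:
                counts[(j, k)] += 1
    freq = {pair: c / n_subsamples for pair, c in counts.items()}
    return {pair for pair, f in freq.items() if f > keep_above}, freq


# Toy data: taxon 1 is a linear function of taxon 0; taxon 2 alternates in sign
data = [[float(i), 2.0 * i + 1.0, (-1.0) ** i] for i in range(10)]
stable, freq = stable_edges(data)
```

In the real pipeline the per-subsample model is the penalized graphical model fitted at a given lambda, and the selection frequencies feed the StARS instability calculation.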

III. Validation & Interpretation

- Topological Analysis: Calculate network properties (degree distribution, clustering coefficient, betweenness centrality) using the `igraph` library.

- Permutation Testing: Generate 1000 randomized networks (null model) by permuting taxon labels. Compare observed edge weights and global properties to the null distribution to assess significance (p < 0.05).

- Visualization & Hub Identification: Visualize the network in Gephi or Cytoscape. Identify hub taxa based on high centrality measures.
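
As a rough illustration of the validation steps, the sketch below computes node degree, picks hubs by degree, and converts a null distribution into an empirical p-value. It is a standard-library stand-in for what `igraph` would provide. The function names and toy values are hypothetical, and degree is used here as the simplest of the centrality measures mentioned above.

```python
from collections import Counter


def degrees(edges):
    """Number of edges touching each node in an undirected edge list."""
    deg = Counter()
    for u, v in edges:
        deg[u] += 1
        deg[v] += 1
    return deg


def hubs(edges, top_k=1):
    """The top_k nodes ranked by degree."""
    return [node for node, _ in degrees(edges).most_common(top_k)]


def empirical_p(observed, null_values):
    """One-sided permutation p-value.

    The +1 in numerator and denominator keeps p above zero when no
    permuted statistic reaches the observed one.
    """
    exceed = sum(1 for v in null_values if v >= observed)
    return (exceed + 1) / (len(null_values) + 1)


# Toy network with four taxa
edges = [("A", "B"), ("A", "C"), ("A", "D"), ("B", "C")]
hub_taxa = hubs(edges)

# Toy null distribution; in practice this would hold the statistic
# recomputed on each of the 1000 label-permuted networks
p_value = empirical_p(8, range(10))
```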

Diagram: Standard Network Inference Workflow

Title: Microbiome Network Analysis Pipeline

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Research Reagent Solutions for Microbiome Network Studies

| Item | Function & Rationale |

|---|---|

| ZymoBIOMICS Microbial Community Standards | Defined mock communities of known composition and abundance. Serves as a critical positive control to benchmark and validate network inference accuracy. |

| DNeasy PowerSoil Pro Kit (QIAGEN) | Gold-standard for high-yield, inhibitor-free microbial genomic DNA extraction from complex samples. Essential for generating consistent, high-quality input data. |

| Illumina NovaSeq 6000 Reagent Kits | Provides the high-throughput sequencing depth required to capture low-abundance taxa, increasing the effective dimensionality and richness of input data. |

| PhiX Control v3 | Sequencing run control for Illumina platforms. Monitors error rates, crucial for accurate ASV calling, which underpins all downstream network analysis. |

| SYNTAX Reference Databases (e.g., SILVA, GTDB) | Curated 16S/18S rRNA gene databases for precise taxonomic classification. Accurate node identity is fundamental for interpreting ecological interactions. |

| R/Bioconductor Packages: phyloseq, SpiecEasi, igraph | Integrated software toolkits for data handling, specific network inference algorithms, and network property calculation/visualization. |

Advanced Considerations: From Correlation to Causation

Correlative networks are a starting point. Emerging methods aim to infer directionality and causality.

- Time-Series & Dynamic Bayesian Networks: Analyzing longitudinal data via packages like `mDSI` or `pulsar` can suggest interaction directionality based on temporal precedence.

- Integrative Multi-Omics Networks: Combining 16S, metagenomics, and metabolomics data (e.g., using the `MMINP` or `Multiomics` R packages) constructs more mechanistic, multi-layer networks linking taxa to functions and metabolites.

- In Silico Knock-Out Simulations: Using the inferred network as a scaffold for genome-scale metabolic modeling (e.g., with `MICOM` or `CarveMe`) allows prediction of keystone species and system-level metabolic shifts upon perturbation.

Network analysis provides a powerful framework for distilling high-dimensional microbiome data into interpretable ecological hypotheses. In a high-dimensional setting, however, all inferred interactions are model-dependent and should be interpreted as potential, rather than proven, biological relationships. A robust pipeline integrating careful normalization, stability-based model selection, and rigorous statistical validation is essential for generating reliable insights applicable to fields like drug development, where modulating microbial interactions is an emerging therapeutic frontier.

Within the broader thesis on the challenges of high dimensionality in microbiome datasets, multi-omics integration emerges as a critical framework for deriving biological insight. The inherent complexity and scale of microbiome data—often comprising millions of taxonomic and functional features from thousands of samples—necessitate advanced computational strategies to link it with host genomics and metabolomics. This guide provides a technical roadmap for such integration, addressing dimensionality reduction, statistical reconciliation, and causal inference.

Core Challenges of High-Dimensionality in Integration

Integrating microbiome data with other omics layers amplifies the standard "large p, small n" problem. Key challenges include:

- Feature Heterogeneity: Taxonomic relative abundances (compositional), SNP matrices (discrete), and metabolite intensities (continuous) exist on different scales and distributions.

- Sparsity: Microbial count data are zero-inflated, complicating correlation-based methods.

- Compositionality: Microbiome data sum to a constant (e.g., sequencing depth), so an increase in one taxon forces apparent decreases in others and creates spurious correlations.

- Biological Lag & Compartmentalization: Microbial metabolites may act distally from their production site, obscuring host-microbe interaction signals.

Methodological Frameworks & Experimental Protocols

Correlation-Based Network Analysis (Discovery Phase)

Protocol: Sparse Multivariate Methods (e.g., Sparse Canonical Correlation Analysis - sCCA)

- Step 1 – Preprocessing: For microbiome data (16S rRNA gene amplicon or shotgun metagenomic), apply center log-ratio (CLR) transformation after adding a pseudocount to address compositionality. For host genomics, use SNP dosages or polygenic risk scores. For metabolomics, apply log-transformation and quantile normalization.

- Step 2 – Dimensionality Reduction: Independently filter low-variance features in each omics layer (e.g., retain top 5,000 microbial species/genes, top 10,000 SNPs, top 1,000 metabolites).

- Step 3 – Integration: Apply sCCA (using the `mixOmics` R package or `sCCA` in Python) to find linear combinations of features from two omics layers (e.g., microbiome and metabolome) that maximally covary. The L1 penalty induces sparsity, selecting a limited number of contributing features from each high-dimensional set.

- Step 4 – Validation: Perform permutation testing (n=1000) to assess significance of the canonical correlations. Use bootstrapping to evaluate stability of selected features.
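
To show how an L1-type penalty produces sparse canonical weights, here is a simplified rank-one sketch in the spirit of penalized matrix decomposition. It alternates soft-thresholded updates on the cross-correlation matrix and treats within-layer covariance as identity. It is not the algorithm implemented in `mixOmics`. The fixed thresholds `lam_x` and `lam_y`, the function names, and the toy data are all assumptions, and constant features are assumed to have been removed in Step 2.

```python
import math


def standardize(matrix):
    """Column-wise z-scores (population SD) for a list-of-rows matrix."""
    n = len(matrix)
    out_cols = []
    for col in zip(*matrix):
        mean = sum(col) / n
        sd = math.sqrt(sum((v - mean) ** 2 for v in col) / n)
        out_cols.append([(v - mean) / sd for v in col])
    return [list(row) for row in zip(*out_cols)]


def soft_threshold(vec, lam):
    """Shrink each entry toward zero by lam; entries within lam of zero become 0."""
    return [math.copysign(max(abs(z) - lam, 0.0), z) for z in vec]


def unit(vec):
    """Rescale a vector to unit Euclidean length."""
    norm = math.sqrt(sum(z * z for z in vec))
    if norm == 0:
        raise ValueError("penalty too large: all weights were shrunk to zero")
    return [z / norm for z in vec]


def sparse_cca(X, Y, lam_x, lam_y, n_iter=50):
    """First pair of sparse canonical weight vectors (u for X, v for Y)."""
    Xs, Ys = standardize(X), standardize(Y)
    n, p, q = len(Xs), len(Xs[0]), len(Ys[0])
    # C[j][k] is the correlation between X feature j and Y feature k
    C = [[sum(Xs[i][j] * Ys[i][k] for i in range(n)) / n for k in range(q)]
         for j in range(p)]
    v = unit([1.0] * q)
    for _ in range(n_iter):
        u = unit(soft_threshold(
            [sum(C[j][k] * v[k] for k in range(q)) for j in range(p)], lam_x))
        v = unit(soft_threshold(
            [sum(C[j][k] * u[j] for j in range(p)) for k in range(q)], lam_y))
    return u, v


# Toy data built from mutually orthogonal +/-1 patterns (8 samples)
w1 = [1, 1, 1, 1, -1, -1, -1, -1]
w2 = [1, 1, -1, -1, 1, 1, -1, -1]
w3 = [1, -1, 1, -1, 1, -1, 1, -1]
w4 = [1, 1, -1, -1, -1, -1, 1, 1]
X = [[w1[i], w2[i], w3[i]] for i in range(8)]                  # 3 "taxa"
Y = [[w1[i] + 0.3 * w2[i], w4[i] + 0.3 * w3[i]] for i in range(8)]  # 2 "metabolites"

u, v = sparse_cca(X, Y, lam_x=0.3, lam_y=0.3)
```

In this toy case the first taxon and first metabolite share a strong signal (correlation about 0.96), while the remaining cross-correlations are about 0.29 or zero. The penalty removes those weaker links, leaving a single non-zero weight in each layer.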

Model-Based Integration for Causal Inference (Hypothesis Testing)

Protocol: Mendelian Randomization (MR) with Microbiome as Exposure/Outcome

- Step 1 – Instrument Variable (IV) Selection: Identify host genetic variants (SNPs) robustly associated (p < 5e-08) with a specific microbial taxon's abundance (exposure) from a prior microbiome genome-wide association study (mGWAS).

- Step 2 – Association Extraction: From a separate cohort, extract the associations of the selected IVs with the host metabolomic trait of interest (outcome).

- Step 3 – Causal Estimation: Perform inverse-variance weighted (IVW) MR analysis to estimate the causal effect of the microbial taxon on the metabolite. Sensitivity analyses (MR-Egger, weighted median) must be conducted to test for pleiotropy.

- Step 4 – Reverse Causation Test: Repeat the MR framework with the metabolite as exposure (using metabolite-associated SNPs) and microbial feature as outcome to rule out reverse causality.
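
The IVW estimate in Step 3 is a weighted average of per-SNP Wald ratios. The sketch below assumes the common fixed-effect form with first-order weights (SNP-exposure effect squared divided by the outcome variance). The function name and the summary statistics are made up for illustration. In practice a dedicated package such as TwoSampleMR would be used, along with the sensitivity analyses listed above.

```python
import math


def ivw_estimate(beta_exposure, beta_outcome, se_outcome):
    """Fixed-effect inverse-variance weighted MR estimate and its standard error.

    beta_exposure: SNP effects on the microbial taxon (exposure)
    beta_outcome:  SNP effects on the metabolite (outcome)
    se_outcome:    standard errors of the SNP-outcome effects
    """
    # Wald ratio per instrument: outcome effect / exposure effect
    ratios = [by / bx for bx, by in zip(beta_exposure, beta_outcome)]
    # First-order weights: inverse variance of each Wald ratio
    weights = [bx ** 2 / se ** 2 for bx, se in zip(beta_exposure, se_outcome)]
    total = sum(weights)
    estimate = sum(w * r for w, r in zip(weights, ratios)) / total
    return estimate, math.sqrt(1 / total)


# Three hypothetical instruments, each implying the same causal ratio of 0.5
estimate, std_err = ivw_estimate(
    beta_exposure=[0.1, 0.2, 0.4],
    beta_outcome=[0.05, 0.1, 0.2],
    se_outcome=[0.01, 0.02, 0.02],
)
```

For the reverse-causation test in Step 4 the same function applies with the roles swapped: metabolite-associated SNPs supply `beta_exposure`, and their effects on the microbial feature supply `beta_outcome`.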

Unified Latent Space Modeling (Systems View)

Protocol: Multi-Omics Factor Analysis (MOFA/MOFA+)

- Step 1 – Data Input: Provide preprocessed matrices (microbiome CLR counts, host SNP dosages, metabolite intensities) as different "views."

- Step 2 – Model Training: The Bayesian framework infers a set of (e.g., 10-15) latent factors that capture shared and specific variations across all omics datasets. It naturally handles missing values.

- Step 3 – Factor Interpretation: Regress factor values against sample metadata (e.g., disease status) to interpret biological drivers. Annotate factors by loading weights to identify key microbial, genetic, and metabolic features contributing to each axis of variation.

- Step 4 – Downstream Prediction: Use the latent factors as low-dimensional covariates in regression models to predict clinical phenotypes, overcoming the original high dimensionality.

Table 1: Comparison of Multi-Omics Integration Methods for High-Dimensional Microbiome Data

| Method | Primary Use Case | Handles >2 Omics Layers | Key Strength | Typical Runtime | Major Software/Package |

|---|---|---|---|---|---|

| Sparse CCA (sCCA) | Pairwise correlation discovery | No | Feature selection via sparsity; interpretable | Minutes to Hours | mixOmics (R), sklearn (Python) |

| Mendelian Randomization (MR) | Causal inference | No | Provides evidence for causality using genetic instruments | Minutes | TwoSampleMR (R), MR-Base |

| MOFA+ | Unsupervised latent factor discovery | Yes | Handles missing data; identifies shared & unique variation | Hours | MOFA2 (R/Python) |

| Integrative NMF (iNMF) | Joint pattern discovery | Yes | Learns coherent patterns across omics; good for clustering | Hours | LIGER (R) |

| Structural Equation Modeling (SEM) | Path-based causal modeling | Yes | Tests complex a priori networks with latent variables | Hours to Days | lavaan (R) |

Table 2: Example Output from a Hypothetical sCCA Analysis Linking Microbiome and Metabolome

| Microbiome Feature (CLR) | Metabolite Feature (log) | Canonical Loading (Microbe) | Canonical Loading (Metabolite) | Permutation p-value |

|---|---|---|---|---|

| Faecalibacterium prausnitzii | Butyrate | 0.85 | 0.91 | 0.003 |

| Bacteroides vulgatus | Succinate | 0.72 | -0.65 | 0.012 |

| Escherichia coli | Indoxyl Sulfate | 0.61 | 0.58 | 0.021 |

| Bifidobacterium longum | Acetate | 0.55 | 0.49 | 0.047 |

Visualizing Relationships and Workflows

Title: Multi-Omics Integration Computational Workflow

Title: Host-Gene-Microbe-Metabolite Signaling Pathway

The Scientist's Toolkit: Research Reagent & Solution Guide

Table 3: Essential Materials for Multi-Omics Integration Studies

| Item | Category | Function & Rationale |

|---|---|---|

| Stool DNA Stabilization Buffer (e.g., OMNIgene•GUT) | Sample Collection | Preserves microbial genomic DNA at ambient temperature, minimizing taxonomic bias from overgrowth. |

| PAXgene Blood RNA/DNA Tubes | Sample Collection | Simultaneously stabilizes host genomic DNA and RNA from blood for host transcriptomic/genomic analysis. |

| Quenching Solution (e.g., Cold Methanol) | Metabolomics | Rapidly halts metabolic activity in fecal/plasma samples to capture an accurate metabolic snapshot. |

| Internal Standard Mix (e.g., for LC-MS Metabolomics) | Metabolomics | A cocktail of stable isotope-labeled metabolites for absolute quantification and LC-MS performance monitoring. |

| Mock Microbial Community (e.g., ZymoBIOMICS) | Sequencing Control | Defined mixture of microbial genomes to assess bias and error in metagenomic wet-lab and bioinformatic pipelines. |

| Human Genomic DNA Standard (e.g., NIST RM 8398) | Genomics Control | Reference material for calibrating host genotyping arrays or sequencing assays. |

| Biocrates AbsoluteIDQ p400 HR Kit | Targeted Metabolomics | Validated kit for quantitative profiling of ~400 metabolites across key pathways, ensuring reproducibility. |

| Cloud Computing Credits (AWS, GCP) | Computational | Essential for scalable processing of high-dimensional datasets and running intensive integration algorithms. |

The study of microbial communities through sequencing generates data of extreme high dimensionality, characterized by thousands of operational taxonomic units (OTUs), amplicon sequence variants (ASVs), or functional pathways per sample, often with sample sizes orders of magnitude smaller. This "high p, low n" problem is the central challenge in microbiome data science, leading to overfitting, spurious correlations, and reduced statistical power. This whitepaper explores emerging deep learning (DL) architectures specifically designed to overcome these challenges by learning hierarchical representations, capturing non-linear interactions, and integrating multi-omic data to recognize robust biological patterns for therapeutic and diagnostic applications.

Core Deep Learning Architectures for High-Dimensional Microbiome Data